Galaxy S5 LTE-A: Battery Life, Performance

by Joshua Ho on August 5, 2014 8:00 AM EST

While I was planning on doing a full review of the GS5 LTE-A, it turns out that relatively little changes between the original Galaxy S5 and this Snapdragon 805 version. For those who aren't familiar with this variant of the Galaxy S5, most of the device stays the same in terms of design, battery size, waterproofing, UI, and camera. What does change is the SoC, modem, and display. The SoC is largely similar to the one in the original Galaxy S5, but with a minor CPU update (Krait 450 vs Krait 400), more memory bandwidth, a faster GPU, and a faster ISP. In addition, the modem goes from category 4 LTE support on a 28HPm process to category 6 LTE support on TSMC's 20nm SoC process, which raises the maximum downlink data rate from 150 Mbps to 300 Mbps. Finally, the display resolution goes from 1080p to 1440p, and we've previously covered the display's surprisingly good calibration and general characteristics.

Battery Life

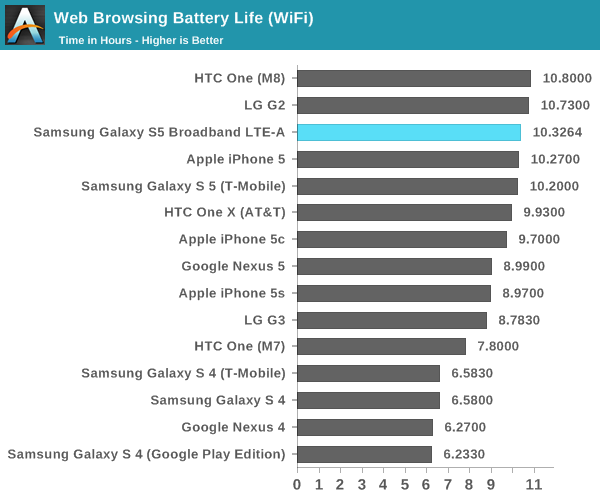

Of course, one of the major questions has been whether battery life has been compromised by the higher resolution display or more powerful GPU. To answer these questions, we turn to our standard suite of tests, which include web browsing battery life and battery life under intensive load. In all cases where the test has the display on, the display is calibrated to 200 nits.

In this test, the GS5 LTE-A is ever so slightly ahead of the Galaxy S5 in WiFi web browsing, which is quite a stunning result, although the difference is within this test's margin of error of no more than 1-2%. The result is especially surprising given how much the move to QHD reduced battery life on the LG G3 compared to the competition. Samsung states that the OLED display uses an improved emitter material, which is probably responsible for the relatively low impact of the QHD display. Gains of this size are possible because OLED emitter materials are still relatively immature compared to LED backlight technology, which leaves more room for efficiency improvements.
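
To make the "within the margin of error" reasoning concrete, here is a minimal Python sketch of the check. The 2% threshold is the upper end of the margin quoted above; the function names and the runtimes in the example are hypothetical placeholders, not the measured results from our charts.

```python
def relative_difference(new_hours: float, old_hours: float) -> float:
    """Fractional change of new_hours relative to old_hours."""
    return (new_hours - old_hours) / old_hours


def within_margin(new_hours: float, old_hours: float, margin: float = 0.02) -> bool:
    """True if two runtimes differ by no more than `margin` (2% by default)."""
    return abs(relative_difference(new_hours, old_hours)) <= margin


# Hypothetical runtimes in hours, not the measured results from this review.
gs5, gs5_lte_a = 10.0, 10.1
print(f"{relative_difference(gs5_lte_a, gs5):+.1%}")  # +1.0%
print(within_margin(gs5_lte_a, gs5))                  # True
```

A 1% lead like this one would not count as a real difference under a 1-2% test-to-test margin.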

The other area where power improvements could come from is the WiFi chip itself. Instead of Broadcom's BCM4354, we see a Qualcomm Atheros QCA6174 solution in this variant. Both support 2x2 802.11ac WiFi for a maximum throughput of 866 Mbps, but there's a possibility that the QCA6174 solution is more power efficient than the BCM4354 we found in the original Galaxy S5.

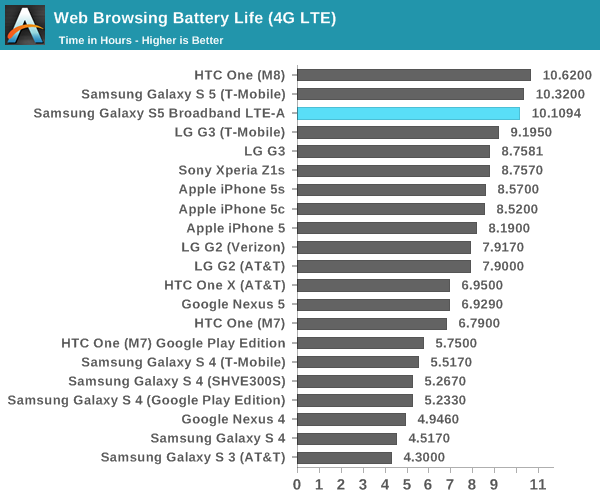

The LTE web browsing test tells a similar story: once again, the Galaxy S5 LTE-A is within the margin of error of the original. As mentioned before, improvements to the display could reduce the effect that higher pixel density has on power consumption. The other element here that could reduce power consumption is the MDM9x35 modem, which is built on a 20nm SoC process.

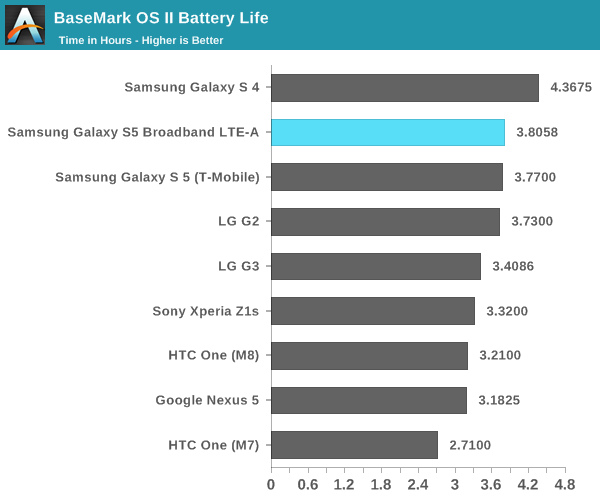

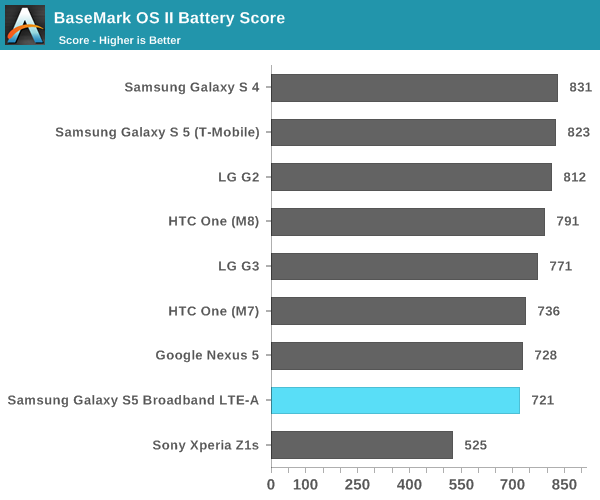

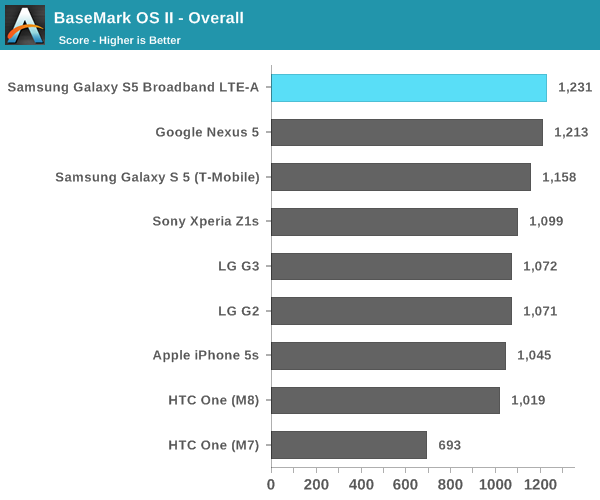

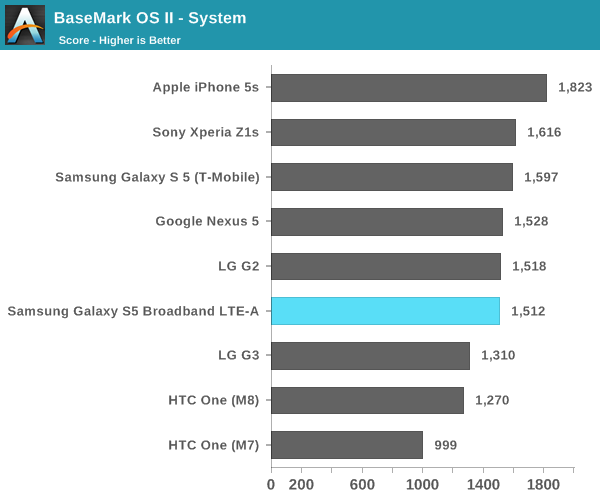

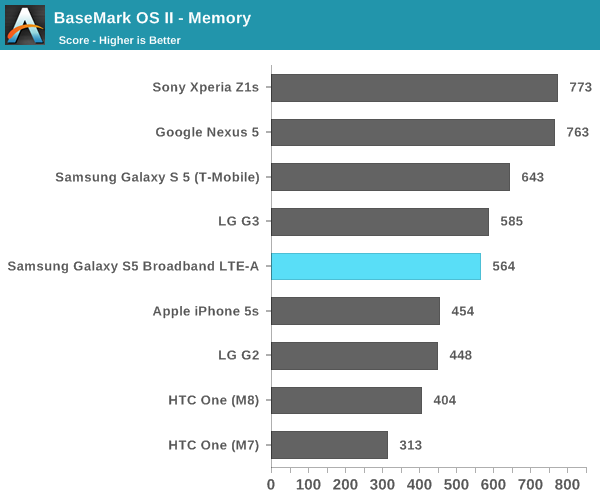

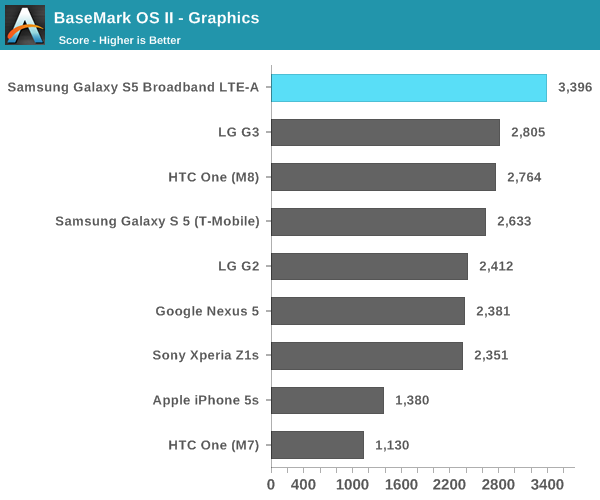

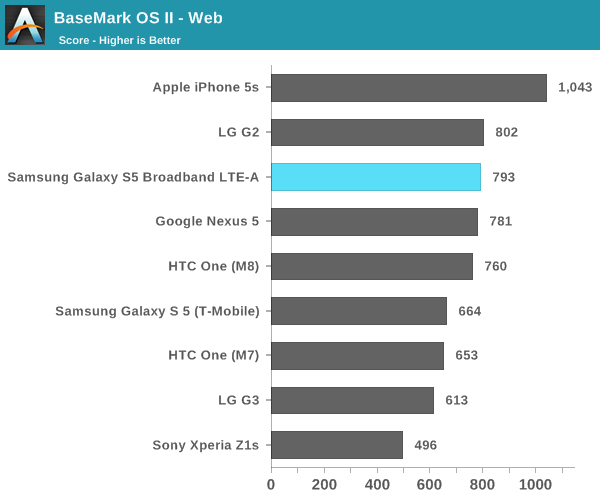

In order to better show the effects of stressing the CPU, GPU, NAND, and RAM subsystems we turn to our compute-intensive tests. For Basemark OS II, once again we see that battery life on the Galaxy S5 LTE-A is effectively identical to the original Galaxy S5. However, it seems that this comes at the cost of worse performance, which is likely the result of running at a higher resolution and higher ambient temperatures.

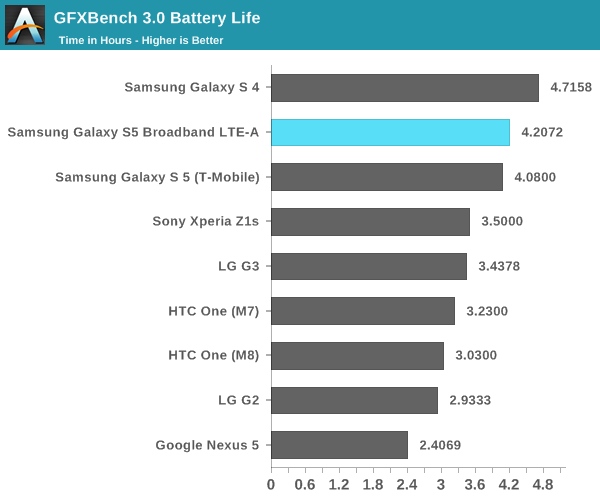

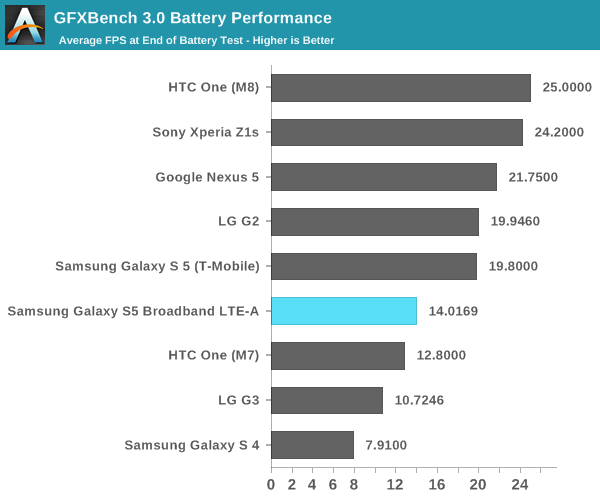

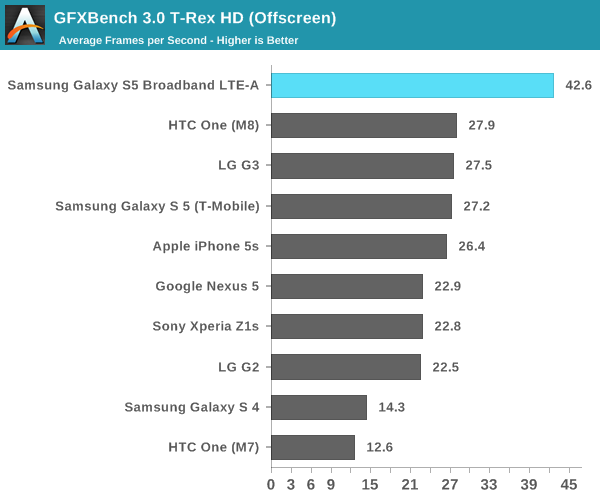

While Basemark OS II mostly simulates intensive conventional smartphone usage, GFXBench is a reasonably close approximation of intensive 3D gaming. Surprisingly, here the GS5 LTE-A is ahead of the GS5. Once we scale the final FPS by resolution, the end-of-run performance works out to around 22 FPS, a bit higher than the original Galaxy S5. Together, these results suggest that the Adreno 420 is a more efficient GPU architecture than the Adreno 330.
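
The resolution scaling mentioned above can be sketched as follows, assuming rendering cost grows linearly with the number of pixels drawn (a simplification, since real GPU workloads are not purely fill-rate bound). The function names are hypothetical. Under this assumption, the roughly 22 FPS 1080p-equivalent figure corresponds to about 12 FPS at the native 1440p resolution, which is an inferred value rather than a separately measured one.

```python
# Pixel counts for the two panel resolutions discussed in this article.
QHD = 2560 * 1440  # Galaxy S5 LTE-A (1440p)
FHD = 1920 * 1080  # original Galaxy S5 (1080p)


def to_1080p_equivalent(fps_native: float, native_pixels: int = QHD) -> float:
    """Scale a frame rate measured at native_pixels to a 1080p-equivalent rate,
    assuming rendering cost grows linearly with the number of pixels drawn."""
    return fps_native * native_pixels / FHD


def from_1080p_equivalent(fps_equiv: float, native_pixels: int = QHD) -> float:
    """Inverse of to_1080p_equivalent: recover the native-resolution frame rate."""
    return fps_equiv * FHD / native_pixels


print(f"{QHD / FHD:.2f}x the pixels")           # 1.78x the pixels
print(f"{from_1080p_equivalent(22.0):.1f} FPS")  # 12.4 FPS at native 1440p
```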

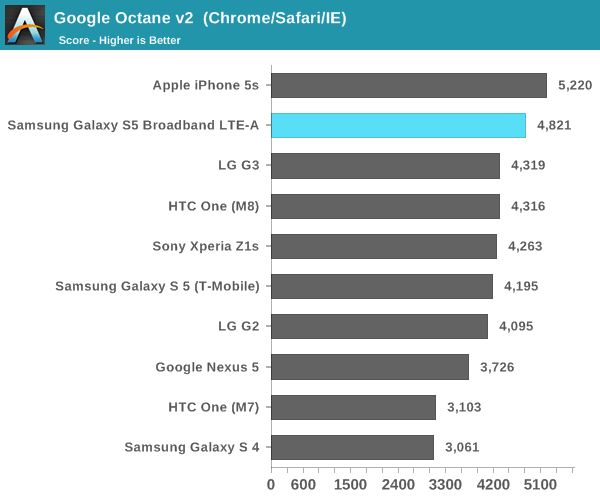

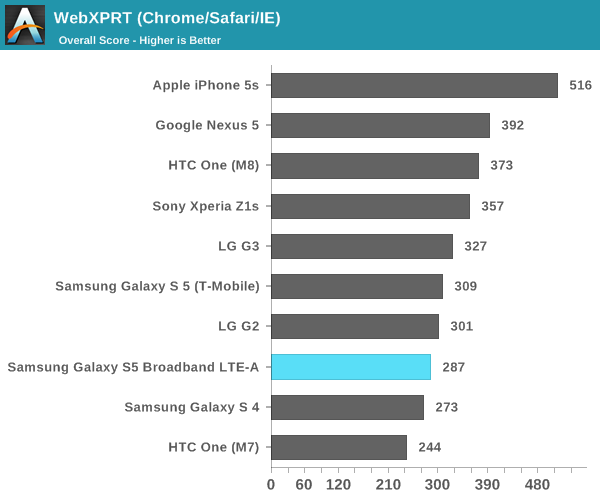

CPU Performance

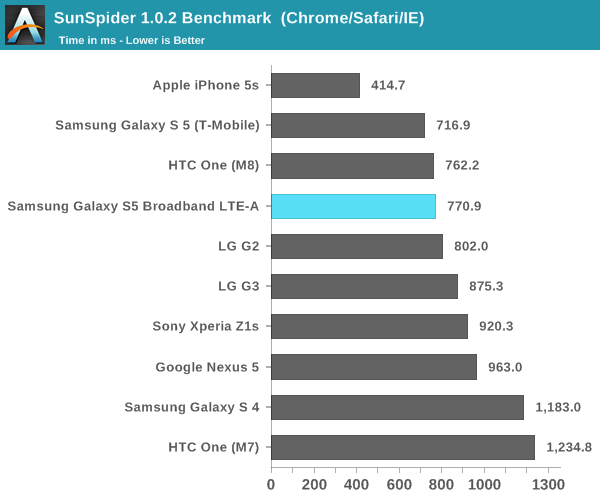

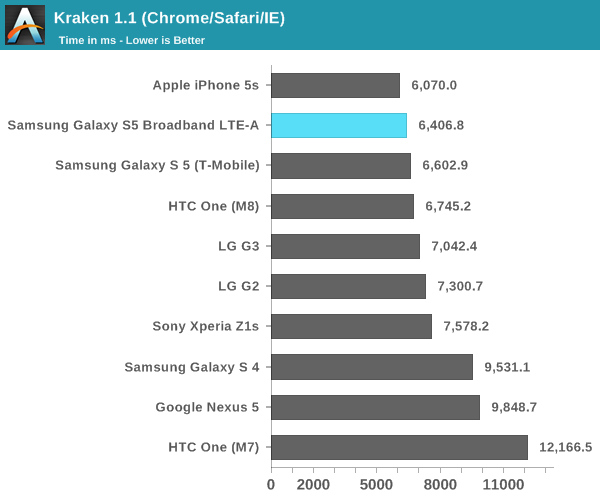

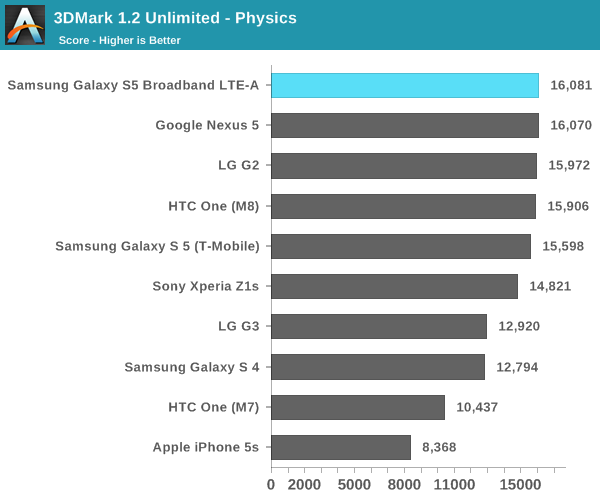

While sustained performance is important, more often than not mobile use cases end up being quite bursty in nature. After all, a phone that lasts "all day" usually only has its screen on for 4-6 hours of a 16-hour day. To get a better idea of performance in these situations, we turn to our more conventional benchmarks. For the most part, I wouldn't expect much improvement here: while it's true that we're looking at a new revision of Krait, the changes seem to be mostly bugfixes and other small tweaks rather than any significant IPC increase.

As seen in the CPU-bound tests, the GS5 LTE-A tends to trade blows with other Snapdragon 801 devices. The only real area where we can see a noticeable lead is the graphics test in Basemark OS II, which gives us a good idea of what to expect for the GPU-bound tests.

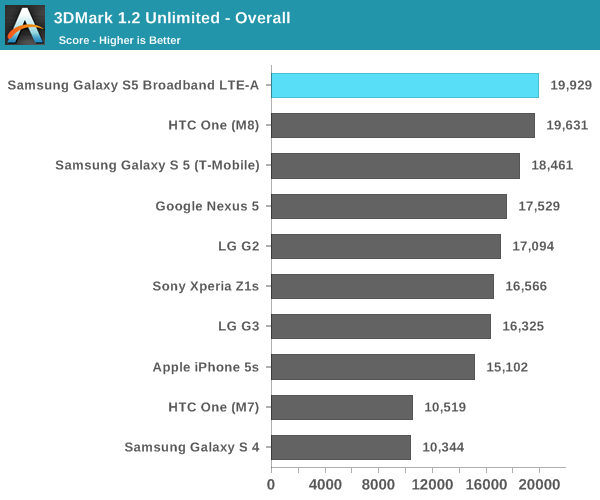

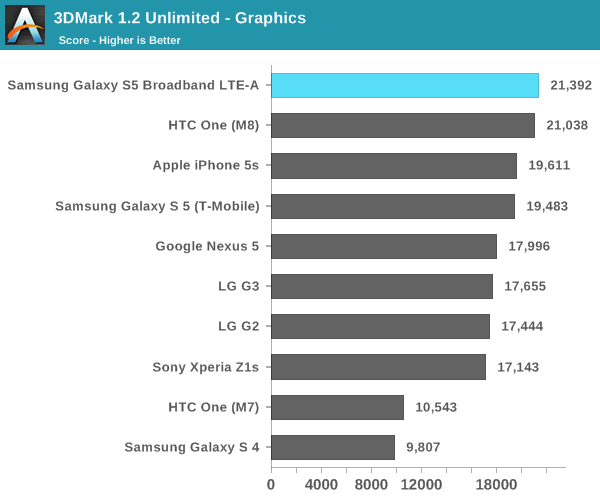

GPU Benchmarks

If there's any one improvement that matters most with Snapdragon 805, it's graphics. While the Adreno 420 gets a minor clock bump from 578 MHz to 600 MHz, this only accounts for a roughly 3.8% increase in performance if performance per clock were identical between the Adreno 330 and Adreno 420. For those unfamiliar with the Adreno 420, it is a new GPU architecture that brings OpenGL ES 3.1 support, along with support for ASTC texture compression and tessellation. As a result, the Adreno 420 should be able to deliver much better graphics in video games and similar workloads, along with better overall performance than the Adreno 330 when driving a higher-resolution 1440p display. While we've seen how Adreno 420 performed in Qualcomm's developer tablet, this is the first shipping implementation of Adreno 420, so it's well worth another look.
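
As a quick check on the clock-speed arithmetic, the sketch below computes the gain a pure clock increase would deliver, assuming identical performance per clock. The function name is hypothetical; the 578 MHz and 600 MHz figures come from the text. The ratio 600/578 works out to about a 3.8% gain, so anything beyond that has to come from the new architecture.

```python
def clock_only_gain(old_mhz: float, new_mhz: float) -> float:
    """Fractional speedup from a clock increase alone, assuming identical
    performance per clock (a perfectly clock-bound workload)."""
    return new_mhz / old_mhz - 1.0


# Adreno 330 (578 MHz) -> Adreno 420 (600 MHz), clocks taken from the text
gain = clock_only_gain(578, 600)
print(f"{gain:.1%}")  # 3.8%
```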

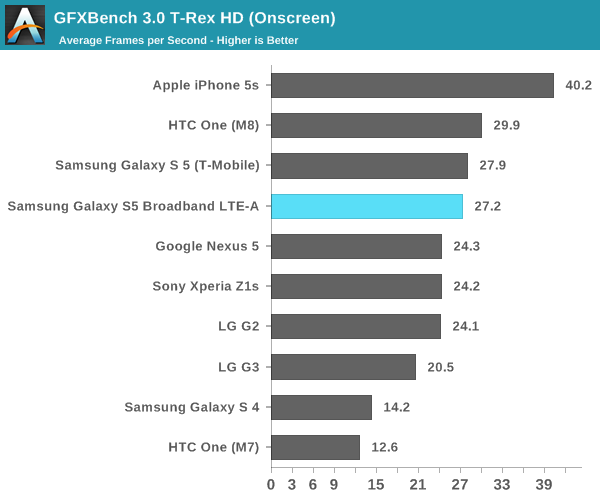

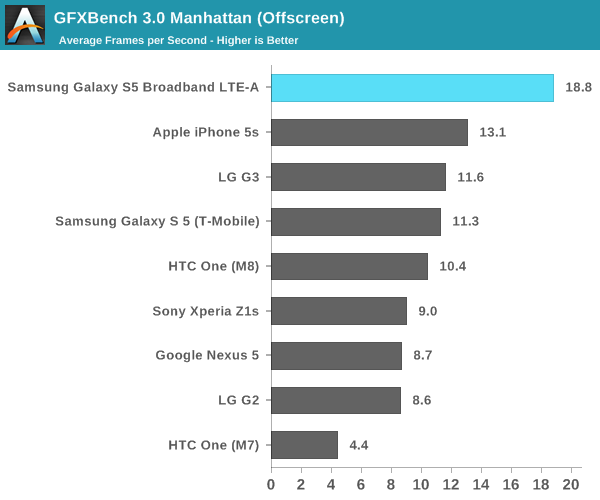

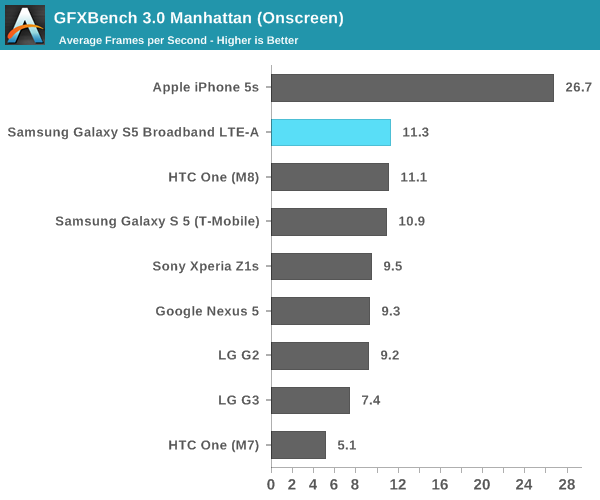

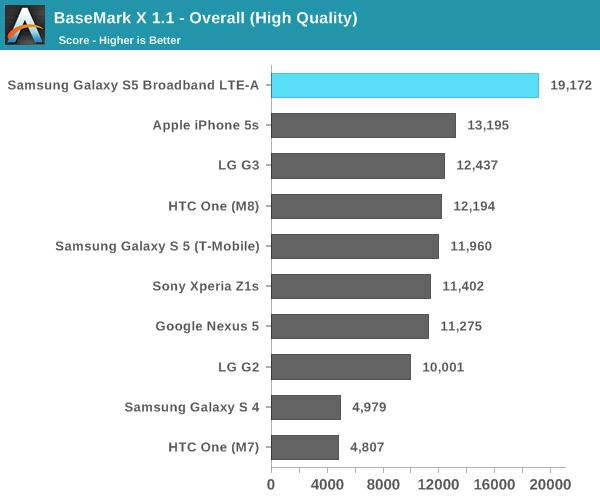

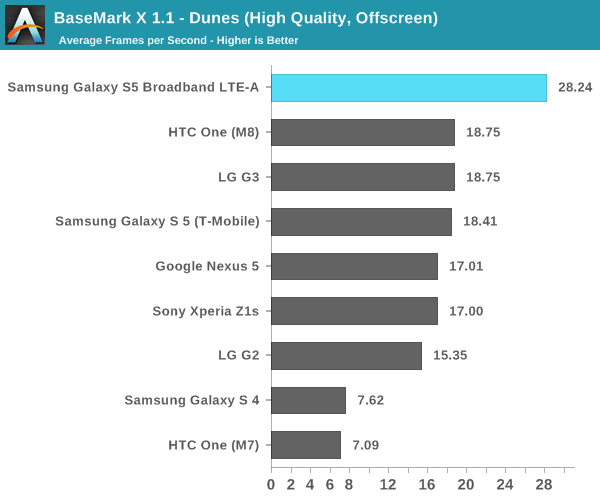

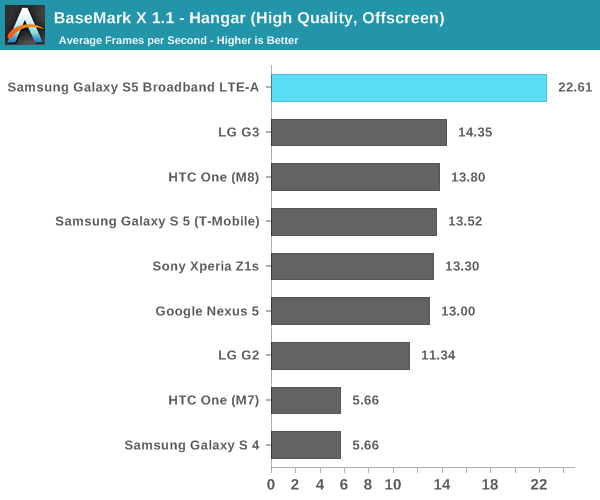

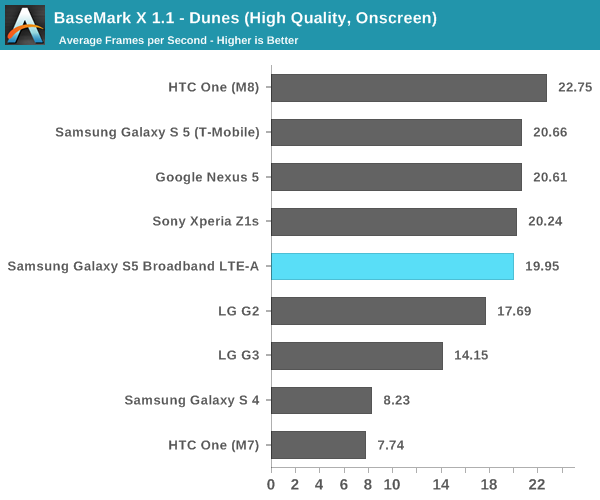

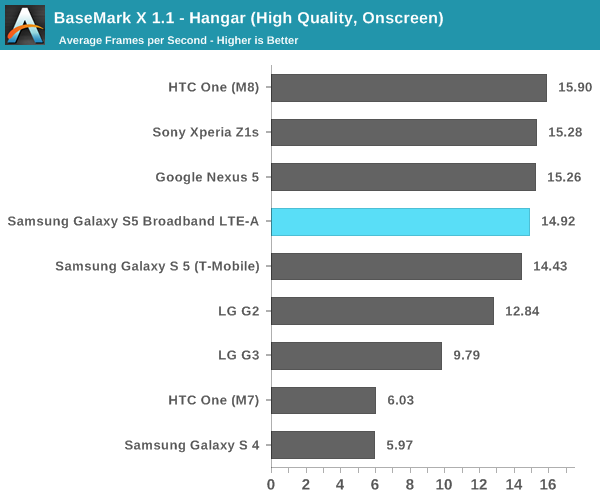

In the off-screen benchmarks, the Adreno 420 generally holds a solid lead over other smartphones, with around a 50-60% performance increase over the Adreno 330. In the on-screen benchmarks, however, the GS5 LTE-A is solidly middle of the pack. The off-screen tests render at a fixed 1080p, while the on-screen tests render at the panel's native resolution, so the GS5 LTE-A has to push about 78% more pixels than its 1080p competitors. For devices with 1440p displays, it seems we will have to wait for Snapdragon 810 and Adreno 430 to see on-screen GPU performance improve.
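
A rough way to reconcile the off-screen and on-screen results is sketched below, assuming frame rate scales inversely with pixels rendered and that the off-screen tests render at 1080p. It first divides the clock bump out of the 50-60% off-screen gain, then estimates on-screen performance at 1440p relative to a 1080p Adreno 330 device. The helper names are hypothetical and the output is an estimate, not a measurement.

```python
# Pixel counts: off-screen tests are assumed to render at 1080p,
# while on-screen tests render at the panel's native resolution.
FHD = 1920 * 1080
QHD = 2560 * 1440

# Adreno 420 vs Adreno 330 clock ratio (600 MHz / 578 MHz), from the text.
CLOCK_RATIO = 600 / 578


def per_clock_gain(offscreen_gain: float) -> float:
    """Speedup remaining after dividing out the clock bump."""
    return (1 + offscreen_gain) / CLOCK_RATIO - 1


def onscreen_ratio(offscreen_gain: float) -> float:
    """Estimated on-screen frame rate of a 1440p Adreno 420 phone relative to a
    1080p Adreno 330 phone, assuming frame rate scales inversely with pixel count."""
    return (1 + offscreen_gain) * FHD / QHD


for gain in (0.50, 0.60):  # the 50-60% off-screen range reported above
    print(f"off-screen +{gain:.0%}: per-clock +{per_clock_gain(gain):.1%}, "
          f"on-screen x{onscreen_ratio(gain):.2f}")
# off-screen +50%: per-clock +44.5%, on-screen x0.84
# off-screen +60%: per-clock +54.1%, on-screen x0.90
```

Under these assumptions the Adreno 420 is roughly 45-54% faster per clock, yet the extra pixels leave it at about 0.84-0.90x the on-screen frame rate of a 1080p Adreno 330 phone, which is consistent with a middle-of-the-pack showing.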

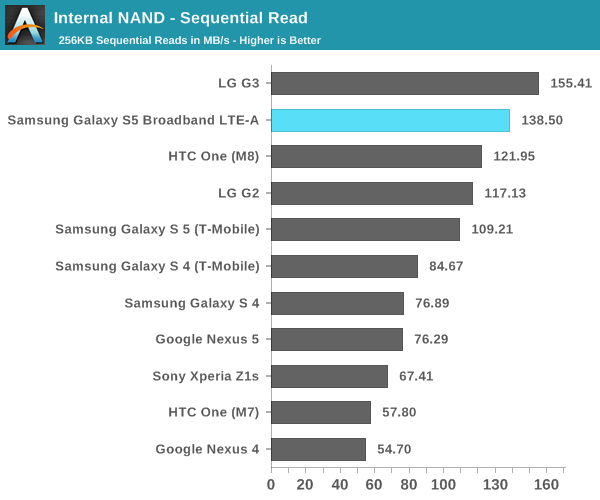

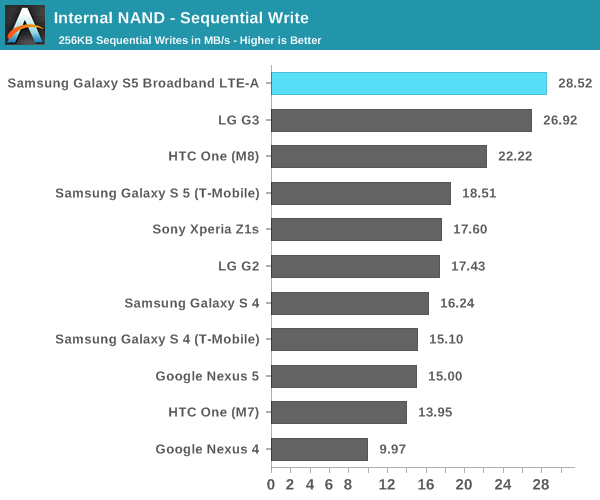

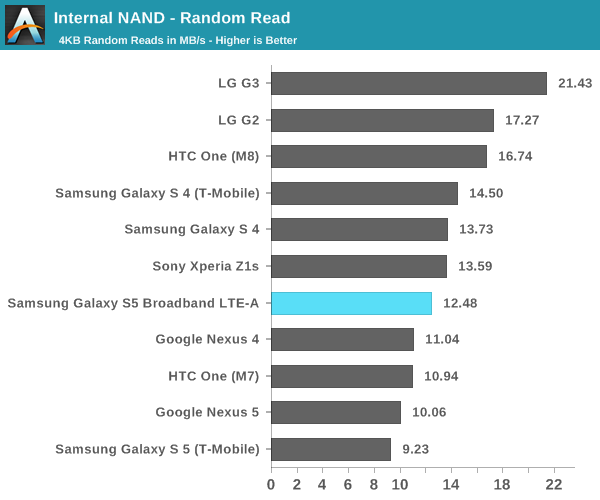

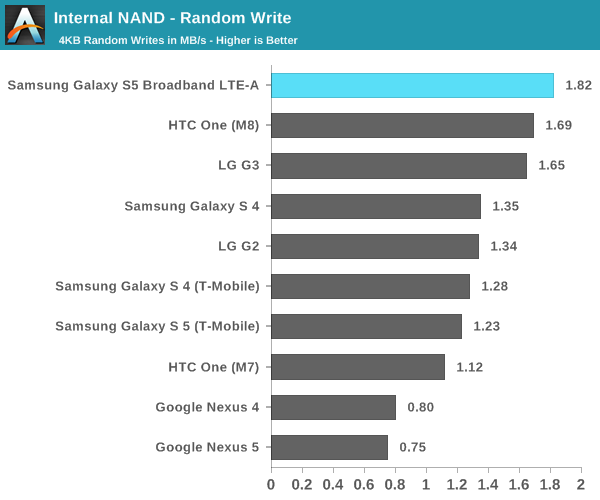

NAND Performance

Since we're still on the subject of the GS5 LTE-A's performance, I wanted to note something else that changed. It seems that Samsung has put higher-performance NAND into this device as well, as there's a noticeable uplift in both sequential and random read and write speeds.

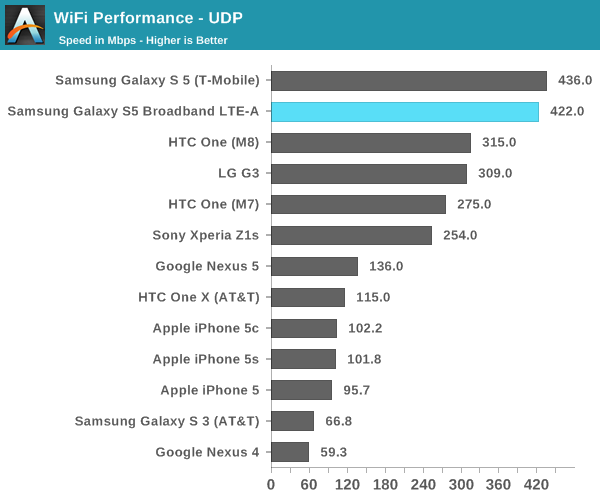

WiFi Performance

While at first I thought that the GS5 LTE-A would use the Broadcom BCM4354 chipset for 2x2 802.11ac WiFi, it turns out that this wasn't the case at all. As I mentioned earlier, this is the first shipping implementation of Qualcomm Atheros' QCA6174 WiFi chip. To see whether it's any faster, I used iperf and an Asus RT-AC68U router to measure peak UDP throughput.

As we can see here, the two are effectively neck and neck and quite hard to tell apart. Both Galaxy S5s are far ahead of their single-stream competition, but two spatial streams only deliver around a 36% increase in performance.
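
To put the 36% figure in context, the sketch below compares it with the ideal gain from adding a second spatial stream. The PHY rates are the standard 802.11ac figures for one and two streams at 80 MHz, MCS 9, with a short guard interval; real UDP throughput also depends on MAC overhead, signal conditions, and the client, so this is only a rough framing.

```python
# Standard 802.11ac PHY rates at 80 MHz, MCS 9, short guard interval.
PHY_1SS_MBPS = 433.3  # one spatial stream
PHY_2SS_MBPS = 866.7  # two spatial streams (the 866 Mbps figure quoted earlier)

ideal_gain = PHY_2SS_MBPS / PHY_1SS_MBPS - 1  # ~100%: a second stream doubles the PHY rate
measured_gain = 0.36                          # ~36% uplift observed in our UDP iperf test

print(f"ideal +{ideal_gain:.0%}, measured +{measured_gain:.0%}, "
      f"about {measured_gain / ideal_gain:.0%} of the theoretical benefit realized")
# ideal +100%, measured +36%, about 36% of the theoretical benefit realized
```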

Final Words

While there's been a lot of discussion over the impact of 1440p displays on battery life and performance, it seems that Samsung has managed to make the move to 1440p without losing performance or battery life for the most part. Of course, there's always the question of how much better battery life could be if the same technologies were applied to a 1080p panel. That comes back to whether the higher resolution is noticeable at all. To some extent, the answer is yes, and it becomes much more noticeable in VR use. In normal usage, however, it's not immediately noticeable unless one has good or even great vision.
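
For reference, pixel density follows from the panel's diagonal pixel count divided by its diagonal size. The sketch below assumes a 5.1-inch panel, the Galaxy S5's screen size; the helper name is hypothetical.

```python
import math


def ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per inch: diagonal resolution in pixels divided by diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


DIAGONAL_IN = 5.1  # assumed panel size (Galaxy S5)
for name, (w, h) in {"1080p": (1920, 1080), "1440p": (2560, 1440)}.items():
    print(f"{name}: {ppi(w, h, DIAGONAL_IN):.0f} ppi")
# 1080p: 432 ppi
# 1440p: 576 ppi
```

The move from 1080p to 1440p therefore raises linear pixel density by a third at the same screen size, from about 432 ppi to about 576 ppi.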

Outside of conventional battery life and performance tests, there are still a few more things to cover. I've noticed that there's a new Sony IMX240 camera sensor in the GS5 LTE-A, and I've been working on a more in-depth look at the MDM9x35 modem. Overall, we can see that this is a relatively straight upgrade of the original Galaxy S5. This will also give us a good idea of what to expect for future high-end Samsung devices launching in the next few months.

32 Comments


repoman27 - Tuesday, August 5, 2014

Any chance you'll instrument the GS5 LTE-A to measure power consumption directly as Anand did with the Galaxy S 5 (T-Mobile) and GS4 (AT&T)? I'm curious to see the difference in device power at idle with the screen on, to see how much of the battery life parity is due to overall platform power reduction or just the relative efficiency of the screen itself given the resolution.

mike55 - Tuesday, August 5, 2014

I would think a resolution increase on an OLED panel wouldn't raise power consumption as much as a comparable resolution increase on an LCD, due to the emissive nature of the technology. On an LCD with shrinking pixels, more light from the backlight is blocked, so the backlight needs more power to achieve the same brightness. On an OLED you have more of the smaller pixels, which have a similar luminous efficiency to the old larger pixels. I suppose the only power losses would be from the higher resistance of the smaller wires controlling the pixels, and perhaps some other small power increases I'm not considering. Of course, there's still the same increase in processing power needed to drive more pixels.

lilmoe - Tuesday, August 5, 2014

I'd prefer they make improvements to 1080p on a ~5" screen rather than move to a higher-resolution panel. I just don't see the need for higher. The cons far outweigh the pros IMHO. Samsung can build a 1080p AMOLED panel with a near-RGB stripe arrangement (like in the Note 2). The clarity of the screen would drastically improve there, without needing to strain the internals. Not only does a higher resolution demand more CPU and GPU horsepower, but also more bandwidth and more memory (and more internet bandwidth if the services are DPI-aware). I'd rather gain considerably more performance and battery life with each iteration of a smartphone than spend that improvement on powering gimmicky features with diminishing returns. SoCs have improved so much in the past couple of years, yet we barely felt any difference with each iteration, simply because those SoCs are more strained than their predecessors. It's such a waste. I can get even more technical about this, but I'm tired of explaining myself.

Really, 1080p is not only excellent, but absolutely amazing and mind-blowing on a screen that size. Let's perfect what we have first before moving to the next (unneeded) level, sigh. Apple got away with 640p for more than 4 years, plus who knows how many more in the future. They took their time to perfect their internals to work with that resolution and DPI, to the point that their smartphone is stupidly fluid. I see absolutely no reason why Samsung couldn't get away with doing the same. Mind you, the panel on the S5 is leaps and bounds better than the one on any iPhone.

Trust me, people. Standardizing 1080p for smartphones would do a HUGE favor for the industry and the ecosystem. Having fewer things to worry about would let SoC manufacturers concentrate on what counts, not just on optimizing the next big SoC to drive even more pixels...

repoman27 - Tuesday, August 5, 2014

In what way does retarding the development of one particular component help the industry as a whole move forward? Screen resolution has doubled roughly 3 times in the past 20 years, while mobile SoC GPUs have doubled in performance almost 9 times in 7 years. In many ways, screen resolution has been waiting around for better GPUs; why take the pressure off now that we can finally drive these displays?

lilmoe - Tuesday, August 5, 2014

Because we've reached a point where more isn't necessarily better for particular components, and improvements need to be made in others. Current mobile GPUs don't even play 1080p games at 60fps with decent efficiency, and only a handful of people can tell the difference in text or even video between 1080p and 1440p on a 5.x" screen. Why the heck would you improve certain components when you can barely tell the difference? Would you rather have a 1440p smartphone that runs OK or a 1080p smartphone that runs exceptionally well? 1080p on a 5" screen already looks superb, mind you. We're not in dire need of a higher resolution.

When hardware can effortlessly and efficiently run EVERYTHING at 1080p and still has change to spare, then think about upgrading; otherwise, I believe we're still not at that point. There's still lots of room for improvement for 1080p on a small mobile screen.

"In what way does retarding the development of one particular component help the industry as a whole move forward?"

I believe "retarding" is an excessive way to put it. You speak as if current 1080p screens are absolutely perfect. They're not. There's lots of room for improvement in various aspects.

repoman27 - Wednesday, August 6, 2014

We're at 600 ppi right now, after stagnating at 90-150 ppi for over a decade. If we can double twice more, we'll be at 2400 ppi and will have satisfied the Nyquist criterion for human visual acuity. At that point you can draw at any arbitrary resolution you like and scale it with no visible aliasing. If you want to game, great. You can set a minimum frame rate and the game engine will just set the resolution given the capabilities of the SoC. Text and vector art can be composited using another layer at the full screen resolution and will be perfect. All we need is higher resolution screens and more flexible scalers and we can finally achieve resolution independence, which would be an epic achievement.

When the standard sampling rates for digital audio were chosen, they didn't pick 44.1 kHz by accident; they did so because the limit of human hearing was right around 22 kHz. They didn't arbitrarily go with a lower frequency because most people over 30 have an attenuated hearing range. If we want screens where the subpixel arrangement is undetectable, and can accurately reproduce an image with zero aliasing even for young children and individuals with exceptional vision, then we should make an effort to get to at least 2150 ppi. If screens can be produced at such high resolutions affordably and with power requirements comparable to any of the other available displays, there is no reason not to use them in shipping products.

It is a fallacy to state that the current resolution race is adversely affecting any other aspect of mobile device development. The display in the GS5 LTE-A is a perfect example: in addition to being the highest resolution display ever shipped in an Android device, it is also the most accurate, and there was no net adverse effect on battery life. The only metric in which we see this screen lag ever so slightly is max brightness, but it still manages to beat the screen in the regular GS5.

lilmoe - Wednesday, August 6, 2014

You're missing my point entirely. SoCs WILL get faster and more efficient even without increasing screen resolution. Your point is that higher resolutions don't affect performance, which is completely inaccurate. Your example about audio isn't very relevant: different sampling rates have nowhere near the impact on performance that different resolutions do.

You're comparing the GS5 with the GS5 LTE-A. Let me remind you that these are completely different phones with different internals. They just happen to have around the same battery life because the latter is more efficient at driving more pixels, which balances things out. If you want to check whether the extra resolution does in fact affect performance and battery life, then you'll have to examine the same device with the same internals against different-resolution screens.

You have come to the conclusion that the current performance and battery life of today's smartphones are adequate. They're not. A GS5 LTE-A with a 1080p panel would surely run smoother, cooler, and longer on battery than the current one with 1440p. Even if the difference isn't very significant, it's still a difference that the average consumer appreciates. With all due respect, I believe your priorities should be reevaluated. Those who care about 2000 ppi and no visible aliasing are an absolute minority, especially on a small ~5" screen.

440 ppi is by no means the same as the 90-150 ppi we've been "stuck" with for decades. It surely is adequate for today's (and tomorrow's) applications. What we "need" now are faster, cooler-running devices with 3+ days of battery life and highly optimized software. That's what the industry should be focusing on.

hung2900 - Tuesday, August 5, 2014

For an AMOLED screen with more pixels, each pixel can run at a lower luminance to achieve the same brightness, and at a given brightness the total luminance is the same. That's why we see virtually no difference in power consumption. However, there are two basic improvements:

- Lower luminance means better durability (which is important for AMOLED displays)

- Theoretical max brightness is higher, meaning that in the next generation, when other power consumption advances are applied, we could see a major bump in brightness (as from the S4 to the S5)

NerdMan - Thursday, August 7, 2014

Why does RGB arrangement matter? Other than for the OCD?

Pentile is not noticeable at this resolution. That's why it's 600 DPI.

Pentile is necessary, because blue OLEDs get half the lifespan of green and red OLEDs. So they make them twice as big.

soccerballtux - Tuesday, August 5, 2014

I love the placemats from Target