IBM, NVIDIA and Wistron Develop New OpenPOWER HPC Server with POWER8 CPUs

by Anton Shilov on April 6, 2016 12:01 PM EST

IBM, NVIDIA and Wistron have introduced their second-generation server for high-performance computing (HPC) applications at the OpenPOWER Summit. The new machine is designed for IBM’s latest POWER8 microprocessors, NVIDIA’s upcoming Tesla P100 compute accelerators (featuring the company’s Pascal architecture) and the company’s NVLink interconnection technology. The new HPC platform will require software makers to port their apps to the new architectures, which is why IBM and NVIDIA plan to help with that.

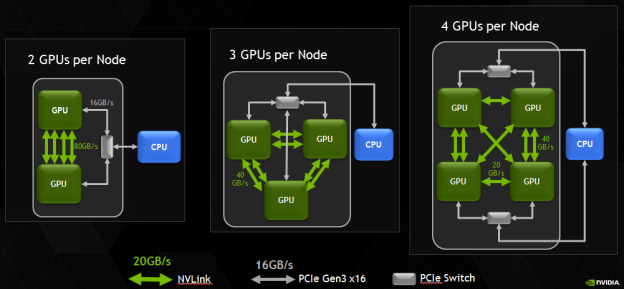

The new HPC platform developed by IBM, NVIDIA and Wistron (which is one of the major contract makers of servers) is based on several IBM POWER8 processors and several NVIDIA Tesla P100 accelerators. At present, the three companies do not reveal a lot of details about their new HPC platform, but it is safe to assume that it has two IBM POWER8 CPUs and four NVIDIA Tesla P100 accelerators. Assuming that every GP100 chip has four 20 GB/s NVLink interconnections, four GPUs is the maximum number of GPUs per CPU, which makes sense from bandwidth point of view. It is noteworthy that NVIDIA itself managed to install eight Tesla P100 into a 2P server (see the example of the NVIDIA DGX-1).

Correction 4/7/2016: Based on the images released by the OpenPOWER Foundation, the prototype server actually includes four, not eight NVIDIA Tesla P100 cards, as the original story suggested.

IBM’s POWER8 CPUs have 12 cores, each of which can handle eight hardware threads at the same time thanks to 16 execution pipelines. The 12-core POWER8 CPU can run at fairly high clock-rates of 3 – 3.5 GHz and integrate a total of 6 MB of L2 cache (512 KB per core) as well as 96 MB of L3 cache. Each POWER8 processor supports up to 1 TB of DDR3 or DDR4 memory with up to 230 GB/s sustained bandwidth (by comparison, Intel’s Xeon E5 v4 chips “only” support up to 76.8 GB/s of bandwidth with DDR4-2400). Since the POWER8 was designed both for high-end servers and supercomputers in mind, it also integrates a massive amount of PCIe controllers as well as multiple NVIDIA’s NVLinks to connect to special-purpose accelerators as well as the forthcoming Tesla compute processors.

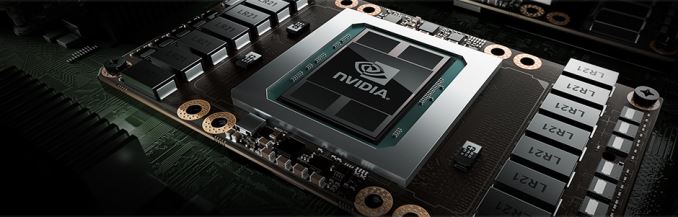

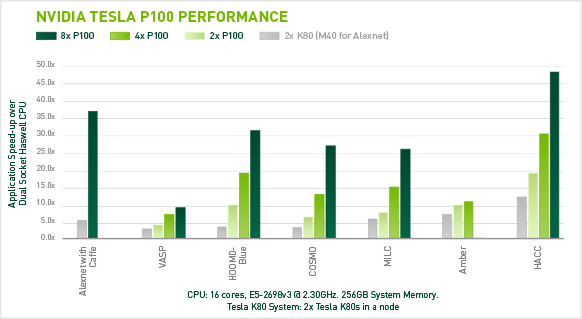

Each NVIDIA Tesla P100 compute accelerator features 3584 stream processors, 4 MB L2 cache and 16 GB of HBM2 memory connected to the GPU using 4096-bit bus. Single-precision performance of the Tesla P100 is around 10.6 TFLOPS, whereas its double precision is approximately 5.3 TFLOPS. A HPC node with eight such accelerators will have a peak 32-bit compute performance of 84.8 TFLOPS, whereas its 64-bit compute capability will be 42.4 TFLOPS. The prototype developed by IBM, NVIDIA and Wistron integrates four Tesla P100 modules, hence, its SP performance is 42.4 TFLOPS, whereas its DP performance is approximately 21.2 TFLOPS. Just for comparison: NEC’s Earth Simulator supercomputer, which was the world’s most powerful system from June 2002 to June 2004, had a performance of 35.86 TFLOPS running the Linpack benchmark. The Earth Simulator consumed 3200 kW of POWER, it consisted of 640 nodes with eight vector processors and 16 GB of memory at each node, for a total of 5120 CPUs and 10 TB of RAM. Thanks to Tesla P100, it is now possible to get performance of the Earth Simulator from just one 2U box (or two 2U boxes if the prototype server by Wistron is used).

IBM, NVIDIA and Wistron expect their early second-generation HPC platforms featuring IBM’s POWER8 processors to become available in Q4 2016. This does not mean that the machines will be deployed widely from the start. At present, the majority of HPC systems are based on Intel’s or AMD’s x86 processors. Developers of high-performance compute apps will have to learn how to better utilize IBM’s POWER8 CPUs, NVIDIA Pascal GPUs, the additional bandwidth provided by the NVLink technology as well as the Page Migration Engine tech (unified memory) developed by IBM and NVIDIA. The two companies intend to create a network of labs to help developers and ISVs port applications on the new HPC platforms. These labs will be very important not only to IBM and NVIDIA, but also to the future of high-performance systems in general. Heterogeneous supercomputers can offer very high performance, but in order to use that, new methods of programming are needed.

The development of the second-gen IBM POWER8-based HPC server is an important step towards building the Summit and the Sierra supercomputers for Oak Ridge National Laboratory and Lawrence Livermore National Laboratory. Both the Summit and the Sierra will be based on the POWER9 processors formally introduced last year as well as NVIDIA’s upcoming code-named Volta processors. The systems will also rely on NVIDIA’s second-generation NVLink interconnect.

Image Sources: NVIDIA, Micron.

Source: OpenPOWER Foundation

50 Comments

View All Comments

close - Thursday, April 7, 2016 - link

Your argument is that IBM must lie about POWER8 but Oracle wouldn't lie about Sparc? Is this because Oracle is known as an unbiased and independent source of information when it comes to Sparc?Brutalizer - Thursday, April 7, 2016 - link

No, that is not my argument. My argument is: check the benchmarks and convince yourself that four POWER8 cpus in two sockets, reaches 320GB/sec, just the same as TWO sparc m7 cpus. So the SPARC M7 bandwidth is twice as fast.And IBM tries to trick people believing that two sockets in the S822L server, corresponds to two POWER8 cpus, but actually, it has four POWER8 cpus. That is very ugly, because IBM tries to trick you to believe that the POWER8 is twice as good as it is. Read page two here, and see my comment on "320GB/sec for two socket POWER8". Very very ugly FUD from IBMers indeed.

Kevin G - Thursday, April 7, 2016 - link

There are two different POWER8 dies, one with 6 cores and another with 12 cores. The 6 core die has four memory channels where as the 12 core die has eight memory channels. Aggregate bandwidth is the same if you have dual chip model or a single chip module.IBM hasn't done so but it is feasible they could release the single die, 12 core part in the lower end S812L/S814/S822L/S824 systems.

close - Friday, April 8, 2016 - link

Who would have thought that a 32 core SPARC CPU will come out better than a 10 core POWER8 CPU, right? And mind you, a 32 core CPU comes out 2 times faster than a 10 core CPU.Good comparison boy.

JohnMirolha - Wednesday, April 6, 2016 - link

POWER8 starting rolling out the support for 2TB of Memory per socket, starting with High-End systems.Kevin G - Thursday, April 7, 2016 - link

The reason why sustained is close to theoretical peak is due to the Centuar memory buffer chip. The actual bandwidth from the DIMMs to the Centuar chip is actually 410 GB/s. The link between the POWER8 and eight Centaur chips is 230 GB. In addition, IBM places 16 MB L4 cache on the Centaur chips so there is a quick buffer there related to each memory channel individually. (The Centaur chips cannot cache any data that resides outside of its own memory channel.) Between the 16 MB of cache per memory channel and the higher raw DRAM bandwidth to the buffer than to the CPU, it isn't surprising that the two figures are relatively close.Oracle's link you cite doesn't list the full S824 hardware configuration. For memory bandwidth tests, the S824 can only obtain maximum memory bandwidth when all of its memory slots are occupied. This is due to the S824 using proprietary DIMMs that include the Centaur memory buffer. Thus it is easily possible to handicap overall memory bandwidth performance by using fewer but higher capacity DIMMs. In fact, Oracle could have conifgured the S824 with only 4 DIMMs to get the 512 GB capacity noted ( https://blogs.oracle.com/BestPerf/resource/stream/... ) and thus hinder performance.

jesperfrimann - Friday, April 8, 2016 - link

Well the Oracle benchmark is a joke. It's a competitor submission. There are quite a few Things that makes you raise an eyebrow. The tester have done quite a few different from what he/she would normally have done for an Oracle's own product submission.First the Firmware level of the machine is backlevel.. to put it mildly the level uses is 810_087, current is SV840_087, which makes it 13 versions behind current firmware level. Brrrr... On Oracle's own stream submissions, they normally MAX the memory.. which they haven't done here, they don't even put Down the memory configuration, they normally do that for their own submissions.

And last.. he/she configures the machine with 96 logical cores, but only uses 24. *COUGH* on Oracle's own submission he configures and runs threads over 1024 for the T5-8.

So.. well.. even though they try to stack the Cards, the S824 still puts up quite impressive numbers for such an old machine.

// Jesper

Brutalizer - Friday, April 8, 2016 - link

And here we go again. In think in ALL benchmarks I showed you, EVERY SINGLE ONE OF THEM, you have rejected them all because of this or that. And you did this years ago, and it is a bit worrying you have not changed a bit still today. I mean, dont you realize that as you expect everyone to accept your IBM benchmarks, but reject Oracle benchmarks - you seem like a bit biased fan boy? Some would say a VERY BIASED fanboy.Myself support SPARC, yes, but I am guided by benchmarks. I support the best technique. And when POWER7 came and was fastest, I congratulated the IBM benchmarks and agreed POWER7 was fastest. Today SPARC M7 is superior, and the best technique. POWER8 is lagging far behind, even slower than x86. Just look at the benchmarks.

Anyway, on that site with benchmarks I gave you, there are 25ish benchmarks where SPARC M7 is 2-3x faster than POWER8. I understand you reject all of them because of this or that. As you did earlier when we discussed SPARC T2+ vs POWER6, years back. I think it is fascinating that your conclusion is that in every single 25ish benchmark, POWER8 is faster than SPARC M7 because of this or that. I have never understood your logic; hard numbers say that SPARC M7 cpus are 5.5x faster than POWER8 cpus in OLTP workloads:

https://blogs.oracle.com/BestPerf/entry/20160317_s...

but still you vehemently claim that POWER8 is faster, because of this or that. Amazing.

I remember when you claimed that, when I showed you benchmarks where x86 beat POWER6 in linpack, you claimed that POWER6 was the faster cpu because one POWER6 core was faster. Amazing. How do you reason to a man displaying flawed logic like that? x86 scores higher in Linpack, but still POWER6 is faster in Linpack - because one POWER6 core is faster! Amazing.

And when I point out that because one single core is faster, does not make the entire cpu faster - you tried to dribbled away that too with talking about this or that. Bios patch level, RAM timing, etc etc etc. Your conclusion was; if you want the fastest Linpack performance, you get a POWER6, even though a Xeon scored higher. Amazing.

And now on this STREAM benchmark where SPARC M7 is 2x faster than POWER8, your conclusion is that POWER8 is faster than SPARC M7 because of... Firmware level? And Oracle didnt max the memory? That is why POWER8 somehow magically increased from 80 GB/sec up to and beyond 160 GB/sec. Because of Firmware level and no max of RAM. Great. (Question: do you really believe this, yourself? Doesnt it sound a bit... hollow and unconvincing? No?)

The Power8 STREAM numbers are from IBM themselves, as KevinG supplied in the link.

Lets look at the rest of the 25ish benchmarks where SPARC M7 is 2-3x faster, up to 11x faster than POWER8. How will you explain that POWER8 is faster than SPARC M7 in each of them? Different RAM? RAM latency? Power supply? Keyboard and mouse?

jesperfrimann - Friday, April 8, 2016 - link

What you don't understand is that in my professional life I don't really care if a server is Blue, Brown or Purple. I am not a fanboi. What I do care about is what gives my Company the biggest ROI.That is my job. And actually just a few months ago I finished a 'paper' in which I came up with the strategic solution stack for my company's Oracle Platform.

And it wasn't Power, it wasn't Windows, it wasn't AIX....I would have liked it to be M7 for technical reasons, but my 'Cold hard unbiased' analysis showed that the best platform for my Company was x86 box mover XXX using Intel Xeon's Ex-xxxx v4, with OVM ontop and Oracle Linux. The reason the M7 lost was mostly due to inhouse skills, cause It scored better than x86 in my analysis.

Currently I am looking on what platform to choose for an IBM solution stack, and to be honestly nobody comes even close to Linux on POWER but perhaps for AIX on POWER, but here my Companys skill base is more suited for Linux. So I am pretty convinced that the paper I am writing will be pointing towards Linux on POWER (with POWERVM not KVM) as the platform of choice for IBM software products.

I cannot limit myself to 'car magazine' IT as you do, or Vendor FUD.

Again the Stream submission stated :

Date result produced : Thu Oct 22 2015 Questions? : Gnanakumar.Rajaram@Oracle.com

That is hardly an IBM submission.

I would be just as critical towards an IBM Stream submission on a M7 based machine where you only Ran 1 thread per core. It would most likely have horrific numbers.

And .. well you are kind of beyond normal reason. I don't dislike the M7, I think it is a wonderful chip, unfortunately the business case for using it compared to Xeon's with OVM and Oracle Linux just isn't there for us.

// Jesper

Meteor2 - Friday, April 8, 2016 - link

We came to the same conclusion.