The AMD 2nd Gen Ryzen Deep Dive: The 2700X, 2700, 2600X, and 2600 Tested

by Ian Cutress on April 19, 2018 9:00 AM EST

Talking 12nm and Zen+

One of the highlights of the Ryzen 2000-series launch is that these processors use GlobalFoundries’ 12LP manufacturing process, compared to the 14LPP process used for the first generation of Ryzen processors. Both AMD and GlobalFoundries have discussed the differences between the processes; however, it is worth understanding that each company has different goals: AMD only needs to promote what helps its products, whereas GlobalFoundries is a semiconductor foundry with many clients and might promote ideal-scenario numbers. Earlier this year we were invited to GlobalFoundries Fab 8 in upstate New York to visit the clean room, and had a chance to interview Dr. Gary Patton, the CTO.

The Future of Silicon: An Exclusive Interview with Dr. Gary Patton, CTO of GlobalFoundries

In that interview, several interesting items came to light. First, that the CTO doesn’t necessarily have to care much about what certain processes are called: their customers know the performance of a given process regardless of the advertised ‘nm’ number, based on the development tools given to them. Second, that 12LP is a series of minor tweaks to 14LPP, relating to performance bumps and improvements that come from a partial optical shrink and a slight change in manufacturing rules in the middle-of-line and back-end of the manufacturing process. In the past this might not have been so newsworthy; however, GF’s customers want to take advantage of the improved process.

Overall, GlobalFoundries has stated that its 12LP process offers a 10% performance improvement and a 15% circuit density improvement over 14LPP.

This has been interpreted in many ways, such as an extra 10% frequency at the same power, or lower power for the same frequency, and an opportunity to build smaller chips.

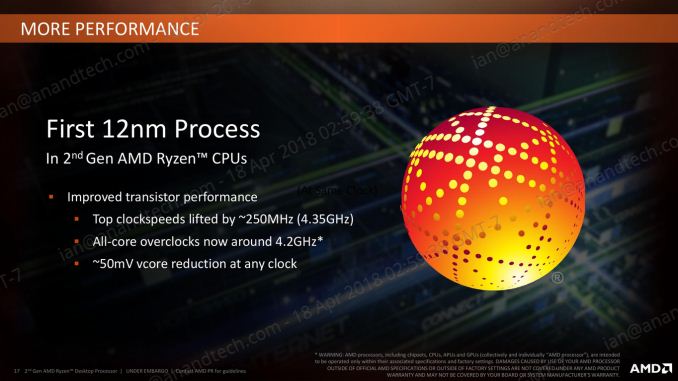

As part of today’s launch, AMD has clarified what the move to 12LP has meant for the Ryzen 2000-series:

- Top Clock Speeds lifted by ~250 MHz (~6%)

- All-core overclocks around 4.2 GHz

- ~50 mV core voltage reduction

AMD goes on to explain that at the same frequency, its new Ryzen 2000-series processors draw around 11% less power than the Ryzen 1000-series. The claims also state that this translates to +16% performance at the same power. These claims are a little muddled, as AMD has other new technologies in the 2000-series which will affect performance as well.
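The two claims describe different operating points, which a quick efficiency calculation makes visible. The sketch below is our own arithmetic, not AMD's: it assumes performance is unchanged at the same frequency (so it ignores the cache latency and boost changes), and the helper name `perf_per_watt_gain` is hypothetical.

```python
def perf_per_watt_gain(perf_ratio, power_ratio):
    """Relative gain in performance per watt versus the previous generation.

    perf_ratio and power_ratio are new/old ratios (1.0 means unchanged).
    """
    return perf_ratio / power_ratio - 1.0


# Claim 1: same frequency, ~11% less power.
# ASSUMPTION: same frequency means same performance (IPC changes ignored).
iso_frequency = perf_per_watt_gain(perf_ratio=1.0, power_ratio=0.89)

# Claim 2: same power, +16% performance.
iso_power = perf_per_watt_gain(perf_ratio=1.16, power_ratio=1.0)

print(f"Iso-frequency efficiency gain: {iso_frequency:.1%}")
print(f"Iso-power efficiency gain:     {iso_power:.1%}")
```

Under these assumptions the iso-frequency claim implies a smaller efficiency gain than the iso-power claim, which is consistent with the other 2000-series changes contributing to the +16% figure.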

One interesting element is that although GF claims that there is a 15% density improvement, AMD is stating that these processors have the same die size and transistor count as the previous generation. Ultimately this seems in opposition to common sense – surely AMD would want to use smaller dies to get more chips per wafer?

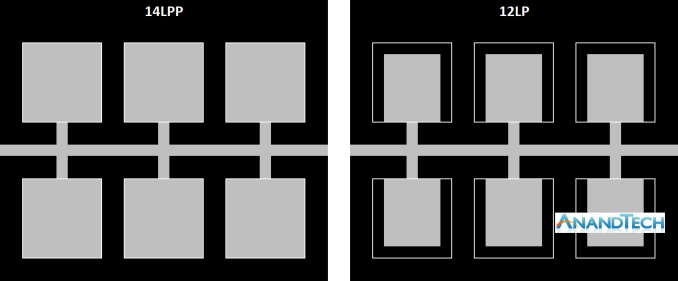

Ultimately, the new processors are almost carbon copies of the old ones, both in terms of design and microarchitecture. AMD is calling the core design ‘Zen+’ to differentiate it from the previous-generation ‘Zen’ design, and it mostly comes down to how the microarchitecture features are laid out on the silicon. Based on our discussions with AMD, the best way to explain it is that the key features of the design have not moved; they just take up less area, leaving more dark silicon between them and other features.

The diagram above is a very crude representation of features attached to a data path. On the left is the 14LPP design, and each of the six features has a specific size and connects to the bus. Between each of the features is the dark silicon: unused silicon that is either seen as useless, or can be used as a thermal buffer between high-energy parts. On the right is the representation of the 12LP design, where each of the features has been reduced in size, leaving more dark silicon between them (the white boxes show the original size of the feature). In this context, the number of transistors is the same, and the die size is the same. But if anything in the design was thermally limited by the close proximity of two features, there is now more distance between them such that they should interfere with each other less.

For reference, AMD lists the die size of these new parts as 213 mm², containing 4.8 billion transistors, identical to the first-generation silicon design. AMD confirmed that they are using 9T transistor libraries, also the same as the previous generation, although GlobalFoundries offers a 7.5T design as well.
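To give a sense of what AMD is leaving on the table by keeping the die at 213 mm², here is a minimal sketch using a common gross-dies-per-wafer approximation. Several things here are assumptions of ours rather than figures from AMD or GlobalFoundries: a 300 mm wafer, the 15% density claim applying uniformly to the entire die (I/O and analog blocks typically do not shrink that well), and no allowance for yield, scribe lines, or edge exclusion.

```python
import math


def gross_dies_per_wafer(die_area_mm2, wafer_diameter_mm=300.0):
    """Approximate candidate dies per wafer, before any yield loss.

    Uses the common estimate: wafer area / die area, minus a correction
    for partial dies lost around the wafer edge.
    ASSUMPTION: 300 mm wafer; scribe lines and edge exclusion are ignored.
    """
    radius = wafer_diameter_mm / 2.0
    wafer_area = math.pi * radius ** 2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2.0 * die_area_mm2)
    return int(wafer_area / die_area_mm2 - edge_loss)


current_area = 213.0  # mm^2, the die size AMD lists for both generations

# HYPOTHETICAL: a die shrunk by the full 15% density improvement.
shrunk_area = current_area / 1.15

current_dies = gross_dies_per_wafer(current_area)
shrunk_dies = gross_dies_per_wafer(shrunk_area)

print(f"{current_area:.0f} mm^2 die: ~{current_dies} candidate dies per wafer")
print(f"{shrunk_area:.0f} mm^2 die: ~{shrunk_dies} candidate dies per wafer")
print(f"Extra candidates from a full shrink: {shrunk_dies / current_dies - 1:.0%}")
```

This is an upper bound on the benefit of a shrink, not a prediction: a real redesign would also carry new mask and validation costs, which is part of why reusing the existing layout can make sense.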

So is Zen+ a New Microarchitecture, or Process Node Change?

Ultimately, most of the Zen+ physical design layout is not new. Aside from the manufacturing process node change and likely minor adjustments, the rest of the adjustments are in firmware and support:

- Cache latency adjustments leading to +3% IPC

- Increased DRAM Frequency Support to DDR4-2933

- Better voltage/frequency curves, leading to +10% performance overall

- Better Boost Performance with Precision Boost 2

- Better Thermal Response with XFR2
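As a rough sanity check on how these items stack, the sketch below combines the +3% IPC figure with the ~6% top clock speed uplift quoted earlier, using the first-order model that single-threaded performance scales with IPC times frequency. This is our own illustration, not AMD's derivation of its +10% figure, and the function name `combined_uplift` is hypothetical.

```python
def combined_uplift(ipc_gain, clock_gain):
    """First-order estimate: performance ~ IPC x frequency.

    Gains are fractions (0.03 means +3%). Memory speed, boost behavior,
    and thermal limits are ignored in this simple model.
    """
    return (1.0 + ipc_gain) * (1.0 + clock_gain) - 1.0


# +3% IPC from cache latency changes, ~6% from higher top clocks.
uplift = combined_uplift(ipc_gain=0.03, clock_gain=0.06)

print(f"Estimated single-threaded uplift: {uplift:.1%}")
```

The gains multiply rather than add, so the combined estimate lands slightly above the simple sum of the two percentages.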

545 Comments

bsp2020 - Thursday, April 19, 2018 - link

Was AMD's recently announced Spectre mitigation used in the testing? I'm sorry if it was mentioned in the article. Too long and still in the process of reading.

I'm a big fan of AMD but want to make sure the comparison is apples to apples. BTW, does anyone have link to performance impact analysis of AMD's Spectre mitigation?

fallaha56 - Thursday, April 19, 2018 - link

Yep, X470 is microcode patched

This article as it stands is Intel Fanboi stuff

fallaha56 - Thursday, April 19, 2018 - link

As in the Toms article

SaturnusDK - Thursday, April 19, 2018 - link

Maybe he didn't notice that the tests are at stock speeds?

DCide - Friday, April 20, 2018 - link

I can't find any other site using a BIOS as recent as the 0508 version you used (on the ASUS Crosshair VII Hero). Most sites are using older versions. These days, BIOS updates surrounding processor launches make significant performance differences. We've seen this with every Intel and AMD CPU launch since the original Ryzen.

Shaheen Misra - Sunday, April 22, 2018 - link

Hi , im looking to gain some insight into your testing methods. Could you please explain why you test at such high graphics settings? Im sure you have previously stated the reasons but i am not familiar with them. My understanding has always been that this creates a graphics bottleneck?

Targon - Monday, April 23, 2018 - link

When you consider that people want to see benchmark results how THEY would play the games or do work, it makes sense to focus on that sort of thing. Who plays at a 720p resolution? Yes, it may show CPU performance, or eliminate the GPU being the limiting factor, but if you have a Geforce 1080 GTX, 1080p, 1440, and then 4k performance is what people will actually game at.

The ability to actually run video cards at or near their ability is also important, which can be a platform issue. If you see every CPU showing the same numbers with the same video card, then yea, it makes sense to go for the lower settings/resolutions, but since there ARE differences between the processors, running these tests the way they are makes more sense from a "these are similar to what people will see in the real world" perspective.

FlashYoshi - Thursday, April 19, 2018 - link

Intel CPUs were tested with Meltdown/Spectre patches, that's probably the discrepancy you're seeing.

MuhOo - Thursday, April 19, 2018 - link

Computerbase and pcgameshardware also used the patched... every other site has completely different results from anandtech

sor - Thursday, April 19, 2018 - link

Fwiw I took five minutes to see what you guys are talking about. To me it looks like Toms is screwed up. If you look at the time graphs it looks to me like it’s the purple line on top most of the time, but the summaries have that CPU in 3rd or 4th place. E.G. https://img.purch.com/r/711x457/aHR0cDovL21lZGlhLm...

At any rate things are generally damn close, and they largely aren’t even benchmarking the same games, so I don’t understand why a few people are complaining.