The Intel Optane Memory H10 Review: QLC and Optane In One SSD

by Billy Tallis on April 22, 2019 11:50 AM EST

AnandTech Storage Bench - The Destroyer

The Destroyer is an extremely long test replicating the access patterns of very IO-intensive desktop usage. A detailed breakdown can be found in this article. Like real-world usage, the drives do get the occasional break that allows for some background garbage collection and flushing caches, but those idle times are limited to 25ms so that it doesn't take all week to run the test. These AnandTech Storage Bench (ATSB) tests do not involve running the actual applications that generated the workloads, so the scores are relatively insensitive to changes in CPU performance and RAM from a new testbed, but the jump to a newer version of Windows and newer storage drivers can have an impact.
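The idle-time cap is easier to picture with a small example. Below is a minimal Python sketch of how a trace replayer might compress idle gaps. It assumes the trace is just a list of I/O submission timestamps in seconds and treats every gap between consecutive submissions as idle time, which is a simplification: real idle time runs from one operation's completion to the next submission. The function name `compress_idle` is hypothetical and does not describe the actual ATSB tooling.

```python
def compress_idle(timestamps_s, max_idle_s=0.025):
    """Return replay timestamps in which every gap between consecutive
    submissions longer than max_idle_s is clipped to max_idle_s.

    Simplifying assumption: the gap between two submission timestamps is
    treated entirely as idle time. The 25 ms default mirrors the cap
    described in the text.
    """
    if not timestamps_s:
        return []
    replay = [0.0]  # replay starts at t = 0 regardless of the original offset
    for prev, cur in zip(timestamps_s, timestamps_s[1:]):
        gap = cur - prev
        # Short gaps are preserved exactly; long pauses shrink to the cap.
        replay.append(replay[-1] + min(gap, max_idle_s))
    return replay
```

With the default cap, a five-second pause in the original trace shrinks to 25 ms, while gaps shorter than 25 ms are replayed unchanged, so bursts keep their original pacing.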

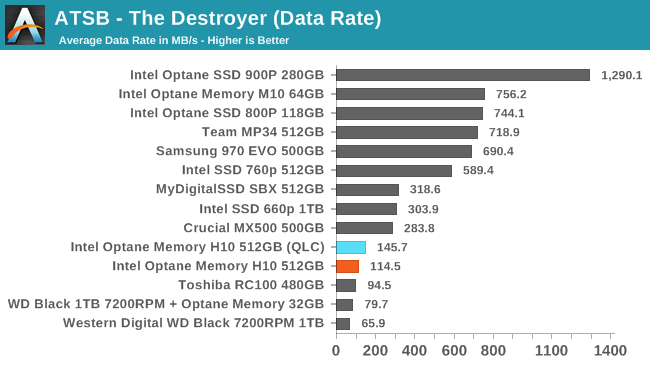

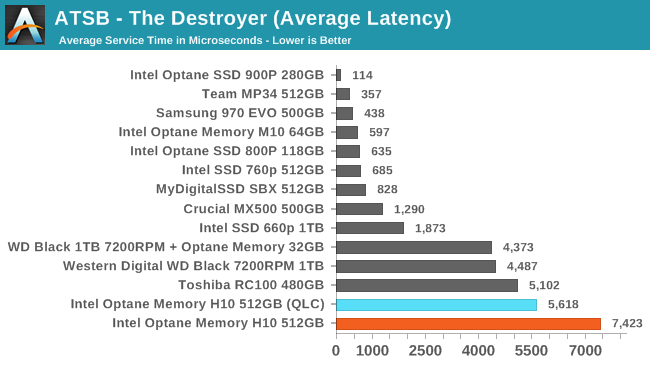

We quantify performance on this test by reporting the drive's average data throughput, the average latency of the I/O operations, and the total energy used by the drive over the course of the test.
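As a rough illustration of how these summary numbers relate to a trace, the Python sketch below computes average throughput, average and 99th-percentile latency, and total energy. The `IoRecord` type, the use of busy time as the throughput denominator, the nearest-rank percentile method, and trapezoidal integration of power samples are all our assumptions for illustration, not a description of AnandTech's actual analysis scripts.

```python
import math
from dataclasses import dataclass


@dataclass
class IoRecord:
    """Hypothetical trace record for one completed I/O operation."""
    size_bytes: int
    latency_s: float  # submission-to-completion time
    is_write: bool


def average_throughput_mb_s(records, busy_time_s):
    """Total bytes moved divided by the time the drive spent busy, in MB/s.
    Using busy time (rather than wall-clock time) is an assumption."""
    total_bytes = sum(r.size_bytes for r in records)
    return total_bytes / busy_time_s / 1e6


def average_latency_s(records):
    """Arithmetic mean of per-operation latency."""
    return sum(r.latency_s for r in records) / len(records)


def percentile_latency_s(records, pct):
    """Nearest-rank percentile: the smallest latency that at least pct%
    of operations do not exceed."""
    lat = sorted(r.latency_s for r in records)
    # pct * len is computed before dividing to avoid float error in the rank.
    rank = max(1, math.ceil(pct * len(lat) / 100))
    return lat[rank - 1]


def energy_j(power_samples):
    """Trapezoidal integration of (time_s, watts) samples into joules."""
    total = 0.0
    for (t0, p0), (t1, p1) in zip(power_samples, power_samples[1:]):
        total += (p0 + p1) / 2 * (t1 - t0)
    return total
```

Separate read and write figures, like the ones reported below, come from passing a filtered list such as `[r for r in records if r.is_write]` to the same functions. A single 99th-percentile value is useful precisely because it ignores the bulk of fast operations and exposes the slow tail that an average can hide.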

The Intel Optane Memory H10 actually performs better overall on The Destroyer with caching disabled and the Optane side of the drive completely inactive. This test doesn't leave much time for background optimization of data placement, and the total amount of data moved is vastly larger than what fits into a 32GB Optane cache. The 512GB of QLC NAND doesn't have any performance to spare for cache thrashing.
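Why a small cache can hurt rather than help is easiest to see with a toy model. The Python sketch below simulates a plain LRU cache and counts both hits and cache fills. We make no claim that Intel's caching software uses plain LRU; this only illustrates thrashing in general. The block counts in the usage note are hypothetical, scaled so the cache-to-capacity ratio matches 32GB of Optane in front of 512GB of QLC.

```python
from collections import OrderedDict


def lru_simulate(accesses, capacity):
    """Simulate a plain LRU cache over a sequence of block IDs.

    Returns (hit_rate, fills). Every miss copies the block into the cache,
    so `fills` approximates the extra write traffic the cache generates.
    """
    cache = OrderedDict()
    hits = 0
    fills = 0
    for block in accesses:
        if block in cache:
            hits += 1
            cache.move_to_end(block)  # mark as most recently used
        else:
            fills += 1
            cache[block] = True
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict least recently used
    return hits / len(accesses), fills
```

A scan over 512 blocks repeated three times through a 32-block cache never hits, yet all 1,536 accesses trigger a cache fill, so the cache adds write traffic without any read benefit. A 16-block hot set repeated 100 times, by contrast, misses only on the first pass and hits 99% of the time.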

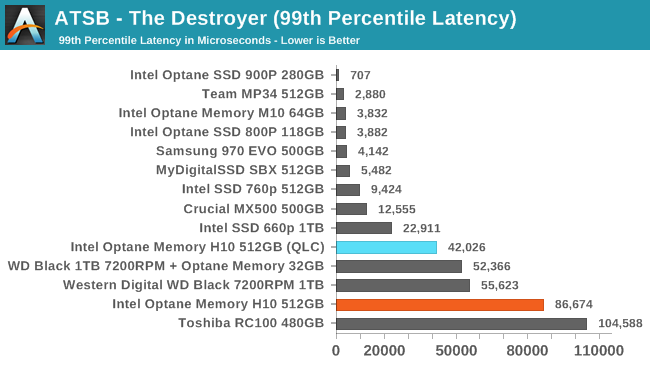

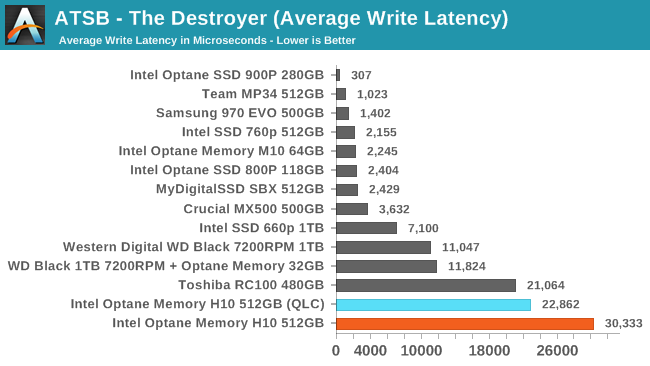

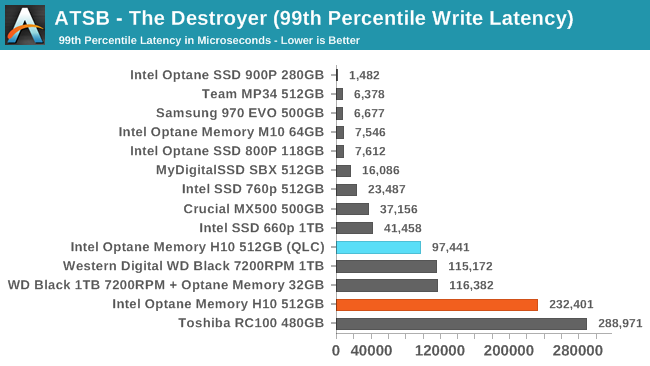

The QLC side of the Optane Memory H10 has poor average and 99th percentile latency scores on its own, and throwing in an undersized cache only makes it worse. Even the 7200RPM hard drive scores better.

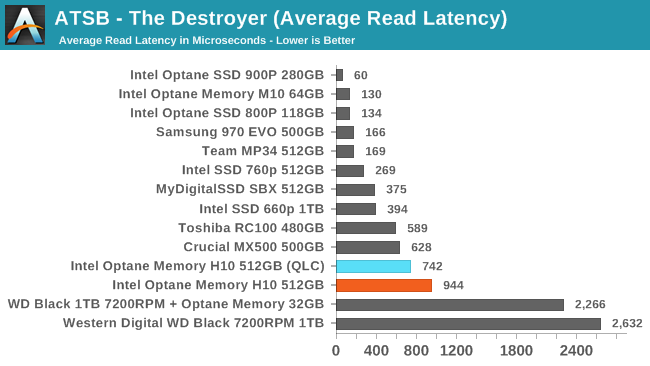

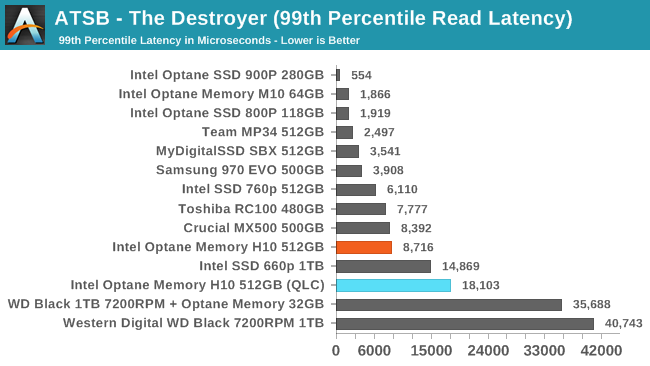

The average read latencies for the Optane Memory H10 are worse than all the TLC-based drives, but much better than the hard drive with or without an Optane cache in front of it. For writes, the H10's QLC drags it into last place once the SLC cache runs out.

The Optane cache does help the H10's 99th percentile read latency, bringing it up to par with the Crucial MX500 SATA SSD and well ahead of the larger QLC-only 1TB 660p. The 99th percentile write latency is horrible, but even with the cache thrashing causing excess writes, the H10 isn't quite as badly off as the DRAMless Toshiba RC100.

60 Comments


Valantar - Tuesday, April 23, 2019

"Why hamper it with a slower bus?": cost. This is a low-end product, not a high-end one. The 970 EVO can at best be called "midrange" (though it keeps up with the high end for performance in a lot of cases). Intel doesn't yet have a monolithic controller that can work with both NAND and Optane, so this is (as the review clearly states) two devices on one PCB. The use case is making a cheap but fast OEM drive, where caching to the Optane part _can_ result in noticeable performance increases for everyday consumer workloads, but is unlikely to matter in any kind of stress test. The problem is that adding Optane drives up prices, meaning that this doesn't just compete against QLC drives (which it would beat in terms of user experience) but also against TLC drives, which would likely be faster in all but the most cache-friendly, bursty workloads.

I see this kind of concept as the "killer app" for Optane outside of datacenters and high-end workstations, but this implementation is nonsense due to the lack of a suitable controller. If the drive had a single controller with an x4 interface, replaced the DRAM buffer with a sizeable Optane cache, and came in QLC-like capacities, it would be _amazing_. Great capacity, great low-QD speeds (for anything cached), great price. As it stands, it's ... meh.

cb88 - Friday, May 17, 2019

Therein lies the BS... Optane cannot compete as a low-end product because it is too expensive, so they should have settled for being the best premium product with 4x PCIe... probably even maxing out PCIe 4.0 easily once it launches.

CheapSushi - Wednesday, April 24, 2019

I think you're mixing up why it would be faster. The lanes are the easier part. It's inherently faster. But you can't magically make x2 PCIe lanes push more bandwidth than x4 PCIe lanes on the same standard (3.0 for example).

twotwotwo - Monday, April 22, 2019

Prices not announced, so they can still make it cheaper.

Seems like a tricky situation unless it's priced way below anything that performs similarly though. Faster options on one side and really cheap drives that are plenty for mainstream use on the other.

CaedenV - Monday, April 22, 2019

lol cheaper? All of the parts of a traditional SSD, *plus* all of the added R&D, parts, and software for the Optane half of the drive?

I will be impressed if this is only 2x the price of a Sammy... and still slower.

DanNeely - Monday, April 22, 2019

Ultimately, to scale this I think Intel is going to have to add an on-card PCIe switch. With the company that currently dominates that market setting prices to fleece enterprise customers, I suspect that means they'll need to design something in house. PCIe 4 will help some, but normal drives will get faster too.

kpb321 - Monday, April 22, 2019

I don't think that would end up working out well. As the article mentions, PCI-E switches tend to be power hungry, which wouldn't work well and would add yet another part to the drive and push the BOM up even higher. For this to work you'd need to deliver TLC-level performance or better but at a lower cost. Ultimately the only way I can see that working would be moving to a single integrated controller. From a cost perspective, eliminating the DRAM buffer by using a combination of the Optane memory and HBM should probably work. This would probably push it into a largely or completely hardware-managed solution and would improve compatibility and eliminate the issues with the PCI-E bifurcation and bottlenecks.

ksec - Monday, April 22, 2019

Yes, I think we will need a single controller to see its true potential and whether it has a market fit.

Cause right now I am not seeing any real benefits or advantage of using this compared to a decent M.2 SSD.

Kevin G - Monday, April 22, 2019

What Intel needs to do for this to really take off is to have a combo NAND + Optane controller capable of handling both types natively. This would eliminate the need for a PCIe switch and free up board space on the small M.2 sticks. A win-win scenario if Intel puts forward the development investment.

e1jones - Monday, April 22, 2019

A solution for something in search of a problem. And, typical Intel, clearly incompatible with a lot of modern systems, much less older systems. Why do they keep trying to limit the usability of Optane!?

In a world where each half was actually accessible, it might be useful for ZFS/NAS apps, where the Optane could be the log or cache and the QLC could be a WORM storage tier.