Seeing Is Believing: Intel Teases DG1 Discrete Xe GPU With Laptop & Desktop Dev Cards At CES 2020

by Ryan Smith on January 9, 2020 12:00 PM EST

While CES 2020 technically doesn’t wrap up for another couple of days, I’ve already been asked a good dozen or so times what the neatest or most surprising product at the show has been for me. And this year, it looks like that honor is going to Intel. Much to my own surprise, the company has their first Xe discrete client GPU, DG1, at the show. And while the company is being incredibly coy on any actual details for the hardware, it is nonetheless up and running, in both laptop and desktop form factors.

Intel first showed off DG1 as part of its hour-long keynote on Monday, when the company all too briefly showed a live demo of it running a game. More surprising still, the behind-the-stage demo unit wasn’t even a desktop PC (as is almost always the case), but rather a notebook, with Intel making this choice both to underscore the low power consumption of DG1 and to demonstrate just how far along the GPU is since the first silicon came back to the labs in October.

To be sure, the company still has a long way to go between here and when DG1 will actually be ready to ship in retail products; along with the inevitable hardware bugs, Intel has a pretty intensive driver and software stack bring-up to undertake. So DG1’s presence at CES is very much a teaser of things to come rather than a formal hardware announcement, for all the pros and the cons that entails.

At any rate, Intel’s CES DG1 activities aren’t just around their keynote laptop demo. The company also has traditional desktop cards up and running, and they gave the press a chance to see them yesterday afternoon.

Dubbed the “DG1 Software Development Vehicle”, the desktop DG1 card is being produced by Intel in small quantities so that they can sample their first Xe discrete GPU to software vendors well ahead of any retail launch. ISVs regularly get cards in advance so that they can begin development and testing against a new GPU architecture, so Intel doing the same here isn’t too unusual. However, it’s almost unheard of for these early sample cards to be revealed to the public, which goes to show just how different a tack Intel is taking in the launch of its first modern dGPU.

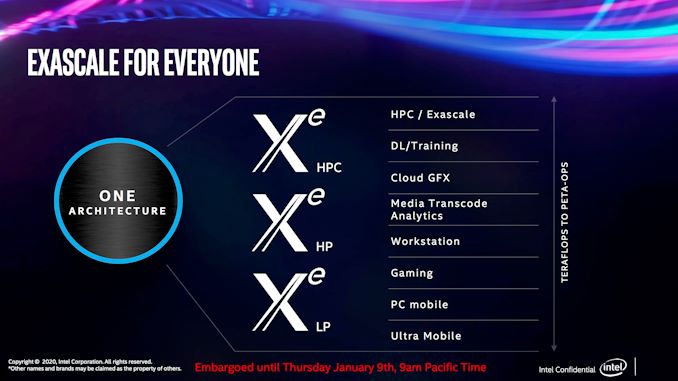

Unfortunately, there’s not much I can tell you about the DG1 GPU or the card itself. The purpose of this unveil is to show that DG1 is here and it works, and little else. So Intel isn’t disclosing anything about the architecture, the chip, power requirements, performance, launch date, etc. All I know for sure on the GPU side of matters is that DG1 is based on what Intel is calling their Xe-LP (low power) microarchitecture, which is the same microarchitecture that is being used for Tiger Lake’s Xe-based iGPU. This is distinct from Xe-HP and Xe-HPC, which according to Intel are separate (but apparently similar) microarchitectures that are more optimized for workstations, servers, and the like.

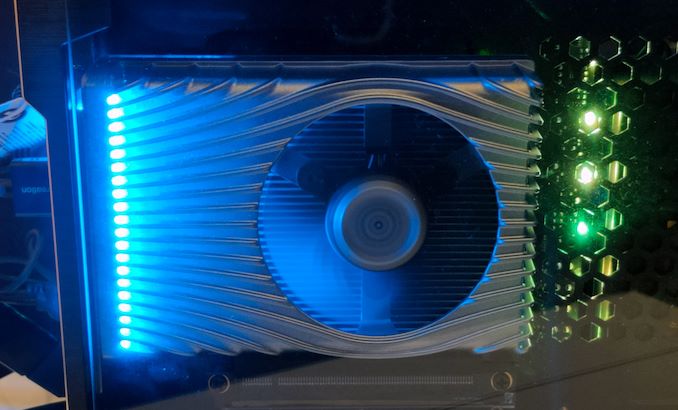

As for the dev card, I can tell you that it is entirely PCIe slot powered – so it’s under 75W – and that it uses an open-air cooler design with a modest heatsink and an axial fan slightly off-center. The card also features 4 DisplayPort outputs, though as this is a development card, this doesn’t mean anything in particular for retail cards. Otherwise, Intel has gone with a rather flashy design for a dev card – since Intel is getting in front of any dev leaks by announcing it to the public now, I suppose there’s no reason to try to conceal the card with an unflattering design; instead, Intel can use it as another marketing opportunity.

Intel has been shipping the DG1 dev cards to ISVs as part of a larger Coffee Lake-S based kit. The kit is unremarkable – or at least, Intel wouldn’t remark on it – but like the DG1 dev card, it’s definitely designed to show off the video card inside, with Intel using a case that mounts it parallel to the motherboard so that the card is readily visible.

It was also this system that Intel used to show off that, yes, the DG1 dev card works as well. In this case it was running Warframe at 1080p, though Intel was not disclosing FPS numbers or what settings were used. Going by the naked eye alone, it’s clear that the card was struggling at times to maintain a smooth framerate, but as this is early hardware on early software, it’s clearly not in a state where Intel is even focusing on performance.

Overall, Intel has been embarking on an extended, trickle-feed media campaign for the Xe GPU family, and this week’s DG1 public showcase is the latest in that Odyssey campaign. So expect to see Intel continuing these efforts over the coming months, as Intel gradually prepares Tiger Lake and DG1 for their respective launches.

83 Comments

Zizo007 - Thursday, January 9, 2020 - link

It's good that Intel is in the GPU business now. Competition will bring down Nvidia's outrageous prices. But Intel GPUs might have many bugs at launch as it's their first dGPU ever.

Hurn - Thursday, January 9, 2020 - link

Not their first dGPU. The I740 was available both on an AGP card and soldered onto Intel motherboards.

In 1998-1999.

Retycint - Thursday, January 9, 2020 - link

Nvidia prices are hardly outrageous, given that Nvidia has actually been improving gen after gen in terms of power/efficiency, unlike its CPU counterpart Intel. Things like RTX may be an expensive gimmick now, but it pushes the envelope for the gaming industry and is only going to be a good thing in the long run.

Spunjji - Friday, January 10, 2020 - link

They increased the price of their high-end GPU by 70% in a generation where performance increased by ~30%. I'd definitely call that outrageous. RTX isn't worth that extra 40% in a first-gen product with so little software support lined up.

FANBOI2000 - Friday, January 10, 2020 - link

And AMD weren't any cheaper. I know that was down to mining, but I think it all adds up to a new normal now. We paid up, so they don't have a great incentive to keep prices low. If NVIDIA have the fastest cards then people will pay. I don't like it, I look at £250 cards and think they should be closer to 150, but even AMD seem in on the game now. They could easily clean up with a 30 drop on some of the cards but chose not to. You'd think they might have, given the success of Ryzen on desktop. But conversely, there Intel has dropped prices, so AMD CPUs don't seem quite the bargains they were. It seems the opposite with graphics, with AMD increasing its prices to come closer to NVIDIA. That suggests to me that if AMD had the cards to really beat NVIDIA, they would be charging high for them just like Nvidia. So the new normal.

sarafino - Thursday, January 9, 2020 - link

Nvidia pricing won't budge unless Intel manages to come out with something that can actually compete in the high-end. It's going to take quite a while just for them to get their graphics cards and drivers performing at that kind of level.

Yojimbo - Friday, January 10, 2020 - link

NVIDIA and AMD both offer a range of cards at a range of prices. I mean, AMD's products currently compete with NVIDIA's all the way up to $450. NVIDIA is better at building the GPUs so they can make more money selling a card at that price. You don't want the same performance card to be sold for the same price with less profit, because then the company will have less opportunity and incentive to invest in future performance gains. The real bad situation for consumers would be if innovation and performance tail off, and then not only will you be paying more money per gain in experience, but you'd be getting less of a gain in experience overall. Buy what you can afford. If prices do actually go up then it sets you back a few months in terms of price/performance ratio, and they only go up to what people are willing to pay. Competition should bring down prices somewhat but there's nothing outrageous about the prices unless you think it's outrageous what other people are willing to pay. If no one was willing to pay 600 dollars for a card then NVIDIA wouldn't make a card for that and developers wouldn't bother putting features in their games that require a video card that costs that much.

Spunjji - Friday, January 10, 2020 - link

People were willing to pay $600 - a small minority of them, but still. $350 is where most of the market is at. $1199 is an objectively outrageous price for a high-end consumer graphics card, and it is outrageous that people are willing to pay that.

As a result, most developers haven't yet bothered putting RTX-exclusive features in their games. The most popular card by far is the 2060, which can't really *do* RTX in any meaningful sense.

Yojimbo - Friday, January 10, 2020 - link

Your opinion is that it is outrageous. However, it is their money. It's not that developers "haven't bothered" to put RT features in their games, it's that it takes time and money since it's a new technology. But what in the world does it have to do with the discussion, except possibly to form an argument against the idea that NVIDIA is charging "outrageous prices"? Raytracing is an innovation. Because of NVIDIA's push, you will eventually have raytracing at least 2 years earlier than otherwise. That's more valuable than 6 months of price/performance lag. 2060 can do RTX. There's nothing that says you need to run your games at 80 FPS. 40 FPS is just fine. But, it's the choice of the individual purchaser.

Korguz - Friday, January 10, 2020 - link

"There's nothing that says you need to run your games at 80 FPS. 40 FPS is just fine. But, it's the choice of the individual purchaser." Tell that to those who keep saying that for games, the Intel CPUs are the ones to get because of the higher clocks, and how they want 100+ fps. Say 40 fps is fine to them and they would laugh at you. Ray tracing on current RTX hardware isn't fast enough to use unless you go high end, 2070 or 2080, because the performance hit is too much for those who want a minimum of 80 fps, and more than 100 most of the time. And those 2070s and 2080s are priced way too high; I think the 2070s start at around $800 CDN here. Way too expensive.