The Intel 12th Gen Core i9-12900K Review: Hybrid Performance Brings Hybrid Complexity

by Dr. Ian Cutress & Andrei Frumusanu on November 4, 2021 9:00 AM EST

Power: P-Core vs E-Core, Win10 vs Win11

For Alder Lake, Intel brings two new things into the mix when we start talking about power.

First is what we’ve already talked about, the new P-core and E-core, each with different levels of performance per watt and targeted at different sorts of workloads. While the P-cores are expected to mimic previous generations of Intel processors, the E-cores should offer an interesting look into how low power operation might work on these systems and in future mobile systems.

The second element is how Intel is describing power. Rather than simply quote a ‘TDP’, or Thermal Design Power, Intel has decided (with much rejoicing) to start putting two numbers next to each processor: one for base processor power and one for maximum turbo processor power, which we’ll call Base and Turbo. The idea is that Base power mimics the TDP value we had before: it’s the power at which the all-core base frequency is guaranteed. Turbo power indicates the highest power level that should be observed in a normal power-virus situation (a power virus usually being defined as something that causes 90-95% of the CPU to switch continually). There is usually a weighted time factor that limits how long a processor can remain in its Turbo state before slowly reeling back, but for the K processors Intel has made that time factor effectively infinite: with the right cooling, these processors should be able to use their Turbo power all day, all week, and all year.
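To make the Base/Turbo relationship and the "weighted time factor" more concrete, here is a minimal Python sketch of a simplified power-limit governor. It is an assumption modelled loosely on the classic behaviour where an exponentially weighted moving average of package power is compared against the Base limit, with `tau_s` playing the role of the time factor; it is not Intel's firmware algorithm. The 125 W Base figure is the i9-12900K's rated base power and 241 W its Turbo power; the 56 s time window and the `power_trace` helper are illustrative and hypothetical.

```python
from typing import List, Optional


def power_trace(demand_w: float, seconds: int, base_w: float, turbo_w: float,
                tau_s: Optional[float] = None, dt: float = 1.0) -> List[float]:
    """Simplified Base/Turbo governor (hypothetical model, not Intel's firmware).

    The CPU may draw up to turbo_w while the running average of granted
    power stays below base_w; once the average reaches base_w it is held
    at base_w. tau_s=None models an effectively infinite time window.
    """
    trace = []
    avg = 0.0  # exponentially weighted moving average of granted power
    for _ in range(int(seconds / dt)):
        if tau_s is None or avg < base_w:
            limit = turbo_w
        else:
            limit = base_w
        granted = min(demand_w, limit)
        if tau_s is not None:
            # Move the average a fraction dt/tau_s toward the newest sample.
            avg += (granted - avg) * (dt / tau_s)
        trace.append(granted)
    return trace


# A power-virus style load that would draw 260 W if nothing limited it.
unlimited = power_trace(260, 600, base_w=125, turbo_w=241)
limited = power_trace(260, 600, base_w=125, turbo_w=241, tau_s=56)
print(unlimited[0], unlimited[-1])  # 241 241
print(limited[0], limited[-1])      # 241 125
```

With `tau_s=None` the trace never leaves the Turbo limit, which corresponds to the "all day, all week" behaviour of the K processors; with a finite window the same load falls back to Base power in under one time constant.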

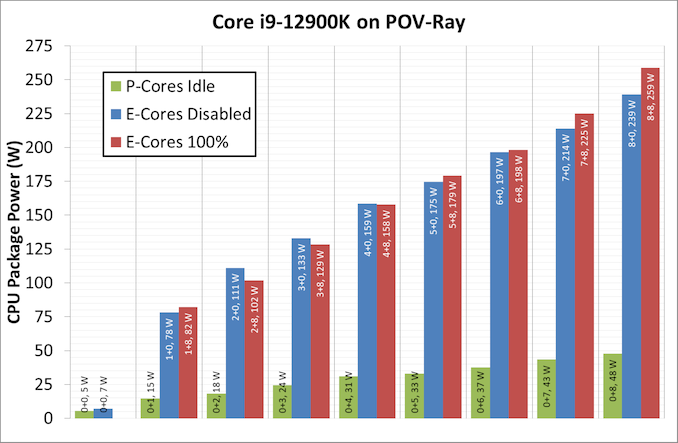

So with that in mind, let’s start by simply looking at the individual P-cores and E-cores.

In this test, listed in red, with all 8P+8E cores fully loaded (on DDR5) we get a CPU package power of 259 W. The progression from idle to load is steady, although there is a big jump from idle to a single core: when one core is loaded, package power goes from 7 W to 78 W, a 71 W jump. Because this is package power (the core power output had some issues), it includes firing up the ring, the L3 cache, and the DRAM controller, but even if those account for 20% of the difference, we’re still looking at ~55-60 W for a single core. By comparison, in our single-thread SPEC power testing on Linux we see a more modest 25-30 W per core, which we put down to POV-Ray’s instruction density.
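The single-core estimate above is simple arithmetic on the package-power readings, sketched below in Python. The 20% uncore share is the same rough allowance used in the text, not a measured split, and `per_core_estimate` is a hypothetical helper name.

```python
# Package-power figures from the POV-Ray measurements above (DDR5).
IDLE_W = 7
ONE_P_CORE_W = 78
UNCORE_SHARE = 0.20  # assumed share for ring, L3 and DRAM controller


def per_core_estimate(idle_w: float, loaded_w: float, uncore_share: float) -> float:
    """Estimate one core's power from a package-power delta, discounting uncore."""
    delta = loaded_w - idle_w
    return delta * (1 - uncore_share)


est = per_core_estimate(IDLE_W, ONE_P_CORE_W, UNCORE_SHARE)
print(f"{ONE_P_CORE_W - IDLE_W} W jump -> ~{est:.1f} W for the core")
# prints "71 W jump -> ~56.8 W for the core"
```

Even with a generous uncore allowance the result lands inside the ~55-60 W range, roughly double the 25-30 W seen in SPEC.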

By contrast, in green, the E-cores only jump from 5 W to 15 W when a single core is active, which is the same figure we see in our SPEC power testing. Loading all eight E-cores at 3.9 GHz brings package power up to 48 W total.
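Putting the P-core and E-core package-power numbers side by side makes the gap easier to see. This Python sketch only reuses the figures quoted above; the per-E-core average is a rough derived number that still includes the shared uncore, and the idle baselines differ slightly between the two runs.

```python
# Package-power figures quoted above; idle differs slightly between the runs.
P_IDLE_W, P_ONE_CORE_W = 7, 78
E_IDLE_W, E_ONE_CORE_W, E_ALL_CORES_W = 5, 15, 48
E_CORES = 8

p_jump = P_ONE_CORE_W - P_IDLE_W   # first P-core: 71 W
e_jump = E_ONE_CORE_W - E_IDLE_W   # first E-core: 10 W
# Average package power added per E-core with all eight loaded (uncore included).
e_avg_per_core = (E_ALL_CORES_W - E_IDLE_W) / E_CORES

print(f"single-core jump ratio P/E: {p_jump / e_jump:.1f}x")
print(f"average per E-core with all loaded: {e_avg_per_core:.3f} W")
```

The average per E-core with all eight loaded (about 5.4 W) is lower than the 10 W first-core jump, which is consistent with the first active core also paying for waking the shared uncore.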

It is worth noting that there are differences between the blue bars (P-cores only) and the red bars (all cores, with E-cores loaded all the time), and that the blue bar sometimes shows higher power than the red bar. Our blue bar tests were done with E-cores disabled in the BIOS, which means there might be more leeway in balancing a workload across a smaller number of cores, allowing for higher power. However, as everything ramps up, the advantage seems to swing the other way. It’s a bit odd to see this behavior.

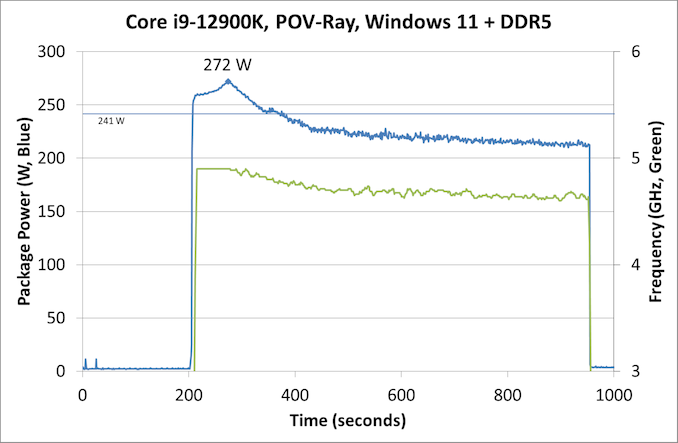

Moving on to individual tests, here’s a power trace of POV-Ray in Windows 11:

Here we see a higher power spike, now up to 272 W, with the system at 4.9 GHz all-core. Interestingly, power then decreases through the 241 W Turbo Power limit and settles around 225 W, with the reported frequency dropping to between 4.7 and 4.8 GHz. Technically this all-core figure is meant to take into account some of the E-cores, so this might be a case of the workload distributing itself and finding the best performance/power point when it comes to instruction mix, cache mix, and IO requirements. If that’s the case, however, it takes a good 3-5 minutes to get there.
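One way to put a number on "how long it takes to get there" is to find the first sample after which the trace stays under the Turbo limit for a sustained period. The Python sketch below does that on a synthetic 1 Hz trace that only mimics the shape described above (spike, a few minutes above 241 W, then ~225 W); it is not the measured data, and `settle_index` and its `hold` window are hypothetical choices.

```python
from typing import Optional, Sequence


def settle_index(trace_w: Sequence[float], limit_w: float, hold: int) -> Optional[int]:
    """Return the index where power first stays at or below limit_w for
    `hold` consecutive samples, or None if it never does."""
    run = 0
    for i, p in enumerate(trace_w):
        run = run + 1 if p <= limit_w else 0
        if run >= hold:
            return i - hold + 1
    return None


# Synthetic 1 Hz trace (illustrative only, not measured data).
trace = [272] * 10 + [250] * 200 + [225] * 300
idx = settle_index(trace, limit_w=241, hold=60)
print(idx)  # 210, i.e. 3.5 minutes at one sample per second
```

Requiring a sustained `hold` window, rather than the first sample below the limit, keeps brief dips during the spike from being counted as the settle point.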

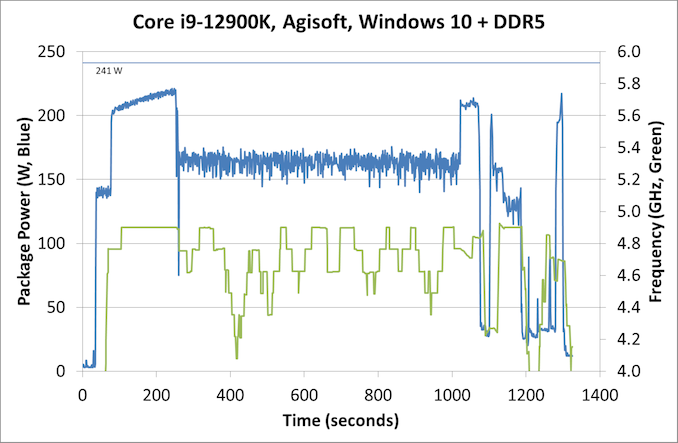

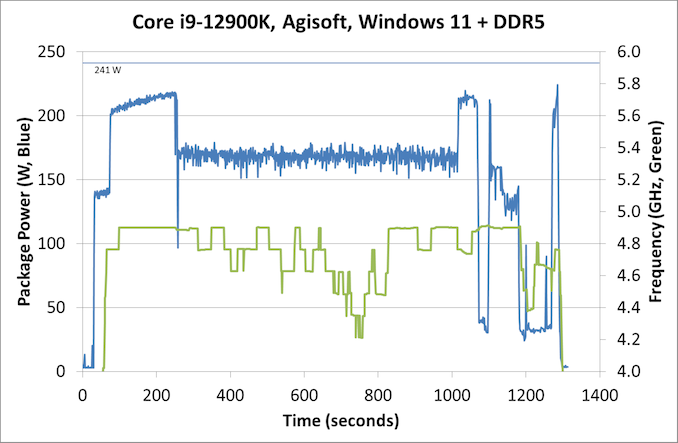

Intrigued by this, I looked at how some of our other tests behaved across operating systems. Enter Agisoft:

Between Windows 10 and Windows 11, the traces look nearly identical. The Windows 11 run was 5 seconds faster out of 20 minutes, or 0.4%, which we would consider run-to-run variation. The peaks and spikes look marginally higher in Windows 11, and its frequency trace looks a little more consistent, but overall the two are practically the same.
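The 0.4% figure is the time saved divided by the run length. The Python sketch below shows that calculation; the 1% noise band in `within_noise` is a hypothetical threshold for illustration, not a figure from our testing methodology.

```python
def percent_faster(baseline_s: float, saved_s: float) -> float:
    """Time saved as a percentage of the baseline run time."""
    return 100.0 * saved_s / baseline_s


def within_noise(baseline_s: float, saved_s: float, noise_pct: float = 1.0) -> bool:
    """True if the difference falls inside an assumed run-to-run noise band."""
    return percent_faster(baseline_s, saved_s) < noise_pct


run_s = 20 * 60  # roughly 20-minute Agisoft run
print(f"{percent_faster(run_s, 5):.2f}%")  # 0.42%
print(within_noise(run_s, 5))              # True
```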

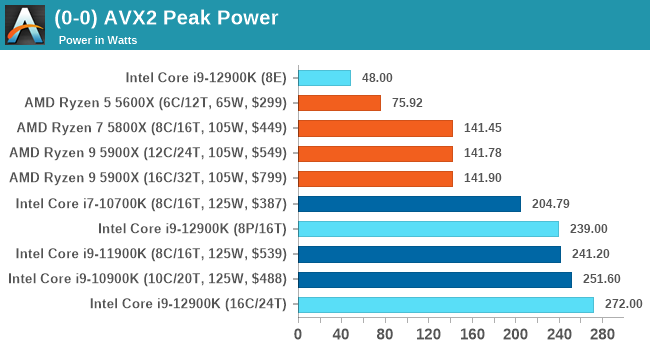

For our usual power graphs, we get something like this, and we’ll also add in the AVX-512 numbers from that page:

Compared to Intel’s previous 11th Generation processor, the Alder Lake Core i9 uses more power during AVX2 but is actually lower in AVX-512. What will make this graph harder to present in the future is those E-cores: they're more efficient, as you’ll see in the results later. Even in AVX-512, Alder Lake pulls out a performance lead while using 50 W less than 11th Gen.

When we compare it to AMD, however, with AMD's 142 W PPT limit, Intel often trails by 20-70 W when we’re looking at full-load efficiency. That being said, Intel is likely to argue that in mixed workloads, such as two programs running at once with one of them on the E-cores, it aims to be the more efficient design.

474 Comments


Maxiking - Thursday, November 4, 2021

Let's be real, no one expected anything else, the first time Intel can use a different node that still has its problems and AMD is embarrassed and slower again. Lol, once Intel starts making their GPUs using TSMC, AMD back to being slowest there too.

LOL what a pathetic company

factual - Thursday, November 4, 2021

Why disparage AMD!? The harder these companies compete, the better for consumers! Stop being a mindless fanboi of any company and start thinking rationally and become a fan of your own pocketbook!

Fulljack - Thursday, November 4, 2021

so, get this, you're fine with Intel stuck giving you max 4c/8t for nearly 6 years? really?

Maxiking - Thursday, November 4, 2021

You must be from the certain sub called AMD. I bought a 6 six for 390 USD in 2014, a 5820k.

Cry more. LOL

Maxiking - Thursday, November 4, 2021

*a 6 core

TheinsanegamerN - Thursday, November 4, 2021

Don't forget their 6 core 980x in 2009.

"But but but it wasn't a CONSUMER (read: cheap enough) CPU!!!!!!"

- THE AMDrones who forget that AMD sold a 6 core phenom that lost game benchmarks to a dual core i3 and then spent the next 5 years selling fake octo core chips.

Spunjji - Friday, November 5, 2021

"But but but it wasn't a CONSUMER (read: cheap enough) CPU!!!!!!"

Correct, there's a difference between a CPU that needs a consumer-level dual-channel memory platform and a workstation-grade triple-channel (or greater) platform. It doesn't sound so absurd if you don't strawman it with emotive nonsense and/or pretending that Used prices are the same as New.

I don't think anybody's forgotten how much better Intel's CPUs were from Core 2 all the way through to Skylake. The fact remains that when AMD returned to competition, they did so by opening up entirely new performance categories in desktop and notebook chips that Intel had previously restricted to HEDT hardware. I don't really understand why Intel fanbots like Maxiking feel so pressed about it, but they do, and it's kind of amusing to see them externalise it.

Qasar - Friday, November 5, 2021

too bad that was hedt, not mainstream, that's where your beloved intel stagnated the cpu market, maxipadking, maybe you should cry more. intel's non hedt lineup was so confusing i gave up trying to decide which to get, so i just grabbed a 5830k and an asus x99 deluxe, and was done with it.

lmcd - Friday, November 5, 2021

The current street prices of $600+ for high-end desktop CPUs are comparable to HEDT prices. Let's face it, the HEDT market is underserved right now as a cost-saving measure (make them spend more for bandwidth) and not because the segmentation was bad.

In sum, my opinion is that ignoring the HEDT line doesn't make much sense because segmenting the market was a good thing for consumers. Most people didn't end up needing the advantages that the platform provided before that era got excised with Windows 11 (unrelated rant not included). That provided a cost savings.

Qasar - Friday, November 5, 2021

"The current street prices of $600+ for high-end desktop CPUs are comparable to HEDT prices" i didn't say current, as you saw, i mentioned X99, which came out in what, 2014?

"Let's face it, the HEDT market is underserved right now as a cost-saving measure" more like intel can't compete in that space due to (possibly) not being able to make a chip that big with acceptable yields, so they just haven't bothered. maybe that is also why their desktop cpus maxed out at 8 cores before gen 12, as they couldn't make anything bigger.

"doesn't make much sense because segmenting the market was a good thing for consumers" oh but it is bad, intel has pretty much always had way too many cpus for sale, i spent i think 2 days trying to decide which cpu to get back then, and it gave me a headache. i would have been fine with z97 i think it was with the I/O and PCIe lanes, but trying to figure out which cpu is where the headache came from, the prices were so close between each that i gave up, spent a little more, and picked up x99 and a 5930k (i said 5830k above, but meant 5930k) and that system lasted me until early last year.