The Intel 12th Gen Core i9-12900K Review: Hybrid Performance Brings Hybrid Complexity

by Dr. Ian Cutress & Andrei Frumusanu on November 4, 2021 9:00 AM EST

Fundamental Windows 10 Issues: Priority and Focus

In a normal scenario, software running on a computer expects all cores to be equal, such that any thread can go anywhere and get the same performance. As we’ve already discussed, the new Alder Lake design of performance cores and efficiency cores means that not everything is equal, and the system has to know where to put each workload for maximum effect.

To this end, Intel created Thread Director, which acts as the ultimate information depot for what is happening on the CPU. It knows what threads are where, what each of the cores can do, how compute heavy or memory heavy each thread is, and where the thermal hot spots and voltages come into play. With that information, it sends data to the operating system about how the threads are operating, with suggestions of actions to perform, or which threads can be promoted/demoted in the event of something new coming in. The operating system scheduler is then the ringmaster: it combines the Thread Director information with what it knows about the user (what software is in the foreground, what threads are tagged as low priority), and it is the operating system that actually orchestrates the whole process.

Intel has said that Windows 11 does all of this. The only thing Windows 10 doesn’t have is insight into the efficiency of the cores on the CPU. It assumes the efficiency is equal, but the performance differs – so instead of ‘performance vs efficiency’ cores, Windows 10 sees it more as ‘high performance vs low performance’. Intel says the net result of this will be seen only in run-to-run variation: there’s more of a chance of a thread spending some time on the low performance cores before being moved to high performance, and so anyone benchmarking multiple runs will see more variation on Windows 10 than Windows 11. But ultimately, the peak performance should be identical.

However, there are a couple of flaws.

At Intel’s Innovation event last week, we learned that the operating system will de-emphasise any workload that is not in user focus. For an office workload, or a mobile workload, this makes sense – if you’re in Excel, for example, you want Excel to be on the performance cores, and those 60 Chrome tabs you have open are all considered background tasks for the efficiency cores. The same goes for email, Netflix, or video games – what you are using there and then matters most, and everything else doesn’t really need the CPU.

However, this breaks down when it comes to more professional workflows. Intel gave an example of a content creator exporting a video and, while that was processing, going to edit some images. This puts the video export on the efficiency cores, while the image editor gets the performance cores. In my experience, the limiting factor in that scenario is the video export, not the image editor – what should take a unit of time on the P-cores now suddenly takes 2-3x as long on the E-cores while I’m doing something else. This extends to anyone who multi-tasks during a heavy workload, such as programmers waiting for the latest compile. Under this philosophy, the user would have to keep the important window in focus at all times. Beyond this, any software that spawns heavy compute threads in the background, without the potential for focus, would also be placed on the E-cores.

Personally, I think this is a crazy way to do things, especially on a desktop. Intel tells me there are three ways to stop this behaviour:

- Running dual monitors stops it

- Changing Windows Power Plan from Balanced to High Performance stops it

- There’s an option in the BIOS that, when enabled, means the Scroll Lock can be used to disable/park the E-cores, meaning nothing will be scheduled on them when the Scroll Lock is active.

(For those that are interested in Alder Lake confusing some DRM packages like Denuvo, #3 can also be used in that instance to play older games.)

For users that only have one window open at a time, or aren’t relying on any serious all-core time-critical workload, it won’t really affect them. For anyone else, it’s a bit of a problem. And the problems don’t stop there, at least for Windows 10.

Knowing my luck by the time this review goes out it might be fixed, but:

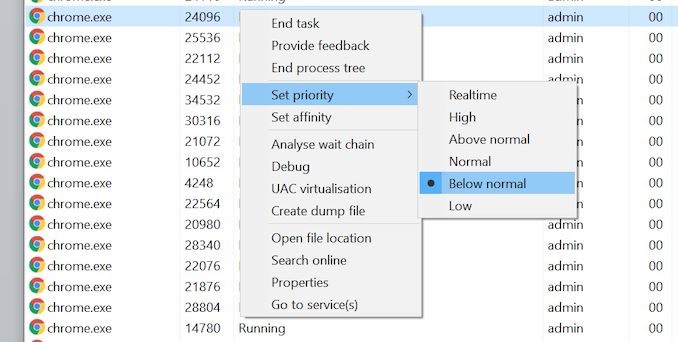

Windows 10 also uses the thread’s in-OS priority as a guide for core scheduling. For any users that have played around with the task manager, there is an option to give a program a priority: Realtime, High, Above Normal, Normal, Below Normal, or Idle. The default is Normal. Behind the scenes this is actually a number from 0 to 31, where Normal is 8.

Some software will naturally give itself a lower priority, usually a 7 (below normal), as an indication to the operating system of either ‘I’m not important’ or ‘I’m a heavy workload and I want the user to still have a responsive system’. This second reason is an issue on Windows 10, as with Alder Lake it will schedule the workload on the E-cores. So even if it is a heavy workload, moving to the E-cores will slow it down, compared to simply being across all cores but at a lower priority. This is regardless of whether the program is in focus or not.
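
To make the 0 to 31 numbering concrete, here is a minimal Python sketch of how a thread's base priority is derived from the process priority class and the thread's relative priority level. The numbers follow Microsoft's documented scheduling priority table as I understand it; the names `CLASS_BASE`, `THREAD_OFFSET` and `base_priority` are illustrative and not part of any Windows API. It shows one way the "7" mentioned above can arise: a Normal-class process (8) whose thread is set one step below normal.

```python
# Base priority of each Windows priority class (Task Manager names).
CLASS_BASE = {
    "Idle": 4,
    "Below Normal": 6,
    "Normal": 8,
    "Above Normal": 10,
    "High": 13,
    "Realtime": 24,
}

# Relative thread priority levels, expressed as offsets from the class base.
THREAD_OFFSET = {
    "lowest": -2,
    "below_normal": -1,
    "normal": 0,
    "above_normal": 1,
    "highest": 2,
}


def base_priority(priority_class, thread_level="normal"):
    """Return the 0-31 base priority for a thread (illustrative helper)."""
    # The two extreme thread levels saturate instead of applying an offset.
    if thread_level == "idle":
        return 16 if priority_class == "Realtime" else 1
    if thread_level == "time_critical":
        return 31 if priority_class == "Realtime" else 15
    return CLASS_BASE[priority_class] + THREAD_OFFSET[thread_level]


if __name__ == "__main__":
    print(base_priority("Normal"))                  # default: 8
    print(base_priority("Normal", "below_normal"))  # one step down: 7
    print(base_priority("Below Normal"))            # whole process lowered: 6
```

Either route to a value below 8 (lowering the whole process class, or lowering individual threads) would presumably be read by the scheduler as "less important", which is the behaviour described above.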

Of the normal benchmarks we run, this issue flared up mainly with the rendering tasks like Cinebench, Corona, POV-Ray, but also happened with y-cruncher and KeyShot (a visualization tool). In speaking to others, it appears that sometimes Chrome has a similar issue. The only way to fix these programs was to go into task manager and either (a) change the thread priority to Normal or higher, or (b) change the thread affinity to only P-cores. Software such as Process Lasso can be used to make sure that every time these programs are loaded, the priority is bumped up to normal.
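
For option (b), an affinity setting is ultimately a bitmask with one bit per logical processor. The sketch below builds such masks in Python. It rests on a loudly stated assumption: that on a Core i9-12900K with Hyper-Threading enabled, the 16 P-core threads are enumerated as logical processors 0-15 and the 8 E-cores as 16-23. That ordering should be verified on the actual system before relying on it; the helper name `affinity_mask` is hypothetical.

```python
def affinity_mask(logical_cpus):
    """Build a bitmask with one bit set per logical processor index."""
    mask = 0
    for cpu in logical_cpus:
        mask |= 1 << cpu
    return mask


# ASSUMPTION: P-core threads come first (0-15), E-cores follow (16-23).
# Check the real enumeration on your own machine before using these ranges.
P_CORE_CPUS = range(0, 16)
E_CORE_CPUS = range(16, 24)

p_mask = affinity_mask(P_CORE_CPUS)
e_mask = affinity_mask(E_CORE_CPUS)

if __name__ == "__main__":
    print(hex(p_mask))  # 0xffff
    print(hex(e_mask))  # 0xff0000
```

A mask like `p_mask` is the kind of value that the Win32 `SetProcessAffinityMask` call takes, and that the Task Manager affinity dialog sets through checkboxes.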

474 Comments

Wrs - Friday, November 5, 2021 - link

Not sure if you know what TCO is. It includes electricity and some $ allotment for software support of the hardware. Most end users don't directly deal with the latter (how many $ is a compatibility headache?) and don't own a watt-meter to calculate the former.

That said, what's wrong with reusing DDR4, adapting an AM4 cooler with an LGA 1700 bracket, and reusing the typically oversized PSU? AT shows DDR4 lagging, but most DDR4 out there is way faster than 3200 CL20. That's why other review sites say DDR4 wins most benches. No reasonable user buys a 12900k or 12600k to pair with JEDEC RAM timings!

Really the only cost differentiator is the CPU + mobo. ADL and Zen3 are on very similar process nodes. One is not markedly more efficient than the other unless the throttle is pushed very differently, or in edge cases where their architectural differences matter.

isthisavailable - Friday, November 5, 2021 - link

Wake me up when intel stop K series nonsense and their motherboards stop costing twice as much as AMD only to be useless when next gen chips arrive.

mode_13h - Friday, November 5, 2021 - link

Intel always gives you 2 CPU generations on any new socket. The only exception to that we've seen was Haswell, due to their cancellation of desktop Broadwell.

mode_13h - Friday, November 5, 2021 - link

And besides, they had to change the socket for PCIe 5.0 and DDR5, if not also other reasons.

This wasn't like the "fake" change between Kaby Lake and Coffee Lake (IIRC, some industrial mobo maker actually produced a board that could support CPUs all the way from Skylake to Coffee Lake R).

Oxford Guy - Friday, November 5, 2021 - link

So...Are we no longer going to see all the benchmark hype over AVX-512 in consumer CPU reviews?

mode_13h - Friday, November 5, 2021 - link

I think we will. They devoted a whole page to it, and benchmarked it anyway.

And Ian's 3DPM benchmark is still a black box. No one knows precisely what it measures, or that it casts AVX2 performance in a fair light. I will call for him to opensource it, for as long as he continues using it.

alpha754293 - Friday, November 5, 2021 - link

Note for the editorial team:

On the page titled: "Power: P-Core vs E-Core, Win10 vs Win11" (4th page), the last graph on the page has AMD Ryzen 9 5900X twice.

The second 5900X I think is supposed to be the 5950X.

Just letting you know.

Thanks.

GeoffreyA - Friday, November 5, 2021 - link

For those proclaiming the funeral of AMD, remember, this is like a board game. Intel is now ahead. When AMD moves, they'll be ahead. Ad infinitum.

As for Intel, well done. Golden Cove is solid, and I'm glad to see a return to form. I expected Alder Lake to be a disaster, but this was well executed. Glad, too, it's beating AMD. For the sake of pricing and IPC, we need the two to give each other a good hiding, alternately. Next, may Zen 3+ and 4 send Alder and Raptor back to the dinosaur age! And may Intel then thrash Zen 4 with whatever they're baking in their labs! Perhaps this is the sort of tick-tock we need.

mode_13h - Friday, November 5, 2021 - link

> Intel is now ahead.

Let's see how it performs within the same power envelope as AMD. That'll tell us if they're truly ahead, or if they're still dependent on burning more Watts for their performance lead.

GeoffreyA - Saturday, November 6, 2021 - link

Oh yes. Under the true metric of performance per watt, AMD is well ahead, Alder Lake taking something to the effect of 60-95% more power to achieve 10-20% more performance than Ryzen. And under that light, one would argue it's not a success. Still, seeing all those blue bars, I give it the credit and feel it won the day.

Unfortunately for Intel and fans, this is not the end of AMD. Indeed, Lisa Su and her team should celebrate: Golden Cove shows just how efficient Zen is. A CPU with 4-wide decode and 256-entry ROB, among other things, is on the heels of one with 6-wide decode and a 512-entry ROB. That ought to be troubling to Pat and the team. Really, the only way I think they can remedy this is by designing a new core from scratch or scaling Gracemont to target Zen 4 or 5.