SSD versus Enterprise SAS and SATA disks

by Johan De Gelas on March 20, 2009 2:00 AM EST. Posted in IT Computing

I/O Meter Performance

IOMeter is an open source tool, originally developed by Intel, that can measure I/O performance in almost any way you can imagine. You can test random or sequential accesses (or a combination of the two), read or write operations (or a combination of the two), in blocks from a few KB to several MB. Whatever the goal, IOMeter can generate a workload and measure how fast the I/O system performs.

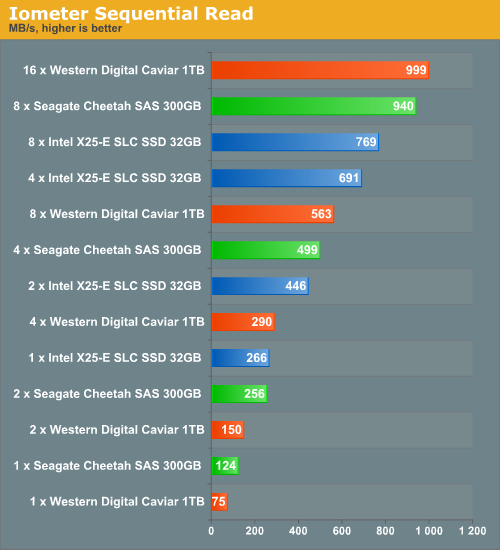

First, we evaluate the best scenario a magnetic disk can dream of: purely sequential access to a 20GB file. We are forced to use a relatively small file as our SLC SSD drives are only 32GB. Again, that's the best scenario imaginable for our magnetic disks, as we use only the outer tracks that have the most sectors and thus the highest sustained transfer rates.
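To make the difference between access patterns concrete, here is a minimal Python sketch of a read test. It is not IOMeter and not the configuration used for these results; the function name `measure_read_throughput`, the 64KB block size, and the small temporary file are assumptions chosen for illustration. Reads go through the operating system's page cache, so the numbers it prints say little about the underlying disk unless the file is much larger than RAM.

```python
import os
import random
import tempfile
import time


def measure_read_throughput(path, block_size=64 * 1024, sequential=True, seed=0):
    """Read every block of `path` once and return throughput in MB/s.

    Illustrative only: reads go through the OS page cache, so the number
    reflects cached reads unless the file is much larger than RAM.
    """
    file_size = os.path.getsize(path)
    offsets = list(range(0, file_size, block_size))
    if not sequential:
        # Same blocks in shuffled order: approximates a random-access pattern.
        random.Random(seed).shuffle(offsets)

    bytes_read = 0
    start = time.perf_counter()
    with open(path, "rb", buffering=0) as f:
        for offset in offsets:
            f.seek(offset)
            bytes_read += len(f.read(block_size))
    elapsed = time.perf_counter() - start
    return bytes_read / 1e6 / max(elapsed, 1e-9)


if __name__ == "__main__":
    # Small hypothetical test file (8 MB); the article used a 20GB file.
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(os.urandom(8 * 1024 * 1024))
    try:
        print("sequential MB/s:", round(measure_read_throughput(tmp.name), 1))
        print("random MB/s:", round(measure_read_throughput(tmp.name, sequential=False), 1))
    finally:
        os.remove(tmp.name)
```

On a magnetic disk with a cold cache, the shuffled order forces a seek for almost every block, which is why the purely sequential case is the most favorable one for such disks.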

The Intel SLC SSD delivers more than it promised: we measure 266 MB/s instead of the promised 250 MB/s. Still, purely sequential loads do not make the expensive and small SSDs attractive: it takes only two SAS disks or four SATA disks to match one SLC SSD. As the SAS disks are 10 times larger and the SATA drives 30 times larger, it is unlikely that we'll see a video streaming fileserver using SSDs any time soon.
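The arithmetic behind that comparison can be sketched in a few lines of Python. The measured and promised rates, the 32GB SSD capacity, the disk counts, and the capacity ratios come from the text; the per-disk rates are only implied values that assume a set of disks scales perfectly, and the function names are hypothetical.

```python
SSD_MEASURED_MBPS = 266  # sequential rate measured in the article
SSD_PROMISED_MBPS = 250  # rate promised by the vendor, per the article
SSD_CAPACITY_GB = 32     # capacity of the SLC SSD, per the article


def over_spec_percent(measured, promised):
    """How far the measured rate exceeds the promised rate, in percent."""
    return (measured / promised - 1) * 100


def matching_set(disks, capacity_ratio):
    """Implied per-disk rate and total capacity of a set that matches one SSD.

    Assumes the set scales perfectly, so the per-disk figure is an estimate
    derived from the article's ratios, not a measured value.
    """
    per_disk_mbps = SSD_MEASURED_MBPS / disks
    total_capacity_gb = disks * capacity_ratio * SSD_CAPACITY_GB
    return per_disk_mbps, total_capacity_gb


if __name__ == "__main__":
    print(f"SSD over spec: {over_spec_percent(SSD_MEASURED_MBPS, SSD_PROMISED_MBPS):.1f}%")
    # (disks needed to match one SSD, capacity relative to the SSD), from the article
    for kind, (disks, ratio) in {"SAS": (2, 10), "SATA": (4, 30)}.items():
        mbps, capacity = matching_set(disks, ratio)
        print(f"{kind}: about {mbps:.1f} MB/s per disk, {capacity} GB per matching set")
```

Under these assumptions, the magnetic sets that match one SSD on sequential throughput offer 20 times (SAS) and 120 times (SATA) its capacity.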

Our Adaptec controller is clearly not taking full advantage of the SLC SSD's bandwidth: we only see a very small improvement going from four to eight disks. We assume that this is a SATA related issue, as eight SAS disks have no trouble reaching almost 1GB/s. This is the first sign of a RAID controller bottleneck. However, you can hardly blame Adaptec for not focusing on reaching the highest transfer rates with RAID 0: it is a very rare scenario in a business environment. Few people use a completely unsafe eight drive RAID 0 set and it is only now that there are disks capable of transferring 250 MB/s and more.

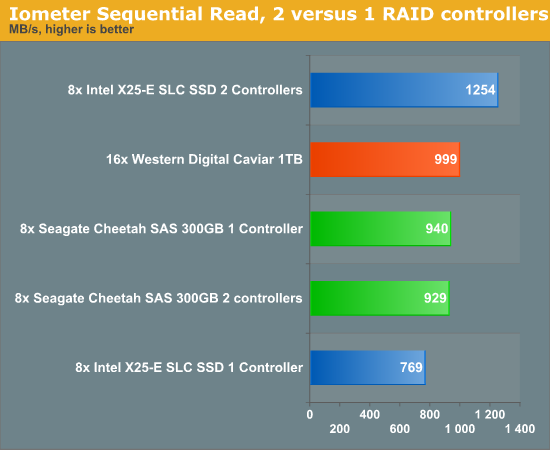

The 16 SATA disks reach the highest transfer rate with two of our Adaptec controllers. To investigate the impact of the RAID controller a bit further, we attached four of our SLC drives to one Adaptec controller and four on another one. First is a picture of the setup, and then the results.

The results are quite amazing: performance improves by more than 60% with four SSDs on each of two controllers compared to eight X25-E SSDs on one controller. We end up with a RAID system that is capable of transferring 1.2GB/s.
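A rough Python sketch shows what these figures imply about scaling. It assumes that 1.2GB/s means 1200 MB/s and takes the single-drive result of 266 MB/s as the ideal per-drive rate; the helper names are hypothetical, and the single-controller bound is derived from the "more than 60%" statement rather than read from the chart.

```python
SINGLE_SSD_MBPS = 266        # single-drive sequential result from the article
TWO_CONTROLLER_MBPS = 1200   # "1.2GB/s", assuming 1 GB = 1000 MB
SSD_COUNT = 8                # four SSDs on each of two controllers


def scaling_efficiency(total_mbps, drives, single_mbps):
    """Fraction of ideal linear scaling actually achieved."""
    return total_mbps / (drives * single_mbps)


def baseline_upper_bound(improved_mbps, min_improvement):
    """Highest baseline consistent with an improvement of at least `min_improvement`."""
    return improved_mbps / (1 + min_improvement)


if __name__ == "__main__":
    per_drive = TWO_CONTROLLER_MBPS / SSD_COUNT
    efficiency = scaling_efficiency(TWO_CONTROLLER_MBPS, SSD_COUNT, SINGLE_SSD_MBPS)
    ceiling = baseline_upper_bound(TWO_CONTROLLER_MBPS, 0.60)
    print(f"per-drive rate: {per_drive:.0f} MB/s")
    print(f"scaling efficiency: {efficiency:.0%}")
    print(f"single-controller result was below about {ceiling:.0f} MB/s")
```

Even with two controllers, each drive delivers well under its standalone rate, which suggests the controllers still limit the array to some degree.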

67 Comments

Rasterman - Monday, March 23, 2009 - link

since the controller is the bottleneck for ssd and you have very fast cpus, did you try testing a full software raid array, just leave the controllers out of it altogether?

Snarks - Sunday, March 22, 2009 - link

reading the comments made my brain asplode D:!

Damn it, it's way too late for this!

pablo906 - Saturday, March 21, 2009 - link

I've loved the stuff you put out for a long long time. This is another piece of quality work. I definitely appreciate the work you put into this stuff. I was thinking about how I was going to build the storage back end for a small/medium virtualization platform and this is definitely swaying some of my previous ideas. It really seems like an EMC enclosure may be in our future instead of something built by me on a 24 Port Areca Card.

I don't know what all the hubbub was about at the beginning of the article but I can tell you that I got what I needed. I'd like to see some follow ups in Server Storage and definitely more Raid 6 info. Any chance you can do some serious Raid Card testing? That enclosure you have is perfect for it (I've built some pretty serious storage solutions out of those and 24 port Areca cards) and I'd really like to see different cards and different configurations, numbers of drives, array types, etc. tested.

rbarone69 - Friday, March 20, 2009 - link

Great work on these benchmarks. I have found very few other sources that provided me with the answers to my questions regarding exactly what you tested here (DETAILED ENOUGH FOR ME). This report will be referenced when we size some of our smaller (~40-50GB but heavily read) central databases we run within our enterprise.

It saddens me to see people that simply will NEVER be happy, no matter what you publish to them for no cost to them. Fanatics have their place but generally cost organizations much more than open minded employees willing to work with what they have available.

JohanAnandtech - Saturday, March 21, 2009 - link

Thanks for your post. A "thumbs up" post like yours is the fuel that Tijl and I need to keep going :-). Definitely appreciated!

classy - Friday, March 20, 2009 - link

Nice work and no question ssds are truly great performers, but I don't see them being mainstream for several more years in the enterprise world. One is that no one knows how reliable they are. They are not tried and tested. Two and three go hand in hand, capacity and cost. With the need for more and more storage, the cost for ssd makes them somewhat of a one trick pony, a lot of speed, but cost prohibitive. Just at our company we are looking at a separate data domain just for storage. When you start talking about the need for several terabytes, ssd just isn't going to be considered. It's the future, but until they drastically reduce in cost and increase in capacity, their adoption will be minimal at best. I don't think speed right now trumps capacity in the enterprise world.

virtualgeek - Friday, March 27, 2009 - link

They are well past being "untried" in the enterprise - and we are now shipping 400GB SLC drives.

gwolfman - Friday, March 20, 2009 - link

[quote]Our Adaptec controller is clearly not taking full advantage of the SLC SSD's bandwidth: we only see a very small improvement going from four to eight disks. We assume that this is a SATA related issue, as eight SAS disks have no trouble reaching almost 1GB/s. This is the first sign of a RAID controller bottleneck.[/quote]

I have an Adaptec 3805 (the generation before the one you used) that I used to test 4 of OCZ's first SSDs when they came out and I noticed this same issue as well. I went through a lengthy support ticket cycle and got little help and no explanation. I was left thinking it was the firmware, as 2 SAS drives had a higher throughput than the 4 SSDs.

supremelaw - Friday, March 20, 2009 - link

For the sake of scientific inquiry primarily, but not exclusively, another experimental "permutation" I would also like to see is a comparison of:

(1) 1 x8 hardware RAID controller in a PCI-E 2.0 x16 slot

(2) 1 x8 hardware RAID controller in a PCI-E 1.0 x16 slot

(3) 2 x4 hardware RAID controllers in a PCI-E 2.0 x16 slot

(4) 2 x4 hardware RAID controllers in a PCI-E 1.0 x16 slot

(5) 2 x4 hardware RAID controllers in a PCI-E 2.0 x4 slot

(6) 2 x4 hardware RAID controllers in a PCI-E 1.0 x4 slot

(7) 4 x1 hardware RAID controllers in a PCI-E 2.0 x1 slot

(8) 4 x1 hardware RAID controllers in a PCI-E 1.0 x1 slot

* if x1 hardware RAID controllers are not available, then substitute x1 software RAID controllers instead, to complete the experimental matrix.

If the controllers are confirmed to be the bottlenecks for certain benchmarks, the presence of multiple I/O processors -- all other things being more or less equal -- should tell us that IOPs generally need more horsepower, particularly when solid-state storage is being tested.

Another limitation to face is that x1 PCI-E RAID controllers may not work in multiples installed in the same motherboard, e.g. see Highpoint's product here:

http://www.newegg.com/Product/Product.aspx?Item=N8...

Now, add different motherboards to the experimental matrix above, because different chipsets are known to allocate fewer PCI-E lanes even though slots have mechanically more lanes, e.g. only x4 lanes actually assigned to an x16 PCI-E slot.

MRFS

supremelaw - Friday, March 20, 2009 - link

More complete experimental matrix (see shorter matrix above):

(1) 1 x8 hardware RAID controller in a PCI-E 2.0 x16 slot

(2) 1 x8 hardware RAID controller in a PCI-E 1.0 x16 slot

(3) 2 x4 hardware RAID controllers in a PCI-E 2.0 x16 slot

(4) 2 x4 hardware RAID controllers in a PCI-E 1.0 x16 slot

(5) 1 x8 hardware RAID controller in a PCI-E 2.0 x8 slot

(6) 1 x8 hardware RAID controller in a PCI-E 1.0 x8 slot

(7) 2 x4 hardware RAID controllers in a PCI-E 2.0 x8 slot

(8) 2 x4 hardware RAID controllers in a PCI-E 1.0 x8 slot

(9) 2 x4 hardware RAID controllers in a PCI-E 2.0 x4 slot

(10) 2 x4 hardware RAID controllers in a PCI-E 1.0 x4 slot

(11) 4 x1 hardware RAID controllers in a PCI-E 2.0 x1 slot

(12) 4 x1 hardware RAID controllers in a PCI-E 1.0 x1 slot

MRFS
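The configurations in the matrix above differ mainly in theoretical link bandwidth, which a short Python sketch can tabulate. The per-lane figures are the nominal PCI-E 1.0 and 2.0 rates per direction (250 and 500 MB/s after encoding overhead); the helper name is hypothetical, and the sketch assumes each controller sits in its own slot and that the slot is electrically wired with its full lane count, which the comment above notes is not always the case.

```python
# Theoretical per-lane, per-direction bandwidth after 8b/10b encoding overhead.
PER_LANE_MBPS = {"1.0": 250, "2.0": 500}


def link_bandwidth_mbps(controllers, controller_lanes, slot_lanes, generation):
    """Aggregate theoretical bandwidth of `controllers` identical cards.

    Assumes each card sits in its own slot and that the slot is electrically
    wired with `slot_lanes` lanes; a link runs at the narrower of the two widths.
    """
    lanes = min(controller_lanes, slot_lanes)
    return controllers * lanes * PER_LANE_MBPS[generation]


# (controllers, lanes per controller, lanes per slot) from the matrix above
CONFIGS = [(1, 8, 16), (2, 4, 16), (1, 8, 8), (2, 4, 8), (2, 4, 4), (4, 1, 1)]

if __name__ == "__main__":
    for controllers, card_lanes, slot_lanes in CONFIGS:
        for generation in ("2.0", "1.0"):
            total = link_bandwidth_mbps(controllers, card_lanes, slot_lanes, generation)
            print(f"{controllers} x{card_lanes} in PCI-E {generation} x{slot_lanes}: {total} MB/s")
```

By this estimate only the four x1 PCI-E 1.0 links (1000 MB/s in total) fall below the 1.2GB/s the article measured, so in the other configurations any bottleneck would more likely lie in the controller itself than in the slot.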