The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Intel never quite reached 4GHz with the Pentium 4. Despite being on a dedicated quest for gigahertz, the company stopped short and the best we ever got was 3.8GHz. Within a year the clock (no pun intended) was reset and we were all running Core 2 Duos at under 3GHz. With each subsequent generation Intel inched those clock speeds higher, but preferred to gain performance through efficiency rather than frequency.

Today, Intel quietly finishes what it started nearly a decade ago. When running a single threaded application, the Core i7-2600K will power gate three of its four cores and turbo the fourth core as high as 3.8GHz. Even with two cores active, the 32nm chip can run them both up to 3.7GHz. The only thing keeping us from 4GHz is a lack of competition to be honest. Relying on single-click motherboard auto-overclocking alone, the 2600K is easily at 4.4GHz. For those of you who want more, 4.6-4.8GHz is within reason. All on air, without any exotic cooling.

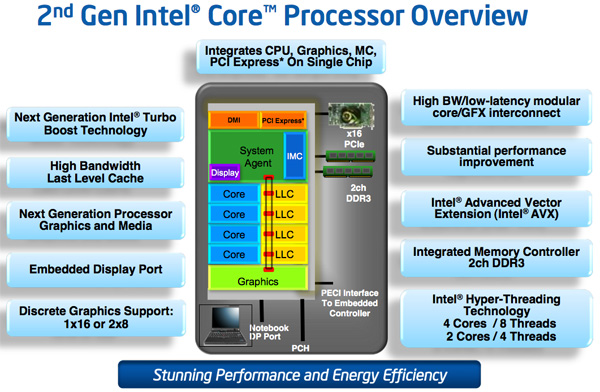

Unlike Lynnfield, Sandy Bridge isn’t just about turbo (although Sandy Bridge’s turbo modes are quite awesome). Architecturally it’s the biggest change we’ve seen since Conroe, although looking at a high level block diagram you wouldn’t be able to tell. Architecture width hasn’t changed, but internally SNB features a complete redesign of the Out of Order execution engine, a more efficient front end (courtesy of the decoded µop cache) and a very high bandwidth ring bus. The L3 cache is also lower latency and the memory controller is much faster. I’ve gone through the architectural improvements in detail here. The end result is better performance all around. For the same money as you would’ve spent last year, you can expect anywhere from 10-50% more performance in existing applications and games from Sandy Bridge.

I mentioned Lynnfield because the performance mainstream quad-core segment hasn’t seen an update from Intel since its introduction in 2009. Sandy Bridge is here to fix that. The architecture will be available, at least initially, in both dual and quad-core flavors for mobile and desktop (our full look at mobile Sandy Bridge is here). By the end of the year we’ll have a six core version as well for the high-end desktop market, not to mention countless Xeon branded SKUs for servers.

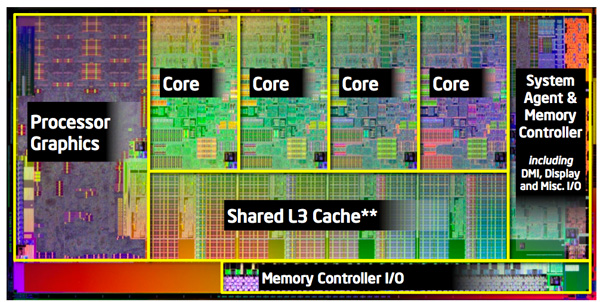

The quad-core desktop Sandy Bridge die clocks in at 995 million transistors. We’ll have to wait for Ivy Bridge to break a billion in the mainstream. Encompassed within that transistor count are 114 million transistors dedicated to what Intel now calls Processor Graphics. Internally it’s referred to as the Gen 6.0 Processor Graphics Controller or GT for short. This is a DX10 graphics core that shares little in common with its predecessor. Like the SNB CPU architecture, the GT core architecture has been revamped and optimized to increase IPC. As we mentioned in our Sandy Bridge Preview article, Intel’s new integrated graphics is enough to make $40-$50 discrete GPUs redundant. For the first time since the i740, Intel is taking 3D graphics performance seriously.

CPU Specification Comparison

| CPU | Manufacturing Process | Cores | Transistor Count | Die Size |
|---|---|---|---|---|
| AMD Thuban 6C | 45nm | 6 | 904M | 346mm² |
| AMD Deneb 4C | 45nm | 4 | 758M | 258mm² |
| Intel Gulftown 6C | 32nm | 6 | 1.17B | 240mm² |
| Intel Nehalem/Bloomfield 4C | 45nm | 4 | 731M | 263mm² |
| Intel Sandy Bridge 4C | 32nm | 4 | 995M | 216mm² |
| Intel Lynnfield 4C | 45nm | 4 | 774M | 296mm² |
| Intel Clarkdale 2C | 32nm | 2 | 384M | 81mm² |
| Intel Sandy Bridge 2C (GT1) | 32nm | 2 | 504M | 131mm² |
| Intel Sandy Bridge 2C (GT2) | 32nm | 2 | 624M | 149mm² |
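One way to read the table is as transistor density, i.e. transistors per unit of die area. The short Python sketch below does that arithmetic using only the figures listed in the table; the function names are illustrative, and the result should be taken as a rough comparison only, since vendors do not necessarily count transistors the same way and cache is denser than logic.

```python
# Figures copied from the CPU Specification Comparison table.
# Each entry: (name, transistor count in millions, die size in mm^2)
CHIPS = [
    ("AMD Thuban 6C", 904, 346),
    ("AMD Deneb 4C", 758, 258),
    ("Intel Gulftown 6C", 1170, 240),
    ("Intel Nehalem/Bloomfield 4C", 731, 263),
    ("Intel Sandy Bridge 4C", 995, 216),
    ("Intel Lynnfield 4C", 774, 296),
    ("Intel Clarkdale 2C", 384, 81),
    ("Intel Sandy Bridge 2C (GT1)", 504, 131),
    ("Intel Sandy Bridge 2C (GT2)", 624, 149),
]


def density(transistors_m, die_mm2):
    """Return millions of transistors per square millimetre."""
    return transistors_m / die_mm2


def ranked_by_density(chips):
    """Return (name, density) pairs, densest die first."""
    rows = [(name, density(t, area)) for name, t, area in chips]
    return sorted(rows, key=lambda row: row[1], reverse=True)


if __name__ == "__main__":
    for name, d in ranked_by_density(CHIPS):
        print(f"{name:30s} {d:5.2f}M transistors/mm^2")
```

On these numbers the 32nm parts cluster at the top of the ranking and the 45nm parts at the bottom, which is what you would expect from a process shrink.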

It’s not all about hardware either. Game testing and driver validation actually have real money behind them at Intel. We’ll see how this progresses over time, but graphics at Intel today is very different than it has ever been.

Despite the heavy spending on an on-die GPU, the focus of Sandy Bridge is still improving CPU performance: each core requires 55 million transistors. A complete quad-core Sandy Bridge die measures 216mm², only 2mm² larger than the old Core 2 Quad 9000 series (but much, much faster).
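The transistor figures quoted so far (995 million total, 114 million for graphics, 55 million per core) imply a rough budget for the rest of the die. Here is a minimal Python sketch of that arithmetic. The "other" bucket is simply whatever is left over; reading it as L3 cache, ring bus, memory controller and I/O is an assumption, not a breakdown Intel has provided here.

```python
# Figures quoted in the article for the quad-core Sandy Bridge die.
TOTAL_M = 995      # total transistors, in millions
GPU_M = 114        # transistors for Processor Graphics, in millions
PER_CORE_M = 55    # transistors per CPU core, in millions
CORES = 4


def transistor_budget(total, gpu, per_core, cores):
    """Split the die's transistor count into cores, GPU and everything else.

    "other" is the remainder after cores and GPU; what it contains
    (cache, ring bus, memory controller, I/O) is assumed, not sourced.
    """
    core_total = per_core * cores
    other = total - gpu - core_total
    return {"cores": core_total, "gpu": gpu, "other": other}


def shares(budget):
    """Return each bucket as a fraction of the whole die."""
    total = sum(budget.values())
    return {name: count / total for name, count in budget.items()}


if __name__ == "__main__":
    budget = transistor_budget(TOTAL_M, GPU_M, PER_CORE_M, CORES)
    for name, frac in shares(budget).items():
        print(f"{name:6s} {budget[name]:4d}M  {frac:6.1%}")
```

By this estimate the four cores account for 220 million transistors (about 22% of the die), the GPU for about 11%, and roughly two thirds is left for everything else.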

As a concession to advancements in GPU computing, rather than build SNB’s GPU into a general purpose compute monster, Intel outfitted the chip with a small amount of fixed function hardware to enable hardware video transcoding. The marketing folks at Intel call this Quick Sync technology. And for the first time I’ll say that the marketing name doesn’t do the technology justice: Quick Sync puts all previous attempts at GPU accelerated video transcoding to shame. It’s that fast.

There’s also the overclocking controversy. Sandy Bridge is all about integration and thus the clock generator has been moved off of the motherboard and on to the chipset, where its frequency is almost completely locked. BCLK overclocking is dead. Thankfully for some of the chips we care about, Intel will offer fully unlocked versions for the enthusiast community. And these are likely the ones you’ll want to buy. Here’s a preview of what’s to come:

The lower end chips are fully locked. We had difficulty recommending most of the Clarkdale lineup and I wouldn’t be surprised if we have that same problem going forward at the very low-end of the SNB family. AMD will be free to compete for marketshare down there just as it is today.

With the CPU comes a new platform as well. In order to maintain its healthy profit margins, Intel breaks backwards compatibility (and thus avoids validation) with existing LGA-1156 motherboards: Sandy Bridge requires a new LGA-1155 motherboard equipped with a 6-series chipset. You can re-use your old heatsinks, however.

Clarkdale (left) vs. Sandy Bridge (right)

The new chipset brings 6Gbps SATA support (2 ports) but still no native USB 3.0. That’ll be a 2012 thing it seems.

283 Comments

auhgnist - Monday, January 17, 2011 - link

For example, between i3-2100 and i7-2600?

timminata - Wednesday, January 19, 2011 - link

I was wondering, does the integrated GPU provide any benefit if you're using it with a dedicated graphics card anyway (GTX470) or would it just be idle?

James5mith - Friday, January 21, 2011 - link

Just thought I would comment with my experience. I am unable to get bluray playback, or even CableCard TV playback with the Intel integrated graphics on my new I5-2500K w/ Asus Motherboard. Why you ask? The same problem Intel has always had, it doesn't handle the EDID's correctly when there is a receiver in the path between it and the display.

To be fair, I have an older Westinghouse Monitor, and an Onkyo TX-SR606. But the fact that all I had to do was reinstall my HD5450 (which I wanted to get rid of when I did the update to SandyBridge) and all my problems were gone kind of points to the fact that Intel still hasn't gotten it right when it comes to EDID's, HDCP handshakes, etc.

So sad too, because otherwise I love the upgraded platform for my HTPC. Just wish I didn't have to add-in the discrete graphics.

palenholik - Wednesday, January 26, 2011 - link

As i could understand from article, you have used just this one software for all these testings. And I understand why. Is it enough to conclude that CUDA causes bad or low picture quality.

I am very interested and do researches over H.264 and x264 encoding and decoding performance, especially over GPU. I have tested Xilisoft Video Converter 6, that supports CUDA, and i didn't problems with low quality picture when using CUDA. I did these test on nVidia 8600 GT and for TV station that i work for. I was researching for solution to compress video for sending over internet with low or no quality loss.

So, could it be that Arcsoft Media Converter co-ops bad with CUDA technology?

And must notice here how well AMD Phenom II x6 performs well comparable to nVidia GTX 460. This means that one could buy MB with integrated graphics and AMD Phenom II x6 and have very good encoding performances in terms of speed and quality. Though, Intel is winner here no doubt, but jumping from sck. to sck. and total platform changing troubles me.

Nice and very useful article.

ellarpc - Wednesday, January 26, 2011 - link

I'm curious why bad company 2 gets left out of Anand's CPU benchmarks. It seems to be a CPU dependent game. When I play it all four cores are nearly maxed out while my GPU barely reaches 60% usage. Where most other games seem to be the opposite.

Kidster3001 - Friday, January 28, 2011 - link

Nice article. It cleared up much about the new chips I had questions on.

A suggestion. I have worked in the chip making business. Perhaps you could run an article on how bin-splits and features are affected by yields and defects. Many here seem to believe that all features work on all chips (but the company chooses to disable them) when that is not true. Some features, such as virtualization, are excluded from SKU's for a business reason. These are indeed disabled by the manufacturer inside certain chips (they usually use chips where that feature is defective anyway, but can disable other chips if the market is large enough to sell more). Other features, such as less cache or lower speeds are missing from some SKU's because those chips have a defect which causes that feature to not work or not to run as fast in those chips. Rather than throwing those chips away, companies can sell them at a cheaper price. i.e. Celeron -> 1/2 the cache in the chip doesn't work right so it's disabled.

It works both ways though. Some of the low end chips must come from better chips that have been down-binned, otherwise there wouldn't be enough low-end chips to go around.

katleo123 - Tuesday, February 1, 2011 - link

It is not expected to compete Core i7 processors to take its place.

Sandy bridge uses fixed function processing to produce better graphics using the same power consumption as Core i series.

visit http://www.techreign.com/2010/12/intels-sandy-brid...

jmascarenhas - Friday, February 4, 2011 - link

Problem is we need to choose between using integrated GPU where we have to choose a H67 board or do some over clocking with a P67. I wonder why we have to make this option... this just means that if we dont do gaming and the 3000 is fine we have to go for the H67 and therefore cant OC the processor.....

jmascarenhas - Monday, February 7, 2011 - link

and what about those who want to OC and dont need a dedicated Graphic board??? I understand Intel wanting to get money out of early adopters, but dont count on me.

fackamato - Sunday, February 13, 2011 - link

Get the K version anyway? The internal GPU gets disabled when you use an external GPU AFAIK.