NVIDIA Tegra 4 Architecture Deep Dive, Plus Tegra 4i, Icera i500 & Phoenix Hands On

by Anand Lal Shimpi & Brian Klug on February 24, 2013 3:00 PM EST

Ever since NVIDIA arrived on the SoC scene, it has done a great job of introducing its ultra mobile SoCs. Tegra 2 and 3 were both introduced with a healthy amount of detail and the sort of collateral we expect to see from any PC silicon vendor. While the rest of the mobile space is slowly playing catchup, NVIDIA continued the trend with its Tegra 4 and Tegra 4i architecture disclosure.

Since Tegra 4i is a bit further out, most of today’s disclosure focused on NVIDIA’s flagship Tegra 4 SoC, due to begin shipping in Q2 of this year along with the NVIDIA i500 baseband. At a high level you’re looking at a quad-core ARM Cortex A15 (plus fifth A15 companion core) and a 72-core GeForce GPU. To understand Tegra 4 at a lower level, we’ll dive into the individual blocks beginning, as usual, with the CPU.

ARM’s Cortex A15 and Power Consumption

Tegra 4’s CPU complex sees a significant improvement over Tegra 3. Despite being an ARM architecture licensee, NVIDIA once again licensed a complete processor from ARM rather than designing its own core. I do fundamentally believe that NVIDIA will go the full custom route eventually (see: Project Denver), but that’s a goal that will take time to come to fruition.

In the case of Tegra 4, NVIDIA chose to license ARM’s Cortex A15 - the only vanilla ARM core presently offered that can deliver higher performance than a Cortex A9.

Samsung recently disclosed details about its Cortex A15 implementation compared to the Cortex A7, a similarly performing but more power efficient alternative to the A9. In its ISSCC paper on the topic Samsung noted that the Cortex A15 offered up to 3x the performance of the Cortex A7, at 4x the area and 6x the power consumption. It’s a tremendous performance advantage for sure, but it comes at a great cost to area and power consumption. The area side isn’t as important, since NVIDIA has to eat that cost, but power consumption is a valid concern.
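To put Samsung's ratios in perspective, here is a minimal sketch of the arithmetic. It assumes the "up to" figures quoted above (3x performance, 4x area, 6x power) all apply at the same operating point, which the paper may not guarantee; the function name `relative_efficiency` is made up for illustration.

```python
def relative_efficiency(perf_ratio, area_ratio, power_ratio):
    """Return performance per watt and per unit area, relative to the baseline core.

    All three inputs are ratios of the big core to the small core.
    """
    return {
        "perf_per_watt": perf_ratio / power_ratio,
        "perf_per_area": perf_ratio / area_ratio,
    }


# Cortex A15 relative to Cortex A7, using the ratios quoted from Samsung's ISSCC paper.
a15_vs_a7 = relative_efficiency(perf_ratio=3.0, area_ratio=4.0, power_ratio=6.0)

print(f"perf per watt: {a15_vs_a7['perf_per_watt']:.2f}x")  # 0.50x
print(f"perf per area: {a15_vs_a7['perf_per_area']:.2f}x")  # 0.75x
```

Under that assumption, the A15 delivers half the performance per watt of the A7 at its peak, which is why the power side of the tradeoff matters more than the area side.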

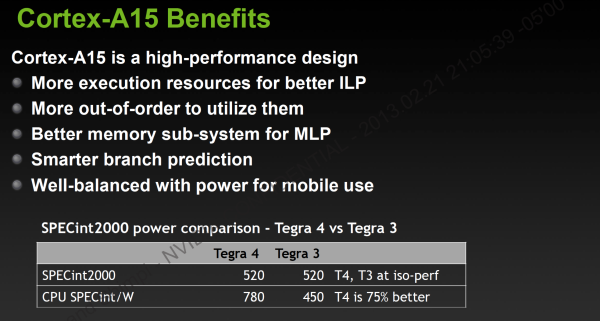

To ease fears about power consumption, NVIDIA provided the following data:

The table above is a bit confusing so let me explain. In the first row NVIDIA is showing that it has configured the Tegra 3 and 4 platforms to deliver the same SPECint_base 2000 performance. SPECint is a well respected CPU benchmark that stresses everything from the CPU core to the memory interface. The int at the end of the name implies that we’re looking at purely single threaded integer performance.

The second row shows us the SPECint per watt of the Tegra 3/4 CPU subsystem, when running at the frequencies required to deliver a SPECint score of 520. By itself this doesn’t tell us a whole lot, but we can use this data to get some actual power numbers.

At the same performance level, Tegra 4 operates at 40% lower power than Tegra 3. The comparison is unfortunately not quite apples to apples as we’re artificially limiting Tegra 4’s peak clock speed, while running Tegra 3 at its highest, most power hungry state. The clocks in question are 1.6GHz for Tegra 3 and 825MHz for Tegra 4. Running at lower clocks allows you to run at a lower voltage, which results in much lower power consumption. In other words, NVIDIA’s comparison is useful but skewed in favor of Tegra 4.
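The link between performance per watt and the 40% figure can be sketched as follows. Power is simply score divided by score per watt, so at equal performance a power reduction translates directly into an efficiency ratio. The per-watt values from NVIDIA's table are not reproduced here, so the baseline below is normalized to 1.0 and is not a measured number; the function names are hypothetical.

```python
def power_watts(score, score_per_watt):
    """Convert a benchmark score and a score-per-watt figure into watts."""
    return score / score_per_watt


def implied_efficiency_gain(power_reduction):
    """Perf-per-watt ratio (new/old) implied by a fractional power cut at equal performance."""
    if not 0.0 <= power_reduction < 1.0:
        raise ValueError("power_reduction must be in [0, 1)")
    return 1.0 / (1.0 - power_reduction)


# 40% lower power at the same SPECint score, as stated in the article.
gain = implied_efficiency_gain(0.40)
print(f"implied perf/W advantage: {gain:.2f}x")  # 1.67x

# Sanity check with a normalized baseline (1.0 score per watt is a placeholder unit).
baseline = power_watts(520, 1.0)
improved = power_watts(520, 1.0 * gain)
print(f"relative power: {improved / baseline:.2f}")  # 0.60
```

In other words, a 40% power cut at a fixed score corresponds to roughly a 1.67x advantage in SPECint per watt, whatever the absolute figures are.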

What this data does tell us however is exactly how NVIDIA plans on getting Tegra 4 into a phone: by aggressively limiting frequency. If a Cortex A15 at 825MHz delivers identical performance at a lower power compared to a 40nm Cortex A9 at 1.6GHz, it’s likely possible to deliver a marginal performance boost without breaking the power bank.
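The reason limiting frequency is so effective can be illustrated with the usual first-order CMOS dynamic power model, where power scales with capacitance times voltage squared times frequency. This is only a sketch: it ignores leakage, it applies to a single design rather than a comparison across different cores or processes, and the 20% voltage reduction below is a hypothetical value chosen for illustration, not an NVIDIA figure.

```python
def relative_dynamic_power(freq_ratio, voltage_ratio):
    """Dynamic power relative to a baseline, using P ~ C * V^2 * f.

    Capacitance cancels out because the same chip is compared with itself.
    """
    return freq_ratio * voltage_ratio ** 2


# Halve the clock, and assume (hypothetically) that this permits 20% lower voltage.
scaled = relative_dynamic_power(freq_ratio=0.5, voltage_ratio=0.8)
print(f"relative dynamic power: {scaled:.2f}")  # 0.32

# Halving the clock without touching voltage only halves dynamic power.
print(f"frequency only: {relative_dynamic_power(0.5, 1.0):.2f}")  # 0.50
```

The quadratic voltage term is what makes a capped clock disproportionately cheap in power terms, which fits the strategy of keeping a phone SoC well below its peak frequency.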

That 825MHz mark ends up being an important number, because that’s where the fifth companion Cortex A15 tops out. I suspect that in a phone configuration NVIDIA might keep everything running on the companion core for as long as possible, which would address my fears about typical power consumption in a phone. I think peak power consumption is still going to be a problem.

75 Comments

TheJian - Monday, February 25, 2013

http://www.anandtech.com/show/6472/ipad-4-late-201...

ipad4 scored 47 vs. 57 for T4 in egypt hd offscreen 1080p. I'd say it's more than competitive with ipad4. T4 scores 2.5x iphone5 in geekbench (4148 vs. 1640). So it's looking like it trumps A6 handily.

http://www.pcmag.com/article2/0,2817,2415809,00.as...

T4 should beat 600 in Antutu and browsermark. IF S800 is just an upclocked cpu and adreno 330 this is going to be a tight race in browsermark and a total killing for NV in antutu. 400mhz won't make up the 22678 for HTC ONE vs. T4's 36489. It will fall far short in antutu unless the gpu means a lot more than I think in that benchmark. I don't think S600 will beat T4 in anything. HTC ONE only uses 1.7ghz; the spec sheet at QCOM says it can go up to 1.9ghz, but that won't save it from the beating it took according to pcmag. They said this:

"The first hint we've seen of Qualcomm's new generation comes in some benchmarks done on the HTC One, which uses Qualcomm's new 1.7-GHz Snapdragon 600 chipset - not the 800, but the next notch down. The Tegra 4 still destroys it."

Iphone5 got destroyed too. Geekbench on T4=4148 vs. iphone5=1640. OUCH.

Note samsung/qualcomm haven't let PC mag run their own benchmarks on Octa or S800. Nvidia is showing no signs of fear here. Does anyone have data on the cpu in Snapdragon800? Is the 400cpu in it just a 300cpu clocked up 400mhz because of the process or is it actually a different core? It kind of looks like this is just 400mhz more on the cpu with an adreno330 on top instead of 320 of S600.

http://www.qualcomm.com/snapdragon/processors/800-...

https://www.linleygroup.com/newsletters/newsletter...

"The Krait 300 provides new microarchitecture improvements that increase per-clock performance by 10–15% while pushing CPU speed from 1.5GHz to 1.7GHz. The Krait 400 extends the new microarchitecture to 2.3GHz by switching to TSMC's high-k metal gate (HKMG) process."

Anyone have anything showing the cpu is MORE than just 400mhz more on a new process? This sounds like no change in the chip itself. That article was Jan23 and Gwennap is pretty knowledgeable. Admittedly I didn't do a lot of digging yet (can't find much on 800 cpu specs, did most of my homework on S600 since it comes first).

We need some Rogue 6 data now too :) Lots of posts on the G6100 in the last 18hrs...Still reading it all... ROFL (MWC is causing me to do a lot of reading today...). About 1/2 way through and most of it seems to just brag about opengl es3.0 and DX11.1 (not seeing much about perf). I'm guessing because NV doesn't have it on T4 :) It's not used yet, so I don't care but that's how I'd attack T4 in the news ;) Try running something from DX11.1 on a soc and I think we'll see a slide show (think crysis3 on a soc...LOL). I'd almost say the same for all of es3.0 being on. NV was wise to save die space here and do a simpler chip that can undercut prices of others. They're working on DX9_3 features in WinRT (hopefully MS will allow it). OpenGL ES3.0 & DX11.1 will be more important next xmas. Game devs won't be aiming at $600 phones for their games this xmas, they'll aim at mass market for the most part just like on a pc (where they aim at consoles DX9, then we get ports...LOL). It's a rare game that's aimed at GTX680/7970ghz and up. Crysis3? Most devs shoot far lower.

http://www.imgtec.com/corporate/newsdetail.asp?New...

No perf bragging just features...Odd while everyone else brags vs. their own old versions or other chips.

Qcom CMO goes all out:

http://www.phonearena.com/news/Qualcomm-CMO-disses...

"Nvidia just launched their Tegra 4, not sure when those will be in the market on a commercial basis, but we believe our Snapdragon 600 outperforms Nvidia’s Tegra 4. And we believe our Snapdragon 800 completely outstrips it and puts a new benchmark in place.

So, we clean Tegra 4′s clock. There’s nothing in Tegra 4 that we looked at and that looks interesting. Tegra 4 frankly, looks a lot like what we already have in S4 Pro..."

OOPS...I guess he needs to check the perf of tegra4 again. PCmag shows his 600 chip got "DESTROYED" and all other competition "crushed". Why is Imagination not bragging about perf of G6100? Is it all about feature/api's without much more power? Note that page from phonearena is having issues (their server is) as I had to get it out of google cache just now from earlier. He's a marketing guy from Intel so you know, a "blue crystals" kind of guy :) The CTO would be bragging about perf I think if he had it. Anand C is fluff marketing guy from Intel (but he has a masters in engineering, he's just marketing it appears now and NOT throwing around data just "i believe" comments). One last note, Exynos octa got kicked out of Galaxy S4 because it overheated the phone according to the same site. So Octa is tablet only I guess? Galaxy S4 is a superphone and octa didn't work in it if what they said is true (rumored 1.9ghz rather than 1.7ghz HTC ONE version).

fteoath64 - Wednesday, February 27, 2013

@TheJian: "ipad4 scored 47 vs. 57 for T4 in egypt hd offscreen 1080p. I'd say it's more than competitive with ipad4. T4 scores 2.5x iphone5 in geekbench (4148 vs. 1640). So it's looking like it trumps A6 handily."

Good reference! This shows T4 doing what it ought to in the tablet space as Apple's CPU release cycle tends to be 12 to 18 months, giving Nvidia lots of breathing room. Besides, since Qualcomm launched all their new ranges, the next cycle is going to be a while. However, Qualcomm has so many design wins on their Snapdragons, it leaves little room for Nvidia and others to play. Is this why TI went out of this market? So could Amazon be a candidate for T4i on their next tablet update?

PS: The issue with Apple putting quad PVR544 into iPad was to ensure the performance overall with retina is up to par with the non-retina version. Especially the Mini, which is among the fastest tablets out there considering it needs to push less than a million pixels while delivering a good 10 hours of use.

mayankleoboy1 - Tuesday, February 26, 2013

Hey AnandTech, you never told us what is the "Project Thor", that JHH let slip at CES..

CeriseCogburn - Thursday, February 28, 2013

This is how it goes for nVidia from, well we know whom at this point, meaning, it appears everyone here.

"I have to give NVIDIA credit, back when it introduced Tegra 3 I assumed its 4+1 architecture was surely a gimmick and to be very short lived. I remember asking NVIDIA’s Phil Carmack point blank at MWC 2012 whether or not NVIDIA would standardize on four cores for future SoCs. While I expected a typical PR response, Phil surprised me with an astounding yes. NVIDIA was committed to quad-core designs going forward. I still didn’t believe it, but here we are in 2013 with NVIDIA’s high-end and mainstream roadmaps both exclusively featuring quad-core SoCs. NVIDIA remained true to its word, and the more I think about it, the more the approach makes sense."

paraphrased: "They're lying to me, they lie, lie, lie, lie, lie. (pass a year or two or three) Oh my it wasn't a lie."

Rinse and repeat often and in overlapping fashion.

Love this place, and no one learns.

Here's a clue: It's AMD that has been lying its yapper off to you for years on end.

Origin64 - Tuesday, March 12, 2013

Wow. 120mbps LTE? I get a fifth of that through a cable at home.