AMD Launches Carrizo: The Laptop Leap of Efficiency and Architecture Updates

by Ian Cutress on June 2, 2015 9:00 PM EST

The Platform

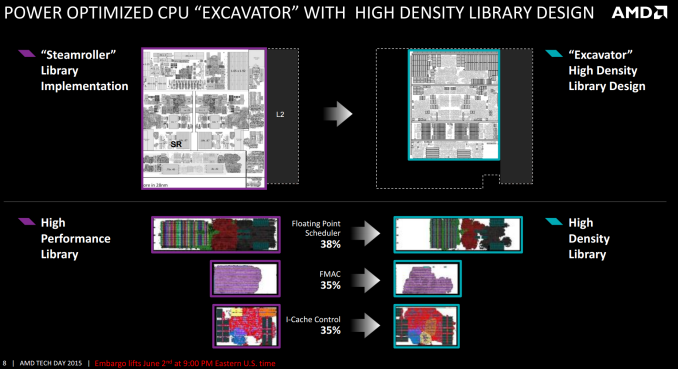

From a design perspective, Carrizo is the biggest departure in AMD’s APU line since the introduction of Bulldozer cores. While the underlying principle of two INT pipes and a shared FP pipe between dual schedulers is still present, the fundamental design behind the cores, the caches and the libraries has changed. Some of this was presented at ISSCC, and we will revisit it here.

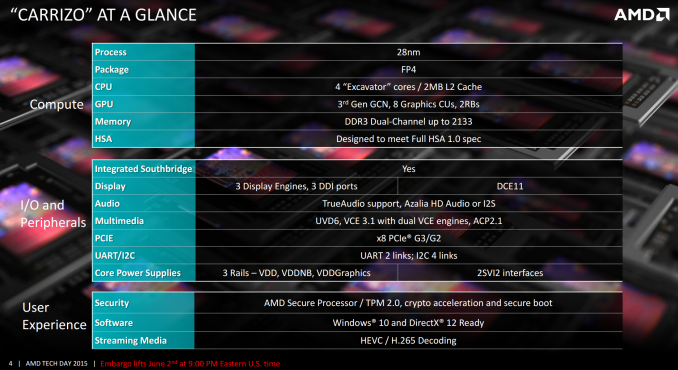

At a high level, Carrizo will be made on the 28nm node using a tapered metal stack more akin to a GPU design than a CPU design. It uses the new FP4 package, which it shares with Carrizo-L, the new but as yet unreleased lower-power platform based on AMD’s ‘Cat’ cores that will target similar markets with lower-cost systems. The two FP4 platforms are designed to be almost plug-and-play compatible, simplifying designs for OEMs. All Carrizo APUs currently have four Excavator cores arranged as two modules (more commonly referred to as a dual-module design), and as a result the overall design has 2MB of L2 cache.
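To make the module arithmetic concrete, here is a minimal Python sketch of the dual-module layout described above. The class and field names are hypothetical, and the 1MB of L2 per module is an assumption inferred from the 2MB total spread across two modules. It only models counts, not any microarchitectural behaviour.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExcavatorModule:
    # Hypothetical model of one module: two integer cores (each with its own
    # scheduler and INT pipes) sharing a single floating-point unit.
    int_cores: int = 2
    shared_fp_units: int = 1
    l2_mb: int = 1  # assumption: 2MB total L2 / 2 modules


@dataclass(frozen=True)
class CarrizoConfig:
    modules: tuple[ExcavatorModule, ...]

    @property
    def cores(self) -> int:
        # Advertised "cores" count the integer cores in each module.
        return sum(m.int_cores for m in self.modules)

    @property
    def fp_units(self) -> int:
        return sum(m.shared_fp_units for m in self.modules)

    @property
    def l2_total_mb(self) -> int:
        return sum(m.l2_mb for m in self.modules)


carrizo = CarrizoConfig(modules=(ExcavatorModule(), ExcavatorModule()))
print(carrizo.cores, carrizo.fp_units, carrizo.l2_total_mb)  # 4 2 2
```

Four cores therefore map onto only two FP units, which is the trade-off the shared-FP module design makes.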

Each Carrizo APU will feature AMD’s Graphics Core Next 1.2 architecture, listed above as 3rd Gen GCN, with up to 512 streaming processors in the top-end design. Memory is still dual channel, but now at DDR3-2133. As noted in the previous slides, AMD tested with DDR3-1600, so probing the memory power draw and seeing which memory OEMs decide to use is an important aspect we wish to test. In terms of compute, AMD states that Carrizo is designed to meet the full HSA 1.0 specification as released earlier this year. Barring any significant deviations in the specification, AMD expects Carrizo to be certified when the final version is ratified.
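The jump from DDR3-1600 to DDR3-2133 matters because integrated graphics tends to be sensitive to memory bandwidth. As a rough sketch, theoretical peak bandwidth is the transfer rate times the bus width times the number of channels. The 64-bit (8-byte) channel width is the standard DDR3 figure and is assumed here, and real-world throughput will be lower. The same snippet derives the compute unit count for 512 streaming processors, assuming the usual 64 streaming processors per GCN compute unit.

```python
BYTES_PER_CHANNEL = 8  # assumed standard 64-bit DDR3 channel width
SP_PER_CU = 64         # assumed streaming processors per GCN compute unit


def peak_bandwidth_gbs(mt_per_s: int, channels: int = 2) -> float:
    """Theoretical peak bandwidth in GB/s (decimal), ignoring all overheads."""
    return mt_per_s * 1e6 * BYTES_PER_CHANNEL * channels / 1e9


for speed in (1600, 2133):
    print(f"DDR3-{speed} dual channel: {peak_bandwidth_gbs(speed):.1f} GB/s")

print("Compute units for 512 SPs:", 512 // SP_PER_CU)
```

On paper, DDR3-2133 offers about a third more peak bandwidth than DDR3-1600 (roughly 34.1 GB/s versus 25.6 GB/s), which is why the memory OEMs actually ship matters so much for graphics results.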

Carrizo integrates the southbridge/IO hub into the die itself, rather than as a separate on-package design. This brings the southbridge down from 40nm+ to 28nm, saving power and removing long-distance wires between the processor and the IO hub. It also gives the CPU more control over the voltage and frequency of the southbridge than before, offering further potential power savings. Carrizo will also support three displays, allowing for potentially interesting combinations in more office-oriented products and docks. TrueAudio is also present, although few titles support it, and the quality of both audio codecs and laptop speakers leaves a lot to be desired. Hopefully we will see the TrueAudio DSP opened up in an SDK at some point, allowing more than just specific developers to work with it.

External graphics is supported by a PCIe 3.0 x8 interface, and the system relies on three main voltage rails across the SoC, which allows each part to be voltage binned separately. AMD’s Secure Processor, with cryptography acceleration, secure boot and BitLocker support, is also in the mix.
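For a sense of scale, the bandwidth of the PCIe 3.0 x8 link for external graphics can be estimated from the PCIe 3.0 signalling rate of 8 GT/s per lane and its 128b/130b line encoding. This is a back-of-the-envelope sketch of raw per-direction link bandwidth before packet and flow-control overhead, not a measured figure; the x16 row is included only as a reference point.

```python
GT_PER_S_PER_LANE = 8.0          # PCIe 3.0 signalling rate per lane
ENCODING_EFFICIENCY = 128 / 130  # PCIe 3.0 uses 128b/130b line encoding


def pcie3_bandwidth_gbs(lanes: int) -> float:
    """Approximate per-direction bandwidth in GB/s, before protocol overhead."""
    bits_per_s = GT_PER_S_PER_LANE * 1e9 * ENCODING_EFFICIENCY * lanes
    return bits_per_s / 8 / 1e9  # bits -> bytes -> GB


for lanes in (8, 16):
    print(f"PCIe 3.0 x{lanes}: {pcie3_bandwidth_gbs(lanes):.2f} GB/s per direction")
```

An x8 link works out to roughly 7.9 GB/s per direction, half of a full x16 link.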

137 Comments


Refuge - Wednesday, June 3, 2015

Built my mother a new system from her old scraps with a new A8. She loves that desktop, and when she finally put an SSD in it she loved it ten times more. The upgrade only cost her $300 for CPU, mobo and RAM. Threw it together in 45 minutes, and she hasn't had a problem with it in 2 years so far.

nathanddrews - Wednesday, June 3, 2015

I prefer the following setup:

1. Beast-mode, high-performance desktop for gaming, video editing, etc.

2. Low-power, cheap notebook/tablet for In-Home Steam Streaming and light gaming (720p) on the go.

In my use case, as long as I can load and play the game (20-30fps for RTS, 30fps+ for everything else) on a plane ride or some other scenario without AC access, I'm not really concerned with the AA or texture quality. I still want to get the best experience possible, but balanced against the cheapest possible price. The sub-$300 range is ideal for me.

AS118 - Wednesday, June 3, 2015

Yeah, that's my thing as well. High resolutions at work, and at home, 768p or 900p is just fine, especially for gaming. I also recommend AMD to friends and relatives who want laptops and stuff that can do casual gaming for cheap.

FlushedBubblyJock - Tuesday, June 9, 2015

Why go AMD when HD3000 does just fine at gaming and the added power of the Intel CPU is an awesome boost overall...

Valantar - Wednesday, June 3, 2015

1366*768 on anything larger than 13" looks a mess, but in a cheap laptop I'd rather have a 13*7 IPS for the viewing angles and better visuals than a cheap FHD TN panel - bad viewing angles especially KILL the experience of using a laptop. Still, 13*7 is pretty lousy for anything other than multimedia - it's simply too short to fit a useful amount of text vertically. A decent compromise would be moving up to 1600*900 as the standard resolution on >11" displays. Or, of course, moving to 3:2 or 4:3 displays, which would make the resolution 1366*911 or 1366*1024 and provide ample vertical space. Still, 13*7 TN panels need to go away. Now.

yankeeDDL - Wednesday, June 3, 2015

Like I said, to each his own. I have a Lenovo Z50 with the A10 7300, for which I paid less than $470. Quite frankly, I could not be happier, and I think it provides massive value for that money.

Sure, a larger battery and a better screen would not hurt, but for hustling it around the house, bringing it to a friend's or family member's house, watching movies, or playing games at native resolution, it is fantastic.

It's no road warrior, for sure (it's heavy, and the battery life doesn't go much beyond 3 hours of "serious" use), but playing at 1366*768 on something that weighs 5 pounds and costs noticeably less than $500 is quite amazing. That's impossible with Intel plus discrete graphics, as far as I know.

FlushedBubblyJock - Tuesday, June 9, 2015

Nope, HD3000 plays just fine.

Margalus - Wednesday, June 3, 2015

I'd rather have a cheaper 15.6" 1366x768 TN panel than a more expensive, smaller IPS panel.

UtilityMax - Wednesday, June 3, 2015

1366x768 is fine for movies and games. But it's a bad resolution for reading text or viewing images on the web, since you see pixels the size of moon craters.

BrokenCrayons - Thursday, June 4, 2015

I understand there are going to be differing opinions on the idea of seeing individual pixels. As far as I'm concerned, seeing individual pixels isn't a dreadful or horrific thing. In fact, to me it simply doesn't matter. I'm busy living my meat-world life and enjoying whatever moments I have with family and friends, so I don't give the ability to discern an individual pixel so much as a second thought. It is an insignificant part of my life. What isn't insignificant is the relative decline in battery life that comes from driving additional, utterly unnecessary pixels and from pushing out enough light through so many more of them. That sort of thing is marginally annoying; then again, I still just don't care that much one way or another, aside from noticing that a lot of people are very much infatuated with an insignificant, nonsense problem.