MIPS Strikes Back: 64-bit Warrior I6400 Arrives

by Stephen Barrett on September 2, 2014 10:00 AM EST

MIPS Instruction Set: 64-bit Release 6

Computer processors accomplish tasks by following instructions. The processor, however, only understands instructions in a specific "language". The language of a processor is called its Instruction Set Architecture (ISA). The code sent to a processor must be in that ISA to be understood. It's similar to what would happen if someone proceeded to give me instructions in Portuguese: I unfortunately would have no idea how to execute them. When a program or operating system is authored and compiled, the compiler is parameterized to generate the 1s and 0s of binary code using a specific ISA.

In general, there are two types of ISAs: Complex Instruction Set Computing (CISC) and Reduced Instruction Set Computing (RISC). The difference between them is, of course, their relative complexity. A RISC ISA generally contains significantly fewer instructions, each far simpler than those of a CISC ISA.

Despite its increased complexity, CISC actually predates RISC and was only named retroactively. CISC ISAs were a necessity when low level code (assembly) was often authored by hand, and compilation was crippled by dramatically less powerful compilers than those available today. Having higher level instructions in the ISA, such as looping, allowed simple compilers to extract sufficient performance and human assembly authors to write programs. The most popular CISC ISA ever written is the x86 ISA used in Intel, AMD, and VIA processors. Interestingly, these processors now use dedicated decoding hardware to actually translate CISC instructions into RISC instructions that are executed internally.

RISC ISAs push much of the instruction complexity into the compiler. Instead of using instruction decode circuits inside the CPU core to translate complex instructions into simple ones, RISC processors operate directly on the simple instructions provided by the compiler. This benefit is somewhat offset because code compiled for RISC ISAs is often larger; it may take multiple RISC instructions to do the work of one CISC instruction. This mirrors one of the first lessons taught in computer science: there is often a tradeoff between storage and efficiency. If there is a desire for increased efficiency, precompute items ahead of time and then store them. If you need to save storage (or reduce the memory footprint), compute items on the fly.
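The storage-versus-efficiency tradeoff can be illustrated with a small sketch. Counting the set bits in a byte is used here purely as a stand-in workload; the function and table names are hypothetical and do not come from any MIPS or compiler tooling.

```python
def popcount_on_the_fly(byte: int) -> int:
    """Count set bits by looping: no extra storage, more work per call."""
    count = 0
    while byte:
        count += byte & 1
        byte >>= 1
    return count


# Precompute every answer once: 256 stored entries buy a single lookup per call.
POPCOUNT_TABLE = [popcount_on_the_fly(b) for b in range(256)]


def popcount_precomputed(byte: int) -> int:
    """Return the stored answer: more memory, less work per call."""
    return POPCOUNT_TABLE[byte]
```

Both functions return the same result for any value from 0 to 255; the only difference is whether the cost is paid in memory up front or in computation on every call.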

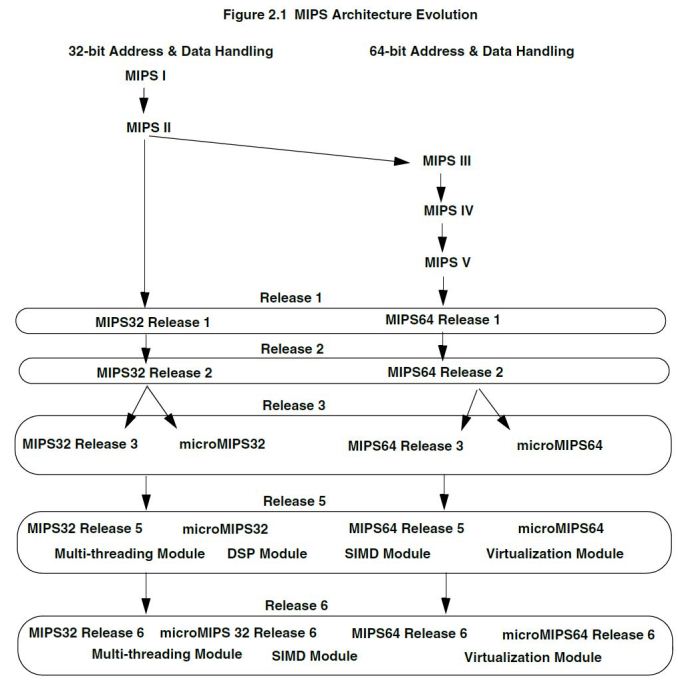

The most popular RISC ISA ever written is the ARM ISA. The MIPS ISA, like ARM, is RISC. It has been revised several times since its inception in 1985. The first five releases are named according to Roman numerals I through V, and each was a superset of the last. In 1999, MIPS announced a large revision of the ISA which deprecated the old hierarchical I through V scheme and instead focused on two ISAs: MIPS32 and MIPS64.

Release 6 arrived in 2014, and the I6400 is the first CPU utilizing the new ISA. I won’t go through all the changes in the ISA, but the most significant is a culling of the instructions. Significant work was done to simplify the ISA by removing infrequently used instructions, in particular those that overlapped with Imagination’s PowerVR GPUs. Additional instructions were also added specifically targeting today’s applications like web browsers. The fruit of these instructions was seen recently when Google Chrome’s V8 JavaScript engine added experimental support for MIPS64 release 6 in July.

In the MIPS programmer’s guide the release 6 ISA is actually referred to as MIPS3264 release 6. This naming is not by accident, as the MIPS64 ISA is a direct superset of the MIPS32 ISA. In contrast to AMD64 (x86-64), there are no "operating modes" that dictate the bitness of instructions executed on the CPU; instead there is an entirely new set of instructions specifically for 64-bit. Registers inside the CPU are all 64-bit, and when a 32-bit instruction executes, results saved in registers are sign-extended to the full 64 bits of space. This means there is no mode switching, and 32-bit and 64-bit applications can coexist and even be executed using the same hardware resources like registers (more on this later).
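To make the sign-extension behavior concrete, here is a minimal sketch that models a 32-bit add whose result lands in a 64-bit register. It is plain integer arithmetic, not an emulator; the helper names `sign_extend_32_to_64` and `add32_into_64bit_register` are hypothetical and chosen only for illustration.

```python
MASK32 = 0xFFFFFFFF
SIGN_BIT32 = 0x80000000
UPPER32_ONES = 0xFFFFFFFF00000000


def sign_extend_32_to_64(value: int) -> int:
    """Copy bit 31 of a 32-bit value into bits 32..63 of a 64-bit register image."""
    value &= MASK32
    if value & SIGN_BIT32:
        return value | UPPER32_ONES
    return value


def add32_into_64bit_register(a: int, b: int) -> int:
    """Model a 32-bit add: wrap the sum to 32 bits, then sign-extend it."""
    return sign_extend_32_to_64((a + b) & MASK32)


# A small positive result leaves the upper 32 bits clear.
small = add32_into_64bit_register(1, 2)

# A result with bit 31 set fills the upper 32 bits with ones.
wrapped = add32_into_64bit_register(0x7FFFFFFF, 1)
```

Under this model, 32-bit code always sees a consistent lower half of the register, while the upper half is a pure function of bit 31, which is one way to see why no separate operating mode is required.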

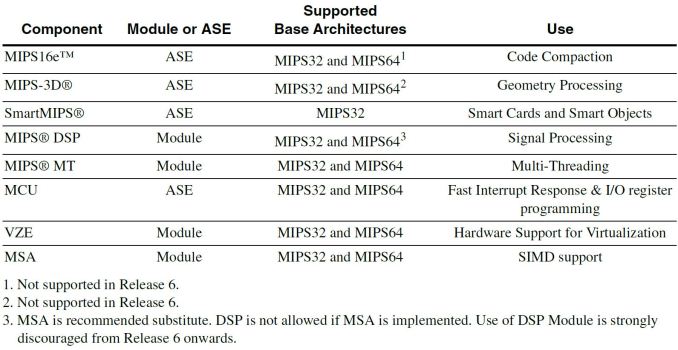

The MIPS ISA contains several optional instructions called Application Specific Extensions. These rely on optional portions of the CPU core that a licensee may or may not implement. Additionally, a MIPS CPU has optional modules that can enhance performance when paired with certain instructions.

Release 6 drops the legacy MIPS16e ASE as well as the redundant 3D ASE now that Imagination offers GPUs alongside MIPS CPUs.

MIPS CPUs in Mobile Devices

While MIPS CPUs are quite popular in networking equipment and many other embedded industries, consumers will likely only experience one firsthand when it's integrated into an Android handset. Since Android 4.0, Google has supported three ISAs: x86, ARM, and MIPS. Several devices have shipped running MIPS processors, most notably the low-cost Novo 7 tablet. MIPS devices will continue to be low-cost alternatives for now, but low-cost devices have the largest volume. That volume should eventually help MIPS push app developers to address its #1 problem: compatibility.

Android applications are either written in Java, then compiled on the device to the specific required ISA before running (a process called JIT compilation), or written with the Android Native Development Kit (NDK) to target a specific ISA. Apps written in Java can therefore run on any ISA that Android itself supports, including MIPS. Apps written with the NDK (many of which exist, especially games) cannot run on anything but the specific ISA they were written for. The Android NDK does allow packaging multiple ISA-specific binaries into a single app, but with the vast majority of Android devices using ARM processors and therefore the ARM ISA, a multi-ISA NDK app is simply uncommon.

What does this mean for an end user? There are many Android apps that simply won’t run if you have a MIPS processor in your device. Intel has the same NDK compatibility problem, but with their considerably larger engineering resources, Intel implemented a layer that translates ARM ISA applications to the Intel x86 ISA (albeit at a performance penalty). Until MIPS implements the same or ships enough volume to convince Android app developers to put in some extra work, a MIPS Android device will unfortunately be a second class experience.

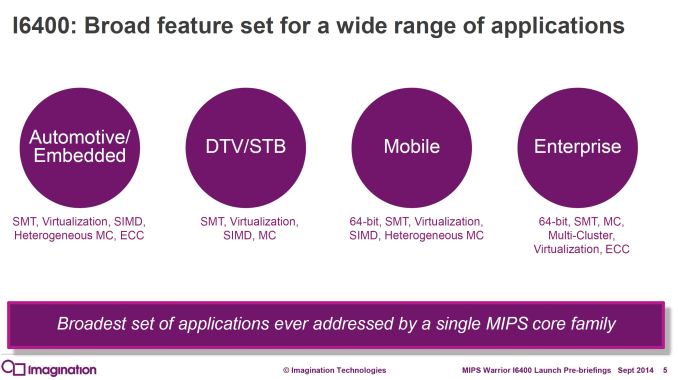

Despite some existing Android app compatibility woes, the MIPS I6400 CPU contains some interesting technology designed to address many more markets than handsets. In fact, Android usage of MIPS processors is really a minor part of the MIPS business. A few slides from the MIPS announcement indicate just how many other markets they are targeting.

84 Comments


Flunk - Tuesday, September 2, 2014 - link

Competition is always good, it will be interesting to see how these perform in real devices. The performance/power consumption offered by modern ARM processors is difficult to compete with.

alexvoica - Tuesday, September 2, 2014 - link

I6400 offers better performance at lower power and reduced area vs. the competition. I have included some benchmarks in my article http://blog.imgtec.com/mips-processors/meet-mips-i...

name99 - Tuesday, September 2, 2014 - link

I'm sorry but that article appears to be marketing crap.

You state "Preliminary results for I6400 show that adding a second thread leads to performance increases of 40-50% on SPECint or CoreMark". So adding a second thread speeds up the SINGLE-THREADED version of SPEC? That's a neat trick.

Likewise you happily claim that multi-threading makes a "big difference" to web browsing, something that will come as news to the many engineers on the WebKit, Blink and IE teams who have sweated blood over this without much to show for their efforts.

On your blog you can post whatever marketing fluff you like, but how about on AnandTech you limit yourself to actual numbers of real benchmarks?

(Sorry to be cruel but, christ, throwing raw ads into the comment stream and pretending they're informed comment pisses me off no end.)

alexvoica - Tuesday, September 2, 2014 - link

I might not be as versed as you are and excuse me if I'm wrong (someone correct me if I am) but, as far as I know, SPEC supports multi-threading. Multi-threading really does improve performance - but don't take it from me, take it from our customers who are already using it in both 32- and 64-bit MIPS-based designs: Broadcom, Cavium, Lantiq - I could go on.

I don't really understand how you can claim that my article is marketing fluff. It is marketing, yes. But doesn't every company have an official release? And doesn't part of that release include competitive positioning?

Let's not be behind-the-screen aggressive for behind-the-screen aggressiveness's sake. We have already offered a lot more information than our competitors, including benchmark data in CoreMark, DMIPS and SPECint.

name99 - Tuesday, September 2, 2014 - link

"We have already offered a lot more information than our competitors, including benchmark data in CoreMark, DMIPS and SPECint."

Then why is the post full of claims, and basically numberless graphs, but not actual tables of numbers? Ooh, we're 1.3x faster than "competing CPU" --- that's helpful.

There's more information available in any AnandTech phone review.

Say what you like about nVidia, at least their HotChips Denver marketing slide gave numbers of a sort for Denver, compared to Baytrail, Krait-400, iPhone 5S and Haswell, all for a range of benchmarks (DMIPS, SPECInt2K and SPECFP2K, AnTuTu, Geekbench, Google Octane and some memory benchmarks). I think they were wrong to omit (definitely) SunSpider and (I care less) Kraken because SunSpider in particular gives a good feel for single-threaded performance on a large real-world code base. (SPECInt2K is a reasonable proxy, but stresses the uncore more than is probably usual for mobile devices.) Octane (and Kraken) are less interesting IMHO because they synthesize a workload that is vastly more parallelized than most actual websites.

(Of course I'd expect you to do better than nVidia, especially since you're the new kid on the block.

That means, for example, real numbers not scaled percentages;

it means running the benchmarks honestly --- using the optimal compiler plus flags for each device;

it means telling the public what those flags were so they can reproduce if necessary;

it means not playing games with cooling systems that aren't going to be used on a real device, or an OS power driver that does not match what will ship in real devices;

and it means using appropriate best of breed devices --- eg it's a bit slimy to use an iPhone 5S [1.3GHz] rather than iPad Air [1.4GHz] unless you have some damn good reason (like you're comparing against the phone version of your chip, not the tablet version.)

The code to be compiled to perform the SPECInt benchmark runs is not threaded. Sure, if your compiler is smart enough to auto-parallelize that code, it can go right ahead. Since no-one else's compiler has managed to achieve much by doing that, I kinda doubt MIPS has made a breakthrough here...

Multi-threading improves performance IF YOUR CODEBASE IS THREADED. My point is that the market that's being implied here (phones, tablets) is NOT substantially threaded.

There absolutely are markets (in many of which MIPS already does well, things like networking or cellular) where threading is important and of benefit. That doesn't change the fact that phones and tablets are not such a market, and pretending otherwise is not helpful to anyone.

alexvoica - Tuesday, September 2, 2014 - link

This is where you are wrong, no matter how much your finger gets stuck on caps lock. Programming for multithreading is not radically different than programming for multicore. In fact, Linux-SMP operating systems (e.g. Android) will see a dual-threaded CPU as two physical cores.

Regarding your comments about benchmarks, I invite you to show me real, concrete numbers from our CPU IP competitor. We have said 5.6 CoreMark and 3.0 DMIPS per MHz. Now show me the data - and I am not interested in semiconductor manufacturers who are not our competitors but IP vendors.

The comparisons were made based on similar core configurations to ensure accuracy; how would you be able to reproduce them - are you an ARM licensee?

Wilco1 - Tuesday, September 2, 2014 - link

You've shown some numbers but not explained how they were made. As I said in my other post, MIPS uses a trick to get its CoreMark score, so any competitor result without the same trick will obviously look bad.

And this is the issue with benchmarketing: unless it is possible to reproduce the score yourself, it is hard to believe any vendor-supplied scores.

name99 - Tuesday, September 2, 2014 - link

(a) Thanks for explaining SMT to stupid old me who's been in a coma for the past fifteen years and has never heard of the concept. Not sure WTF it has to do with my actual point about the dearth of threaded APPLICATIONS...

(b) I'm not the guy trying to sell a CPU to the rest of the world, so I'm not sure why it's my job to provide numbers, but OK, here we go.

iPhone 5S at 1.3GHz gets a geekbench-singlecore rating of about 1300, and a sunspider rating (with iOS7) of 416. What do you have as closest equivalent numbers?

DMIPS --- give me a break. No-one cares about that because it tells you precisely nothing about anything hard that the CPU does. Coremark's slightly more interesting, but why don't you give some comparable CoreMark/MHz values so we can see what you consider to be your competitors.

I see, for example, that Exynos quad A9 claims a value of 15.89 and a dual-core A15 claims 9.36. Would you consider those competitors?

(As comparison, a single core A53 (at least the QC Snapdragon 410 variant) gets 3.7 according to AnandTech --- but 3.0 according to other sources so??? A57 is supposed to get 3.9, but who knows how trustworthy that number is.)

Assuming your 5.6 number is for multi-threaded operation, I'm going to do the naive thing and say that that tells me the single-threaded value is 2.8, which is apparently worse than an A53. If you don't like that arithmetic, then give us the single-threaded benchmark numbers, rather than trying to persuade us that phones are a great example of user-level multi-threaded software.

alexvoica - Wednesday, September 3, 2014 - link

Please understand that CoreMark does not work like that for multi-threading vs multicore.

If you look at their website https://www.eembc.org/coremark/, PThreads refer to performance for both cores and/or threads - they do not specifically say which is which.

ARM scores are for multicore versions - this is why the CoreMark per MHz per core number is obtained by dividing that number by the number of PThreads. For example, for one Cortex-A15 you have 9.36 / 2 = 4.68 CoreMark/MHz. A single core proAptiv - which is a single-threaded design too - offers 5.1 CoreMark/MHz.

The number we've quoted for I6400 is 5.6 CoreMark/MHz. For multithreading however, you do not divide by number of threads since these are not individual CPUs but threads part of a single core. The score for a single core, single threaded I6400 is not half of 5.6. We specify very clearly in the press release/blog article that adding another thread improves performance by 40-50%, so your numbers are incorrect.

I still don't understand why you are pushing your agenda so aggressively and jump to conclusions since the data is clear. The author of the article chose to quote DMIPS, but I believe we have presented a valid combination of benchmarks and scenarios. Again, we are not competing with silicon manufacturers - some of them are licensees - but with other IP vendors.

Wilco1 - Wednesday, September 3, 2014 - link

I don't agree Dhrystone and CoreMark are valid benchmarks for CPU comparisons - both are easily cheated. You claim some great results but you know very well these are not indicative of actual CPU performance. Both benchmarks use special compiler tricks (like I mentioned in other posts) that only speedup these benchmarks, but nothing else. I bet SPEC scores are not nearly as good.

Once again eg. NVidia actually posted real scores for lots of benchmarks of their SoCs, including SPEC. Do the same rather than playing these benchmarketing games and you'll gain a lot more credibility.