The Samsung 950 Pro PCIe SSD Review (256GB and 512GB)

by Billy Tallis on October 22, 2015 10:55 AM EST

NVMe Recap

Before diving into our results, I want to spend a bit of time talking about NVMe (Non-Volatile Memory Express) as a command set for PCIe-based storage. NVMe has been in the OEM and enterprise space for over a year, but it's still very much a new thing for end-user system builders, because NVMe drives were not available through regular retail channels until now. So let's start by recapping what NVMe is, how it works, and why it is such an important improvement over AHCI for SSDs.

Traditional SATA drives, such as mechanical hard drives or SSDs, are connected to the system through a controller sometimes referred to as a Host Bus Adapter (HBA) or Host Controller. This controller is typically provided by the system's south bridge, which handles input/output and talks to the main processor. On the "upstream" side, from the HBA to the main processor, is a PCI Express-like connection; on the downstream side, from the chipset to the drives, are SATA links. Almost all SATA controllers, including the ones built into motherboard chipsets, adhere to the Advanced Host Controller Interface (AHCI) standard, which allows them all to work with the same class driver. SATA drives connected through such controllers are accessed using the ATA command set, which provides a standard language for all drives to use.

Early PCIe SSDs, on the other hand, either implemented proprietary interfaces requiring custom drivers, or implemented the older AHCI and ATA command set and so appeared to the OS to be a SATA drive in every way (except that they were not bound by SATA's 6Gb/s speed limit). As a transition mechanism to smooth the rollout of PCIe SSDs, using AHCI over PCIe was a reasonable short-term solution; however, over time AHCI itself became a bottleneck, limiting what the PCIe interface and newer SSDs were capable of.

| | NVMe | AHCI |
|---|---|---|
| Latency | 2.8 µs | 6.0 µs |
| Maximum Queue Depth | Up to 64K queues with 64K commands each | Up to 1 queue with 32 commands each |
| Multicore Support | Yes | Limited |
| 4KB Efficiency | One 64B fetch | Two serialized host DRAM fetches required |
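To put the queue figures in the table into perspective, the arithmetic below multiplies queues by commands per queue to get an upper bound on commands in flight, and compares the two latency figures. It is only a back-of-the-envelope sketch that assumes "64K" means 65,536 (2^16); in practice drivers create far fewer queues than the maximum.

```python
# Back-of-the-envelope comparison of the queue limits and latencies in the table.
# Assumption: "64K" is read as 65,536 (2**16).
NVME_QUEUES = 2**16          # up to 64K queues
NVME_CMDS_PER_QUEUE = 2**16  # up to 64K commands per queue
AHCI_QUEUES = 1              # a single command queue
AHCI_CMDS_PER_QUEUE = 32     # 32 commands in that queue


def max_outstanding(queues: int, depth: int) -> int:
    """Upper bound on the number of commands that can be in flight at once."""
    return queues * depth


nvme_total = max_outstanding(NVME_QUEUES, NVME_CMDS_PER_QUEUE)
ahci_total = max_outstanding(AHCI_QUEUES, AHCI_CMDS_PER_QUEUE)

latency_nvme_us = 2.8
latency_ahci_us = 6.0

print(f"NVMe: {nvme_total:,} commands in flight")  # NVMe: 4,294,967,296 commands in flight
print(f"AHCI: {ahci_total:,} commands in flight")  # AHCI: 32 commands in flight
print(f"AHCI/NVMe latency ratio: {latency_ahci_us / latency_nvme_us:.2f}x")  # 2.14x
```

The latency ratio is the more practically relevant number for a desktop: by these figures an AHCI round trip takes a little over twice as long, while the queue limits mostly matter to heavily threaded server workloads.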

To allow SSDs to take better advantage of the performance possible through PCI Express, a new host controller interface and command set called NVMe was developed and standardized. NVMe's chief advantages are lower-latency communication between the SSD and the CPU, and lower CPU usage when communicating with the SSD (though the latter usually only matters in enterprise scenarios). The big downside is that it's not backwards-compatible: to use an NVMe SSD you need an NVMe driver, and to boot from an NVMe drive your motherboard's firmware needs NVMe support. The NVMe standard has now been around long enough that virtually every consumer device with an M.2 slot providing PCIe lanes should have NVMe support, or a firmware update available to add it, so booting from the 950 Pro should pose no particular trouble. (As odd as it sounds, some older enterprise and workstation systems may not have an NVMe update, so users of those systems should check with their manufacturer.)

Meanwhile, on the software side, Windows 8.1 and Windows 10 include a built-in NVMe driver that implements all the basic NVMe functionality necessary for everyday use. But basic is all it is; the default NVMe class driver lacks some features needed for tasks like updating drive firmware and accessing certain diagnostic information. For that reason, and to accommodate users who can't update to a version of Windows with a Microsoft-provided NVMe driver, most vendors are providing a custom NVMe driver. Samsung provided a beta version of their driver, as well as a beta of their SSD Magician software that now supports the 950 Pro (but not their previous M.2 drives for OEMs). Almost all of SSD Magician's features require Samsung's NVMe driver when used with the 950 Pro.

Finally, some of our benchmarking tools are affected by the switch to NVMe. Between most of our tests, we wipe the drive back to a clean state. For SATA drives and PCIe drives using AHCI, this is accomplished using the ATA security features (Secure Erase). The NVMe command set instead has a Format NVM command that can be told to perform a secure erase, producing the same result but requiring a different tool to issue it. Likewise, our usual tools for recording drive performance during the AnandTech Storage Bench tests don't work with NVMe, so we're using a different tool to capture that data, but the same tools to process and analyze it.
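As an illustration of why the two wipe procedures need different tools, the sketch below builds (but deliberately does not run) the commands one might use on Linux with the nvme-cli and hdparm utilities. This is an assumption about tooling, not necessarily what our testbed uses; the helper `wipe_commands` and the throwaway password are hypothetical. NVMe needs a single Format NVM command with Secure Erase Settings set to 1 (user data erase), while ATA Secure Erase requires setting a temporary user password first.

```python
import shlex

# Hypothetical throwaway password; ATA Security Erase refuses to run without one.
TEMP_PASSWORD = "p"


def wipe_commands(device: str) -> list[list[str]]:
    """Build (without executing) the argv lists that would secure-erase `device`.

    Hypothetical helper: NVMe namespaces (e.g. /dev/nvme0n1) get one nvme-cli
    Format NVM call; everything else is treated as an ATA (SATA/AHCI) drive.
    """
    name = device.rsplit("/", 1)[-1]
    if name.startswith("nvme"):
        # Secure Erase Settings = 1 requests a user data erase during the format.
        return [["nvme", "format", device, "--ses=1"]]
    # ATA path: set a temporary user password, then issue the security erase.
    return [
        ["hdparm", "--user-master", "u", "--security-set-pass", TEMP_PASSWORD, device],
        ["hdparm", "--user-master", "u", "--security-erase", TEMP_PASSWORD, device],
    ]


# Print the command lines only; running them would destroy all data on the drive.
for dev in ("/dev/nvme0n1", "/dev/sdb"):
    for argv in wipe_commands(dev):
        print(shlex.join(argv))
```

On the ATA side, the erase will also be refused if the drive reports its security state as frozen, which some system firmware sets at boot.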

Measuring PCIe SSD Power Consumption

Our SSD testbed is now equipped to measure the power consumption of PCIe cards, and we're using an adapter to extend that capability to PCIe M.2 drives. M.2 drives run on 3.3V whereas most SATA devices use 5V, but we account for that difference by reporting power draw in watts or milliwatts rather than current. This review is our first look at the power draw behavior of a PCIe drive and our first opportunity to explore the power management capabilities of PCIe drives. To offer some points of comparison, we're re-testing our samples of the Samsung SM951 and XP941, earlier PCIe M.2 drives that were sold to OEMs but not offered in the retail channel.
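Because power is the product of voltage and current (P = V × I), reporting in watts makes drives on the 3.3V and 5V rails directly comparable, whereas raw current readings would not be. The sketch below uses made-up current values purely for illustration; `power_mw` is a hypothetical helper, not part of our test software.

```python
def power_mw(volts: float, amps: float) -> float:
    """Power in milliwatts from supply voltage and measured current (P = V * I)."""
    return volts * amps * 1000.0


# Hypothetical readings for illustration only: the same 100 mA current
# corresponds to different power on a 3.3 V M.2 rail and a 5 V SATA rail.
m2 = power_mw(3.3, 0.100)
sata = power_mw(5.0, 0.100)
print(f"M.2 @ 3.3 V: {m2:.0f} mW")   # M.2 @ 3.3 V: 330 mW
print(f"SATA @ 5 V: {sata:.0f} mW")  # SATA @ 5 V: 500 mW
```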

This analysis has turned up some surprises. For starters, the 950 Pro's power consumption noticeably increases as it heats up, indicating that temperature-dependent leakage is a measurable factor here; I've seen its idle power climb by as much as 4.5% from power-on to equilibrium. Pointing a fan at the drive quickly brings the power back down. For this review, I made no special effort to cool the 950 Pro. Samsung ships it without a heatsink and assures us that it has built-in thermal management capabilities, so I tested it as-is in our standard case with the side panel removed.

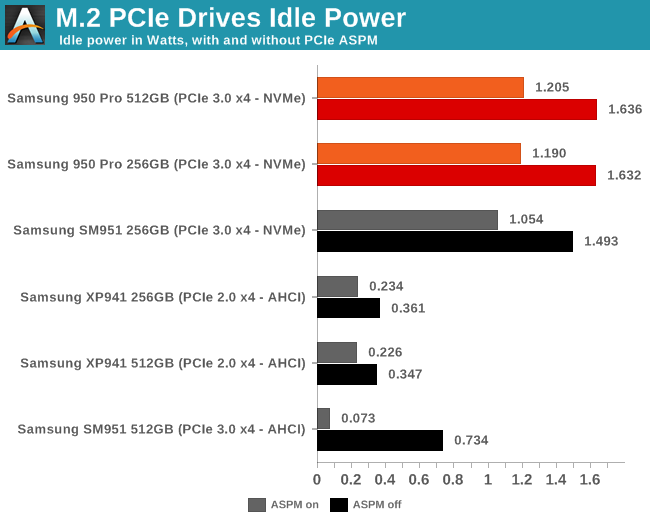

It appears that Samsung's NVMe drives have much higher idle power consumption than the AHCI drives, even when using the same UBX controller. It's clear that our system configuration is not putting the 950 Pro or the SM951 with NVMe into a low-power state when idle, but the cause is not. The idle power levels in the graph are reached even before the operating system has loaded, and they don't improve once Samsung's NVMe driver loads, which points to an issue with either the drive firmware or the motherboard firmware.

Furthermore, we have a clear indication of at least one motherboard bug. PCI Express Active State Power Management (ASPM) is a feature that allows a PCIe link to be slowed down to save power, which is quite useful for an SSD that experiences long idle periods. ASPM can be activated in just the downstream direction (CPU to device) or in both directions, and the latter is what offers significant power savings for an SSD. Our testbed motherboard offers options to configure ASPM, but with the more aggressive bidirectional ASPM level enabled, the system locks up very frequently. I tried to test ASPM on my personal Haswell-based machine, which has a different motherboard from a different vendor, but it didn't offer any option to enable ASPM.
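For readers on Linux, ASPM is not only a firmware menu option: the kernel reports its ASPM policy in `/sys/module/pcie_aspm/parameters/policy`, listing the available policies with the active one in brackets. The sketch below parses that file; `active_aspm_policy` is a hypothetical helper, and the sample string is illustrative rather than captured from our testbed. It is offered only as a way to check the setting, not as a workaround for the lockups described above.

```python
from pathlib import Path
from typing import Optional

# Linux-only sysfs file listing the available ASPM policies.
POLICY_FILE = Path("/sys/module/pcie_aspm/parameters/policy")


def active_aspm_policy(text: str) -> Optional[str]:
    """Return the bracketed (currently active) policy name, or None if absent."""
    for token in text.split():
        if token.startswith("[") and token.endswith("]"):
            return token[1:-1]
    return None


# Illustrative file contents, not captured from our testbed.
sample = "[default] performance powersave"
print(active_aspm_policy(sample))  # default

# On a Linux machine where the file exists, report the real value.
if POLICY_FILE.exists():
    print("This system:", active_aspm_policy(POLICY_FILE.read_text()))
```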

Using a slightly older Ivy Bridge machine with an Intel motherboard, I was able to confirm that the 950 Pro doesn't have any issues with ASPM and that enabling it does offer significant power savings. However, I wasn't able to dig for further power savings on that system, so all of the power measurements reported with the performance benchmarks in this review were taken on our usual testbed with ASPM off, as in all previous reviews.

Motherboard power management bugs are tragically common in the desktop space, and devices that incorrectly implement ASPM are common enough that it is seldom enabled by default. As PCIe peripherals of all kinds become more common, the industry is going to have to shape up in this department, but for now consumers should not assume that ASPM will work correctly out of the box.

142 Comments


Der2 - Thursday, October 22, 2015

Wow. The 950. A BEAST in the performance SHEETS.

ddriver - Thursday, October 22, 2015

Sequential performance is very good, but I wonder how come random access shows no significant improvements.

dsumanik - Thursday, October 22, 2015

Your system is only as fast as the slowest component.

Honestly, ever since the original X25, the only performance metric I've found to have a real-world impact on system performance (aside from large file transfers) with regard to boot times, games, and applications is the random write speed of a drive.

If a drive has solid sustained random write speed, your system will seem to be much more responsive in most of my usage scenarios.

The 950 Pro kind of failed to impress in this dept as far as I'm concerned. While I am glad to see the technology moving in this direction, I was really looking for a generational leap here with this product, which didn't seem to happen, at least not across the board.

Unfortunately, I think I will hold off on any purchases until I see the technology mature another generation or two, but hey, if you are a water-cooling company, there is a market opportunity for you here.

Looks like until some further die shrinks happen, NVMe is going to be HOT.

AnnonymousCoward - Thursday, October 22, 2015

> Your system is only as fast as the slowest component.

Uhh no. Each component serves a different purpose.

cdillon - Thursday, October 22, 2015

>> Your system is only as fast as the slowest component.
>
> Uhh no. Each component serves a different purpose.

Memory, CPU, and I/O resources need to be balanced if you want to reach maximum utilization for a given workload. See "Amdahl's Law". Saying that it's "only as fast as the slowest component" may be a gross over-simplification, but it's not entirely wrong.

xenol - Wednesday, November 4, 2015

It still highly depends on the application. If my workload is purely CPU-based, then all I have to do is get the best CPU.

I mean, for a jack-of-all-trades computer, sure. But I find that sort of computer silly.

xype - Monday, October 26, 2015

Your response makes no sense.

III-V - Thursday, October 22, 2015

I find it odd that random access and IOPS haven't improved. Power consumption has gone up too.

I'm excited for PCIe and NVMe going mainstream, but I'm concerned the kinks haven't quite been ironed out yet. Still, at the end of the day, if I were building a computer today with all new parts, this would surely be what I'd put in it. Er, well maybe -- Samsung's reliability hasn't been great as of late.

Solandri - Thursday, October 22, 2015

SSD speed increases come mostly from increased parallelism. You divide up the 10 MB file into 32 chunks and write them simultaneously, instead of 16 chunks.

Random access benchmarks are typically done with the smallest possible chunk (4k), thus eliminating any benefits from parallel processing. The AnandTech benchmarks are a bit deceptive because they average QD=1, 2, 4 (queue depth of 1, 2, and 4 parallel data read/writes). But at least the graphs show the speed at each QD. You can see the 4k random read speed at QD=1 is the same as most SATA SSDs.

It's interesting that the 4k random write speeds have improved substantially (30 MB/s read, 70 MB/s write is typical in SATA SSDs). I'd be interested in an in-depth AnandTech feature delving into why reads seem to be stuck at below 50 MB/s, while writes are approaching 200 MB/s. Is there a RAM write-cache on the SSD and the drive is "cheating" by reporting the data as written when it's only been queued in the cache? Whereas reads still have to wait for completion of the measurement of the voltage on the individual NAND cells?

ddriver - Thursday, October 22, 2015

It is likely Samsung is holding random access back artificially, so that they don't cannibalize their enterprise market. A simple software change, a rebrand and you can sell the same hardware at much higher profit margins.