NVIDIA Develops NVLink Switch: NVSwitch, 18 Ports For DGX-2 & More

by Ryan Smith on March 27, 2018 1:20 PM EST

Back in 2016 when NVIDIA launched the Pascal GP100 GPU and associated Tesla cards, one of the consequences of their increased server focus for Pascal was that interconnect bandwidth and latency became an issue. Having long relied on PCI Express, NVIDIA’s goals for their platform began outpacing what PCIe could provide in terms of raw bandwidth, never mind ancillary issues like latency and cache coherency. As a result, for their compute focused GPUs, NVIDIA introduced a new interconnect, NVLink.

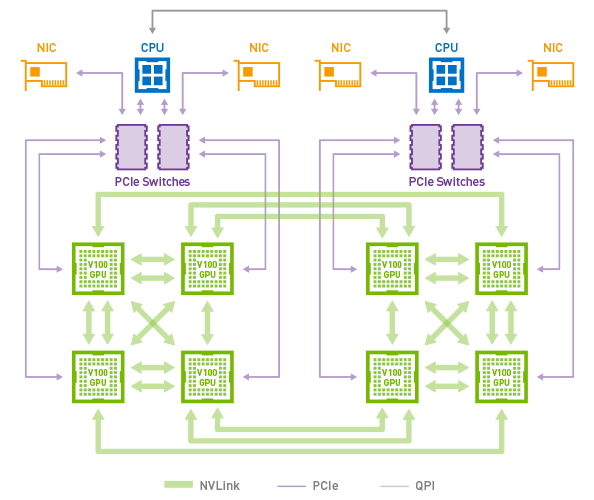

With 4 (and later 6) NVLinks per GPU, these links could be teamed together for greater bandwidth between individual GPUs, or lesser bandwidth but still direct connections to a greater number of GPUs. In practice this limited the size of a single NVLink cluster to 8 GPUs in what NVIDIA calls a Hybrid Mesh Cube configuration, and even then it’s a NUMA setup where not every GPU could see every other GPU. Past that, if you wanted a cluster larger than 8 GPUs, you’d need to resort to multiple systems connected via InfiniBand or such, losing some of the shared memory and latency benefits of NVLink and closely connected GPUs.

8x Tesla V100s in a Hybrid Mesh Cube Topology

Going with an even larger number of NVLinks on a single GPU is increasingly impractical. So instead NVIDIA is doing the next best thing – and taking the next step as an interconnect vendor – by producing an NVLink switch chip.

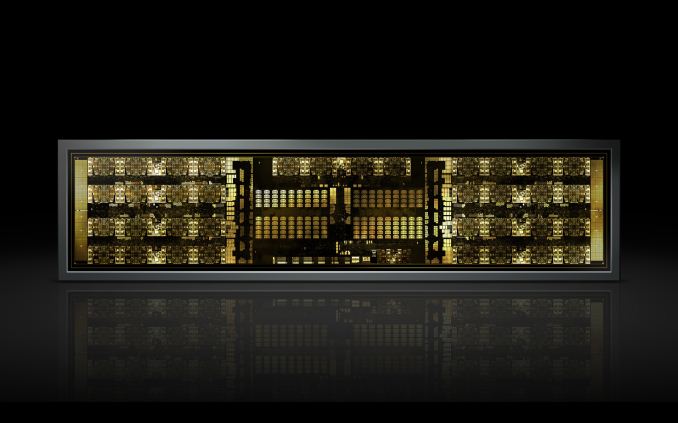

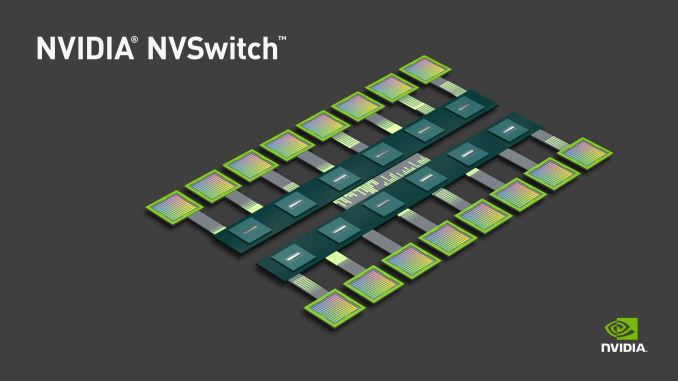

The switch, aptly named NVSwitch, is designed to enable much larger clusters of GPUs by connecting GPUs through one or more switches. A single switch has a whopping 18 full-bandwidth ports – three times that of a GV100 GPU – with all of the ports in a fully connected crossbar. As a result, a single switch has an aggregate of 900GB/sec of bidirectional bandwidth.
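To make the bandwidth figures concrete, here is a small back-of-the-envelope sketch in Python. The port count, the 900GB/sec aggregate, and the 6 links on GV100 come from the article; the per-port rate is simply derived by division, and the GV100 total assumes each GPU link runs at the same rate as a switch port, which the "full-bandwidth ports" wording suggests but does not spell out.

```python
# Figures quoted in the article.
NVSWITCH_PORTS = 18            # ports per NVSwitch
NVSWITCH_AGGREGATE_GBPS = 900  # aggregate bidirectional bandwidth, GB/sec
GV100_LINKS = 6                # NVLinks per GV100 GPU

# Derived value (not quoted directly): bidirectional bandwidth per port.
per_port_gbps = NVSWITCH_AGGREGATE_GBPS / NVSWITCH_PORTS

# Assumption: a GPU link runs at the same rate as a switch port.
gv100_aggregate_gbps = GV100_LINKS * per_port_gbps

# How many times more ports the switch has than a single GPU.
port_ratio = NVSWITCH_PORTS / GV100_LINKS

print(f"Per-port bandwidth: {per_port_gbps:.0f} GB/sec")
print(f"GV100 aggregate (6 links): {gv100_aggregate_gbps:.0f} GB/sec")
print(f"Switch ports vs. GPU links: {port_ratio:.0f}x")
```

Under those assumptions, one switch carries as much NVLink bandwidth as three fully wired GV100 GPUs.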

The immediate goal with NVSwitch is to double the number of GPUs that can be in a cluster, with the switch easily allowing for a 16 GPU configuration. But more broadly, NVIDIA wants to take NVLink lane limits out of the equation entirely, as through the use of multiple switches it should be possible to build almost any kind of GPU topology. Consequently the NVSwitch is something of a “spare no expense” project for the company; this is embodied by the transistor count of the chip, which weighs in at around 2 billion transistors. This makes it larger than even NVIDIA’s entry-level GP108 GPU, and considering this is just for a switch, all of this amounts to a somewhat crazy number of transistors.

Unfortunately while NVIDIA has announced the bandwidth numbers for the NVSwitch, they aren’t yet talking about latency. The introduction of a switch will unquestionably add latency, so it will be interesting to see just what the penalty is like. Staying on-system (and with short traces) means it should be low, but it would diminish the latency advantages of NVLink somewhat. Nor for that matter have power consumption or pricing been announced.

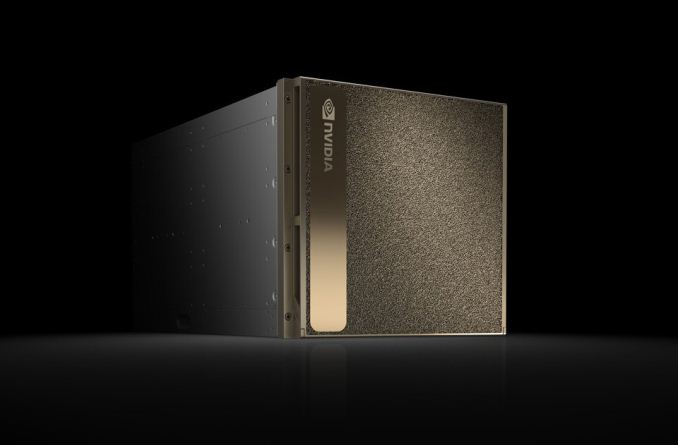

DGX-2: 16 Tesla V100s In a Single System

Unsurprisingly, the first system to ship with the NVSwitch will be a new NVIDIA system: the DGX-2. The big sibling to NVIDIA’s existing DGX-1 system, the DGX-2 incorporates 16 Tesla V100 GPUs. As NVIDIA likes to tout, this means it offers a total of 2 PFLOPs of compute performance in a single system, albeit via the more use-case constrained tensor cores.

NVIDIA hasn’t published a complete diagram of the DGX-2’s topology yet, but the high level concept photo provided indicates that there are actually 12 NVSwitches (216 ports) in the system in order to maximize the amount of bandwidth available between the GPUs. With 6 ports per Tesla V100 GPU, this means that the Teslas alone would be taking up 96 of those ports if NVIDIA has them fully wired up to maximize individual GPU bandwidth within the topology.
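The port accounting in this paragraph can be checked with a few lines of Python. This is only a sketch of the arithmetic: the switch, port, GPU, and link counts are taken from the article, while what the remaining ports are used for is not stated there, so the code just reports the leftover count without assigning it a role.

```python
# Counts quoted in the article.
SWITCHES = 12          # NVSwitches in the DGX-2 concept photo
PORTS_PER_SWITCH = 18  # ports per NVSwitch
GPUS = 16              # Tesla V100 GPUs in the system
LINKS_PER_GPU = 6      # NVLinks per Tesla V100

total_switch_ports = SWITCHES * PORTS_PER_SWITCH         # 216
gpu_facing_ports = GPUS * LINKS_PER_GPU                  # 96
remaining_ports = total_switch_ports - gpu_facing_ports  # 120

print(f"Total switch ports: {total_switch_ports}")
print(f"Ports taken by GPUs (if fully wired): {gpu_facing_ports}")
# The article does not say how the remaining ports are used.
print(f"Ports left over: {remaining_ports}")
```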

Notably here, the topology of the DGX-2 means that all 16 GPUs are able to pool their memory into a unified memory space, though with the usual tradeoffs involved if going off-chip. Not unlike the Tesla V100 memory capacity increase then, one of NVIDIA’s goals here is to build a system that can keep in-memory workloads that would be too large for an 8 GPU cluster. Providing one such example, NVIDIA is saying that the DGX-2 is able to complete the training process for FAIRSeq – a neural network model for language translation – 10x faster than a DGX-1 system, bringing it down to less than two days total.

Otherwise, similar to its DGX-1 counterpart, the DGX-2 is designed to be a powerful server in its own right. We’re still waiting on the final specifications, but NVIDIA has already told us that it’s based around a pair of Xeon Platinum CPUs, which in turn can be paired with up to 1.5TB of RAM. On the storage side the DGX-2 comes with 30TB of NVMe-based solid state storage, which can be further expanded to 60TB. And for clustering or further inter-system communications, it also offers InfiniBand and 100GigE connectivity.

| NVIDIA DGX-2 Specifications | |
|---|---|
| CPUs | 2x Intel Xeon Platinum (Skylake-SP) |
| GPUs | 16x NVIDIA Tesla V100 |
| System Memory | Up To 1.5 TB DDR4 (LRDIMM) |
| GPU Memory | 512GB HBM2 (16x 32GB) |
| Storage | 30TB NVMe, Upgradable to 60TB |
| Networking | 8x InfiniBand EDR/100GigE |
| Power | 10,000 Watts |
| Size | ? |
| GPU Throughput | FP16: 480 TFLOPs; FP32: 240 TFLOPs; FP64: 120 TFLOPs; Tensor (Deep Learning): 1.92 PFLOPs |
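As a quick sanity check on the table, the system-level throughput figures can be divided back down to a per-GPU number. This sketch assumes the totals are a plain 16x multiple of a single Tesla V100's rating, which is how the GPU memory row is expressed but is not stated for the throughput row.

```python
GPUS = 16  # Tesla V100 GPUs in a DGX-2, per the table

# System-level throughput from the table, in TFLOPs (1.92 PFLOPs = 1920 TFLOPs).
system_tflops = {
    "FP16": 480,
    "FP32": 240,
    "FP64": 120,
    "Tensor": 1920,
}

# Assumption: totals scale linearly with GPU count, so divide evenly.
per_gpu_tflops = {name: total / GPUS for name, total in system_tflops.items()}

# GPU memory row: 16 GPUs with 32GB of HBM2 each.
total_hbm2_gb = GPUS * 32

for name, value in per_gpu_tflops.items():
    print(f"{name}: {value:g} TFLOPs per GPU")
print(f"Total HBM2: {total_hbm2_gb} GB")
```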

Ultimately the DGX-2 is being pitched at an even higher-end segment of the deep-learning market than the DGX-1 is. Priced at $399K for a single system, if you can afford it (and can justify the cost) you’re probably Facebook, Google, or in the same league thereof.

22 Comments


invinciblegod - Tuesday, March 27, 2018 - link

Is it not $1.5 million dollars?

olde94 - Tuesday, March 27, 2018 - link

nope he spent quite a lot of time on saying: only 400k! which is EXTREMELY awesome!

mode_13h - Wednesday, March 28, 2018 - link

Well, it's more than double what a DGX-1 costs. Of course the GFLOPS/$ won't scale linearly, but it's not exactly awesome. You'd better have workloads that won't fit 8x V100's, if you're buying this.

olde94 - Tuesday, March 27, 2018 - link

will this technology only work in the DGX-2 enclosure or can we see consumer cards connected as we had with the sli bridge?

Ryan Smith - Tuesday, March 27, 2018 - link

You wouldn't need an NVSwitch to link consumer cards. You could just directly bridge them using their native NVLinks, like the Quadro GP100/GV100 does. That said, there's currently no sign of NVLink coming to consumer graphics GPUs (it's a lot of pins and a lot of real estate).

mode_13h - Wednesday, March 28, 2018 - link

Titan V has the connector, but it's not enabled.

iteratix - Tuesday, March 27, 2018 - link

...But how does it mine crypto?

Yojimbo - Tuesday, March 27, 2018 - link

Current algorithms don't seem to need fast GPU to GPU communications. I guess if someone designed an algorithm that needed hundreds of GB of memory to be loaded at a time in such a way that it couldn't be broken up into separate chunks when processing...

mode_13h - Wednesday, March 28, 2018 - link

In the Q&A someone asked him about crypto. He said crypto isn't as dependent on system architecture as AI, which is true. I mean, you just have to look at how well rigs do with x1-lane connected GPUs to see that it's not about GPU-GPU connectivity.

He also poured a decent helping of scorn on the whole crypto craze, but I don't know if that was just pandering to their other customers. By now, miners are probably used to all the trash talk.

Yojimbo - Wednesday, March 28, 2018 - link

"In the Q&A someone asked him about crypto. He said crypto isn't as dependent on system architecture as AI, which is true"

I think what he meant by that is that crypto does not benefit from software and system ecosystem development, so NVIDIA is not interested in going after the market. In AI, for instance, the development of CuDNN, TensorRT, their GPU Cloud registry, etc., enables NVIDIA to add value to the sale of their hardware, differentiating their offerings from the competition and creating a platform. That enables more predictable demand and higher margins. The opportunity for that does not exist in crypto. It is just a chip business, and NVIDIA doesn't want to be a chip company.

"He also poured a decent helping of scorn on the whole crypto craze, but I don't know if that was just pandering to their other customers."

I don't think he's just pandering to other customers with his negative statements about crypto. He probably sees crypto as more of a nuisance than anything at the moment. First of all, it doesn't lend itself to product differentiation which would lead to a competitive advantage for NVIDIA if they execute well, as mentioned in my above paragraph. Secondly, it is helping their competition more than it is helping them. Thirdly, it is volatile and unpredictable. Fourthly, that volatility interferes with NVIDIA's ability to address what they view as their long-term and high value markets.