The Next Generation Open Compute Hardware: Tried and Tested

by Johan De Gelas & Wannes De Smet on April 28, 2015 12:00 PM EST

Visiting Facebook's Hardware Labs

We visited Facebook's hardware labs in September, an experience that resembled stepping into the chocolate factory from Charlie and the Chocolate Factory, though the machinery was far less enjoyable to chew on. More importantly, we were already familiar with the 'chocolate': having read the specifications and followed OCP-related news, we could point out and name most of the systems in the labs.

Wannes, Johan, and Matt Corddry, director of hardware engineering, in the Facebook hardware labs

This symbolizes one of the ultimate goals of the Open Compute Project: complete standardization of the datacenter out of commodity components that can be sourced from multiple vendors. And when the standards do not fit your exotic workload, you have a solid foundation to start from. This approach has some pleasant side effects: when working in an OCP-powered datacenter, you could switch jobs to another OCP DC and just carry on doing sysadmin tasks -- you know the system, you have your tools. When migrating from a Dell to an HP environment, for example, the switch will be a larger hurdle due to differentiation by marketing.

Microsoft's Open Cloud Server v2 spec actually goes the extra mile by supplying you with an API specification and implementation in the Chassis controller, giving devops a REST API to manage the hardware.
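
To give a feel for what managing hardware over a REST API looks like in practice, here is a minimal Python sketch that only builds a request URL and does not contact any hardware. The host name, the port, the `GetBladeInfo` command name, and the `bladeId` parameter are all assumptions made for illustration; the actual commands and parameters are defined in Microsoft's chassis manager specification, which is not quoted here.

```python
from urllib.parse import urlencode, urlunsplit


def chassis_manager_url(host, command, port=8000, **params):
    """Build the URL for a chassis manager REST call.

    The port default and every command/parameter name passed in are
    hypothetical placeholders, not values taken from the OCS spec.
    """
    query = urlencode(params)
    return urlunsplit(("https", f"{host}:{port}", f"/{command}", query, ""))


# Hypothetical example: ask for information about the blade in slot 1.
url = chassis_manager_url("chassis-manager.example", "GetBladeInfo", bladeId=1)
print(url)
```

The point of the design is that any tool able to issue an HTTP request can drive the chassis, so the same scripts work regardless of which vendor built the box.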

Intel Decathlete v2, AMD Open 3.0, and Microsoft OCS

Facebook is not the only vendor to contribute open server hardware to the Open Compute Project; Intel and AMD joined soon after the OCP was founded, and last year Microsoft joined the party in a big way as well. The Intel Decathlete is currently in its second incarnation, with Haswell support. Intel uses its Decathlete motherboards, which are compatible with ORv1, to build its reference 1U/2U 19" server implementations. These systems are seen in critical environments, like High Frequency Trading systems, where customers want a server built by the same people who built the CPU and chipset, on the reasoning that it should all work well together.

AMD has its Open 3.0 platform, which we detailed in 2013. This server platform is AMD's way of getting its foot in the door of OCP hyperscale datacenters, certainly when considering price. AMD seems to be taking a bit of a break from improving its regular Opteron x86 CPUs, and we wonder if we might see the company bring its AMD Opteron-A ARM64 platform (dubbed 'Seattle') into the fold.

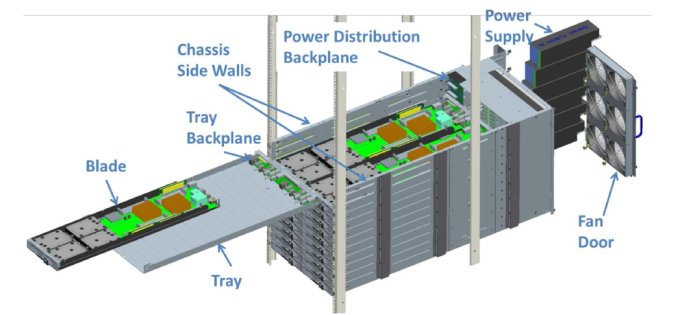

Microsoft brought us its Open Cloud Server (v2), a high-density, blade-like solution for standard 19" racks. These systems power basically all of Microsoft's cloud services (e.g. Azure).

A 12U chassis, equipped with six large 140x140mm fans, six power supplies, and a chassis manager module, carries 24 nodes. Similar to Facebook's servers, there are two node types: one for compute, one for storage. A major difference, however, is that the chassis provides network connectivity at the back using a 40 Gbit/s QSFP+ port and a 10 Gbit/s SFP+ port for each node. Because the compute nodes mate with the connectors inside the chassis, the actual network cabling can remain fixed. The same principle is applied to the storage nodes, where the actual SAS connectors are found on the chassis, eliminating the need for cabling runs to connect the compute and JBOD nodes.

A V2 compute node comes with up to two Intel Haswell CPUs, with a 120 Watt maximum thermal allowance, paired with the C610 chipset and sharing 16 DDR4 DIMM slots, for a total memory capacity of 512GB. Storage can be provided through one of the 10 SATA ports or via NVMe flash storage. The enclosure provides space for four 3.5" hard disks, four 2.5" SSDs (though space is shared between two of the bottom SSD slots), and an NVMe card. A mezzanine header allows you to plug in a network controller or a SAS controller card. The node can be managed through the AST1050 BMC, which provides standard IPMI functionality; in addition, a serial console for each node is available at the chassis manager.
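
The density figures quoted for the chassis and the compute node can be checked with a little arithmetic. The sketch below uses only numbers from the text (12U, 24 nodes, 16 DIMM slots, 512GB per node); the per-DIMM size is derived rather than quoted, and the chassis total assumes, purely for illustration, that all 24 slots hold compute nodes with fully populated memory.

```python
# Figures quoted in the article text.
CHASSIS_HEIGHT_U = 12
NODES_PER_CHASSIS = 24
DIMM_SLOTS_PER_NODE = 16
MAX_MEMORY_PER_NODE_GB = 512


def dimm_size_gb(max_memory_gb, slots):
    """Largest DIMM implied by the stated maximum memory capacity."""
    return max_memory_gb / slots


def chassis_memory_tb(nodes, memory_per_node_gb):
    """Total memory if every slot held a fully populated compute node
    (an assumption; a real chassis mixes compute and storage nodes)."""
    return nodes * memory_per_node_gb / 1024


nodes_per_u = NODES_PER_CHASSIS / CHASSIS_HEIGHT_U
per_dimm = dimm_size_gb(MAX_MEMORY_PER_NODE_GB, DIMM_SLOTS_PER_NODE)
total_tb = chassis_memory_tb(NODES_PER_CHASSIS, MAX_MEMORY_PER_NODE_GB)

print(f"{nodes_per_u:.0f} nodes per U, {per_dimm:.0f}GB per DIMM, "
      f"up to {total_tb:.0f}TB per chassis")
```

In other words, the chassis fits two nodes per rack unit, and the 512GB ceiling corresponds to 32GB modules in every slot.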

The storage node is a JBOD in which ten 3.5" SATA III hard disks can be placed, all connected to a SAS expander board. The expander board then connects to the SAS connectors on the tray backplane, where the disks can be linked to a compute node.

26 Comments

Black Obsidian - Tuesday, April 28, 2015

I've always hoped for more in-depth coverage of the OpenCompute initiative, and this article is absolutely fantastic. It's great to see a company like Facebook innovating and contributing to the standard just as much as (if not more than) the traditional hardware OEMs.

ats - Tuesday, April 28, 2015

You missed the best part of the MS OCS v2 in your description: support for up to 8 M.2 x4 PCIe 3.0 drives!

nmm - Tuesday, April 28, 2015

I have always wondered why they bother with a bunch of little PSUs within each system or rack to convert AC power to DC. Wouldn't it make more sense to just provide DC power to the entire room/facility, then use less expensive hardware with no inverter to convert it to the needed voltages near each device? This type of configuration would get along better with battery backups as well, allowing systems to run much longer on battery by avoiding the double conversion between the battery and server.

extide - Tuesday, April 28, 2015

The problem with doing a datacenter wide power distribution is that at only 12v, to power hundreds of servers you would need to provide thousands of amps, and it is essentially impossible to do that efficiently. Basically, the way FB is doing it is the way to go -- you keep the 12v current to reasonable levels and only have to pass that high current a reasonable distance. Remember 6KW at 12v is already 500A !! And that's just for HALF of a rack.

tspacie - Tuesday, April 28, 2015

Telcos have done this at -48VDC for a while. I wonder, did data center power consumption get too high to support this, or maybe just the big data centers don't have the same continuous up time requirements? Anyway, love the article.
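
The current figures traded in this part of the thread follow directly from I = P / V. A minimal Python sketch, using the 6 kW half-rack load and the 12 V and -48 V levels mentioned in the comments above (the function name is just an illustrative choice):

```python
def current_amps(power_watts, voltage):
    """DC current needed to deliver a given power: I = P / V.

    The sign of the voltage (e.g. telco -48 V) only reflects polarity,
    so the magnitude is used.
    """
    return power_watts / abs(voltage)


HALF_RACK_WATTS = 6000  # the 6 kW half-rack figure quoted in the thread

for volts in (12, -48):
    amps = current_amps(HALF_RACK_WATTS, volts)
    print(f"{HALF_RACK_WATTS} W at {volts} V -> {amps:.0f} A")
```

At 12 V the half rack draws 500 A, while the same load on a -48 V plant needs 125 A, which is why the distance a 12 V bus can reasonably cover is so short.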

Notmyusualid - Wednesday, April 29, 2015

Indeed. In the submarine cable industry (your internet backbone), ALL our equipment is -48v DC. Even down to routers / switches (which are fitted with DC power modules, rather than your normal 100 - 250v AC units one expects to see).

Only the management servers run from AC power (not my decision), and the converters that charge the DC plant.

But 'extide' has a valid point - the lower voltage and higher currents require huge cabling. Once an electrical contractor dropped a piece of metal conduit from high over the copper 'bus bars' in the DC plant. Need I describe the fireworks that resulted?

toyotabedzrock - Wednesday, April 29, 2015

48 v allows 4 times the power at a given amperage. 12vdc doesn't like to travel far and at the needed amperage would require too much expensive copper.

I think a pair of square wave pulsed DC at higher voltage could allow them to just use a transformer and some capacitors for the power supply shelf. The pulses would have to be directly opposing each other.

Jaybus - Tuesday, April 28, 2015

That depends. The low voltage DC requires a high current, and so correspondingly high line loss. Line loss is proportional to the square of the current, so the 5V "rail" will have more than 4x the line loss of the 12V "rail", and the 3.3V rail will be high current and so high line loss. It is probably NOT more efficient than a modern PS. But what it does do is move the heat generating conversion process outside of the chassis, and more importantly, frees up considerable space inside the chassis.

Menno vl - Wednesday, April 29, 2015

There are already a lot of things going on in this direction. See http://www.emergealliance.org/ and especially their 380V DC white paper.

Going DC all the way, but at a higher voltage to keep the demand for cables reasonable. Switching 48VDC to 12VDC or whatever you need requires very similar technology as switching 380VDC to 12VDC. Of course the safety hazards are different and it is similar when compared to mixing AC and DC which is a LOT of trouble.
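
The argument for a higher distribution voltage can be put in numbers using the square-law relation mentioned earlier in the thread. A rough Python sketch, assuming the same delivered power and the same cable resistance at every voltage (a simplification, since real installations size the cable to the current): the resistive loss is I²R with I = P / V, so the loss relative to a 12 V feed scales as (12 / V)².

```python
def relative_line_loss(voltage, reference_voltage=12.0):
    """Resistive line loss relative to a reference voltage.

    Assumes equal delivered power and equal cable resistance, so
    P_loss = I^2 * R with I = P / V gives a ratio of (V_ref / V)^2.
    """
    return (reference_voltage / voltage) ** 2


for volts in (3.3, 5, 12, 48, 380):
    print(f"{volts:>5} V: {relative_line_loss(volts):.4f}x the 12 V loss")
```

Under these assumptions a 5 V rail loses 5.76 times as much as a 12 V rail, a 48 V feed loses one sixteenth, and a 380 V feed loses roughly a thousandth, which is the motivation for distributing at high voltage and converting close to the load.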

Casper42 - Monday, May 4, 2015

Indeed, HP already makes 277VAC and 380VDC Power Supplies for both the Blades and Rackmounts. 277VAC is apparently what you get when you split 480VAC 3-phase into individual phases.
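
The 277 V figure can be verified quickly: in a wye-connected three-phase system, the voltage from each phase to neutral is the line-to-line voltage divided by √3. A short Python check, assuming a wye (star) configuration with a neutral:

```python
import math


def phase_to_neutral(line_to_line_volts):
    """Phase-to-neutral voltage of a wye-connected three-phase system."""
    return line_to_line_volts / math.sqrt(3)


print(f"{phase_to_neutral(480):.1f} V")
```

This comes out at just over 277 V, matching the rating on the power supplies mentioned above.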